Case-Study: Performance Porting of a GPU Application from OpenCL to Metal

Problem

Our client had a remarkably high-quality GPU implementation of their unique algorithm, but it was optimised mainly for AMD and NVIDIA hardware. Although their software ran on Apple devices, its performance was deemed sub-optimal. Because creators often prefer Apple hardware, this was a problem of high business value for our client.

Solution

We developed a reasonably generic and flexible framework covering the subset of OpenCL functionality that the client’s code used. We mapped these features to equivalent Metal functionality, moving the algorithm’s execution closer to the metal (pun intended).

Our conversion tool ultimately became sophisticated enough to convert 100% of the original codebase.
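To give a feel for the kind of feature mapping involved, here is a minimal Python sketch. It is not the conversion tool described above: the tables `QUALIFIER_MAP` and `BARRIER_MAP` and the function `translate` are hypothetical names chosen for illustration, and the sketch covers only a handful of well-known correspondences between OpenCL C and the Metal Shading Language (address-space qualifiers and work-group barriers).

```python
import re

# Hypothetical, hand-picked subset of OpenCL C -> Metal Shading Language
# correspondences. This is an illustration, not the actual tool's table.
QUALIFIER_MAP = {
    "__kernel": "kernel",        # kernel entry point
    "__global": "device",        # device (global) memory
    "__local": "threadgroup",    # memory shared within a work-group
    "__constant": "constant",    # read-only constant memory
    "__private": "thread",       # per-work-item memory
}

BARRIER_MAP = {
    "barrier(CLK_LOCAL_MEM_FENCE)":
        "threadgroup_barrier(mem_flags::mem_threadgroup)",
    "barrier(CLK_GLOBAL_MEM_FENCE)":
        "threadgroup_barrier(mem_flags::mem_device)",
}

# Match whole qualifier tokens only.
_QUALIFIER_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(k) for k in QUALIFIER_MAP) + r")\b"
)


def translate(source: str) -> str:
    """Rewrite the mapped OpenCL tokens in `source` to their Metal spelling."""
    # Barriers first: they are matched as exact call expressions.
    for opencl_call, metal_call in BARRIER_MAP.items():
        source = source.replace(opencl_call, metal_call)
    # Then the address-space and kernel qualifiers.
    return _QUALIFIER_RE.sub(lambda m: QUALIFIER_MAP[m.group(0)], source)


if __name__ == "__main__":
    opencl_src = (
        "__kernel void scale(__global float* data, __local float* tmp) "
        "{ barrier(CLK_LOCAL_MEM_FENCE); }"
    )
    print(translate(opencl_src))
```

Plain token substitution like this only goes so far. Work-item queries such as `get_global_id(0)`, for example, have no drop-in function counterpart in Metal, where the thread position is typically received as a kernel parameter marked `[[thread_position_in_grid]]`. Handling such constructs requires real analysis of the source, which is presumably where most of the effort in a full conversion goes.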

Result

Our updated version of the client’s software demonstrated a many-fold speed-up over the original code, in some cases over 300%, on the target GPUs: the smaller M1 and the larger M2.

As an added benefit, our solution ended up being more than just a one-time port. Thanks to its internal structure, it retained the single-source approach of the original project, resulting in highly maintainable, future-proofed code that supports two backends from now on.

Related Posts

Case-Study: Text-to-Speech

12/03/2024

Case-Study: NLP Applications for Stock Market Prediction

6/06/2023

Case-Study: Performance Modelling of AI Inference on GPUs

15/05/2023

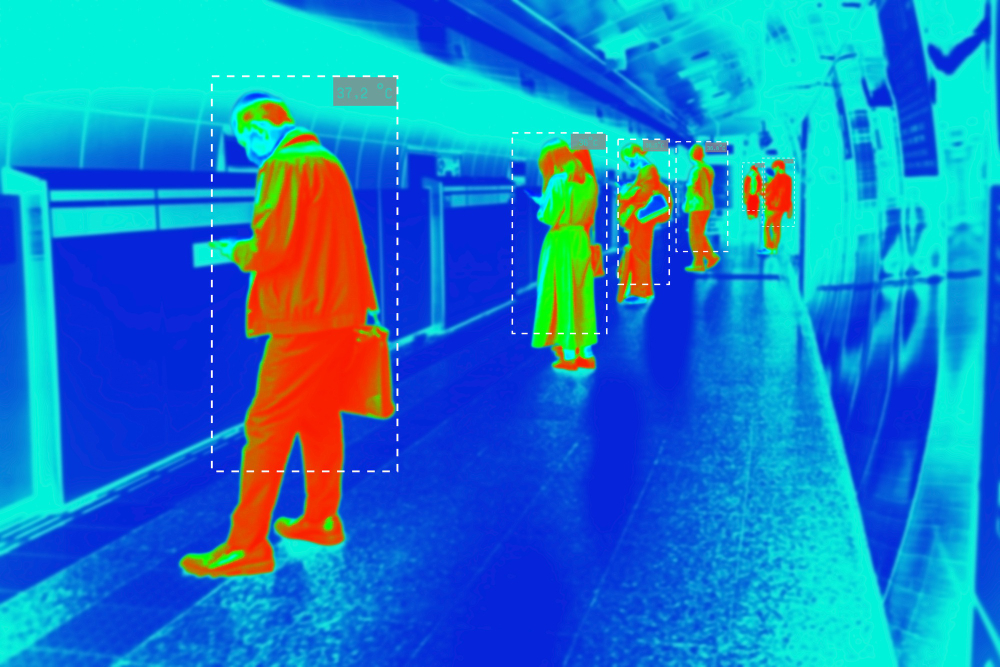

Case Study: Multi-Target Multi-Camera Tracking

10/02/2023

Case-Study: Action Recognition

11/01/2023

Consulting: AI for Personal Training

2/11/2022

Case-Study: A Generative Approach to Anomaly Detection

22/05/2022