Large-Scale SKU Product Recognition

For a multinational startup building autonomous shopping cart technology in North American grocery retail, we designed a hierarchical product recognition system covering several thousand SKU classes, including roughly a thousand groups of visually similar products. Manual identification had previously absorbed a significant share of catalogue operations.

The Challenge

The client needed a vision model that could reliably identify products from a retail catalogue of several thousand SKU classes in real time, from camera images captured inside a moving shopping cart. Standard classification architectures perform well at modest class counts but degrade at scale — and the dataset compounded that problem in two additional ways.

Scale: thousands of classes.

A catalogue of several thousand SKUs pushes standard CNN and ViT architectures into a regime where accuracy degrades — both because the model must separate increasingly fine-grained classes and because few-shot learners deteriorate when the candidate class set grows beyond a few hundred options.

Many classes have too few labelled samples.

Standard fine-tuning assumes enough labelled samples per class. A large share of the catalogue fell short of that, which made straightforward supervised training unreliable for those classes regardless of architecture.

A third of the catalogue looks nearly identical across classes.

Roughly a third of the catalogue consisted of products that shared packaging design, colour, and layout — differentiated only by minor label text or sub-variant markings that may be occluded or unreadable at cart-camera distance and angle.

Project Timeline

From dataset audit to a two-stage hierarchical classifier operating across thousands of SKUs

Dataset Audit & Filtering

Audited the full catalogue for class distribution, sample counts, and visual similarity groupings. Filtered to a working subset with minimum viable sample counts per class to establish a reliable training and validation baseline.
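The filtering step can be illustrated with a minimal sketch. The SKU ids, image ids, and the `min_samples` value below are hypothetical; the project's actual threshold is not stated here.

```python
from collections import Counter

def filter_classes(labels, min_samples):
    """Return the set of class ids that have at least `min_samples` labelled images."""
    counts = Counter(labels)
    return {cls for cls, n in counts.items() if n >= min_samples}

def split_by_support(samples, min_samples):
    """Partition (image_id, sku) pairs into a working subset and a held-back remainder."""
    keep = filter_classes([sku for _, sku in samples], min_samples)
    working = [s for s in samples if s[1] in keep]
    held_back = [s for s in samples if s[1] not in keep]
    return working, held_back

# Toy data: hypothetical image ids and SKU ids, not taken from the real catalogue.
samples = [
    ("img0", "sku_a"), ("img1", "sku_a"), ("img2", "sku_a"),
    ("img3", "sku_b"),
    ("img4", "sku_c"), ("img5", "sku_c"),
]

# min_samples=2 is an illustrative value only.
working, held_back = split_by_support(samples, min_samples=2)
print(sorted({sku for _, sku in working}), held_back)
```

Keeping the held-back remainder as an explicit list, rather than discarding it, makes it easy to see which classes re-enter the working subset once more labels arrive.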

Embedding Pipeline (DINO)

Used a pretrained DINO vision transformer as a frozen encoder to produce dense image embeddings, with embeddings precomputed and cached to make training fast. No encoder fine-tuning was required at this stage.

Superclass Construction

Computed per-class prototype vectors from the embeddings, reduced dimensionality with UMAP, then applied agglomerative clustering to group classes into approximately 100 superclasses. Retail catalogues have no natural taxonomy — this step had to be derived from the data itself.
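As a rough illustration of this step, the sketch below computes mean prototypes and merges classes bottom-up. It is written under several assumptions: the UMAP reduction is omitted so that the example stays dependency-free, and the average linkage, the cosine distance, the 2-D toy vectors, and the SKU names are all illustrative choices rather than the project's configuration.

```python
import math

def prototype(vectors):
    """Mean of a class's embeddings, one value per dimension."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def agglomerate(prototypes, n_clusters):
    """Naive average-linkage agglomerative clustering over class prototypes.

    prototypes: dict mapping class id -> vector. Returns a list of sets of class ids.
    """
    clusters = [{cid} for cid in prototypes]

    def linkage(c1, c2):
        dists = [cosine_distance(prototypes[a], prototypes[b]) for a in c1 for b in c2]
        return sum(dists) / len(dists)

    while len(clusters) > n_clusters:
        # Merge the closest pair of clusters, then repeat.
        i, j = min(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda pair: linkage(clusters[pair[0]], clusters[pair[1]]),
        )
        clusters[i] |= clusters[j]
        del clusters[j]
    return clusters

# Toy 2-D "embeddings"; real encoder outputs have far more dimensions.
embeddings = {
    "cola_330ml": [[1.0, 0.1], [0.9, 0.0]],
    "cola_zero_330ml": [[0.95, 0.15], [1.0, 0.2]],
    "oat_milk_1l": [[0.0, 1.0], [0.1, 0.9]],
    "soy_milk_1l": [[0.05, 1.0], [0.0, 0.8]],
}
prototypes = {sku: prototype(vecs) for sku, vecs in embeddings.items()}
superclasses = agglomerate(prototypes, n_clusters=2)
print(superclasses)
```

This naive loop recomputes every pairwise linkage on each merge, which is fine for a toy example but too slow for thousands of classes; a library implementation of agglomerative clustering would be the natural choice at catalogue scale.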

Coarse Model Training

Trained a coarse-grained classifier on the ~100 superclasses. Validated separately to quantify the benefit of the hierarchical split before coupling the two stages end-to-end.

Fine-Grained FSL Head & Evaluation

Trained a few-shot learning head conditioned on the coarse output, operating over a reduced candidate set per superclass. Evaluated end-to-end at both ~1k and ~2k class scales, and on the visually similar SKU subset, to characterise accuracy across the difficulty spectrum.
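The routing logic of coarse-to-fine inference can be sketched as follows. This is not the trained models described above: both stages are replaced here by a nearest-prototype lookup with cosine similarity, purely as a stand-in, and every name, vector, and superclass label is hypothetical.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(query, prototypes, k=1):
    """Return up to k labels, ranked by cosine similarity to the query."""
    ranked = sorted(
        prototypes,
        key=lambda label: cosine_similarity(query, prototypes[label]),
        reverse=True,
    )
    return ranked[:k]

def predict_sku(query, superclass_prototypes, sku_prototypes, members, k=3):
    """Stage 1 picks a superclass; stage 2 ranks only that superclass's SKUs."""
    coarse = nearest(query, superclass_prototypes, k=1)[0]
    candidates = {sku: sku_prototypes[sku] for sku in members[coarse]}
    return coarse, nearest(query, candidates, k=k)

# Hypothetical toy data in 2-D.
sku_prototypes = {
    "cola_330ml": [0.95, 0.05],
    "cola_zero_330ml": [0.97, 0.18],
    "oat_milk_1l": [0.05, 0.95],
    "soy_milk_1l": [0.03, 0.90],
}
members = {
    "soft_drinks": ["cola_330ml", "cola_zero_330ml"],
    "plant_milk": ["oat_milk_1l", "soy_milk_1l"],
}
superclass_prototypes = {"soft_drinks": [0.96, 0.12], "plant_milk": [0.04, 0.92]}

coarse, ranked = predict_sku([0.9, 0.2], superclass_prototypes, sku_prototypes, members)
print(coarse, ranked)
```

The fine stage never sees SKUs outside the chosen superclass, so its candidate set stays small no matter how large the full catalogue grows. The cost of this hard routing is that a wrong coarse decision cannot be recovered downstream.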

The Solution

We designed a hierarchical coarse-to-fine architecture that addresses scale, imbalance, and visual similarity as distinct sub-problems — rather than treating them as a single classification challenge that a larger model would eventually solve. Each component was chosen for what it specifically contributes, not for novelty.

Few-shot learners degrade once the candidate class set passes a few hundred options. A coarse classifier that narrows the candidates first is the necessary architectural split — and the reason the two stages together outperform either model running alone. This is consistent with our broader experience building production computer vision systems, where the right decomposition usually matters more than the choice of any single model.

Precomputing embeddings from a frozen DINO encoder once, rather than running the encoder on every training step, is the practical default when the encoder needs no fine-tuning. The system inherits the rich visual representations of a pretrained vision transformer without the data volume required to train an encoder from scratch. Training cycles shorten substantially because most of the compute moves out of the inner loop.
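A minimal sketch of the caching pattern, under loud assumptions: `fake_encoder` is a hypothetical stand-in that hashes bytes into a short vector (it is not DINO), and the `EmbeddingCache` class, the pickle file format, and the image ids are illustrative choices only.

```python
import hashlib
import pickle
import tempfile
from pathlib import Path

def fake_encoder(image_bytes):
    """Hypothetical stand-in for a frozen encoder forward pass (NOT DINO)."""
    digest = hashlib.sha256(image_bytes).digest()
    return [b / 255.0 for b in digest[:8]]

class EmbeddingCache:
    """Computes each embedding at most once and persists the results to disk."""

    def __init__(self, path, encoder):
        self.path = Path(path)
        self.encoder = encoder
        self.encoder_calls = 0
        # Load previously cached embeddings if the file exists.
        self.store = pickle.loads(self.path.read_bytes()) if self.path.exists() else {}

    def get(self, image_id, image_bytes):
        if image_id not in self.store:
            self.store[image_id] = self.encoder(image_bytes)
            self.encoder_calls += 1
        return self.store[image_id]

    def save(self):
        self.path.write_bytes(pickle.dumps(self.store))

images = {"img0": b"pixels-0", "img1": b"pixels-1"}
cache_path = Path(tempfile.mkdtemp()) / "embeddings.pkl"

cache = EmbeddingCache(cache_path, fake_encoder)
for epoch in range(3):
    # Every epoch reads embeddings; only the first one pays for the encoder.
    batch = [cache.get(image_id, data) for image_id, data in images.items()]
cache.save()

# A later training run reloads the cache and never calls the encoder.
reloaded = EmbeddingCache(cache_path, fake_encoder)
print(cache.encoder_calls, reloaded.encoder_calls)
```

Keying the cache by image id assumes the image behind an id never changes. The pattern also only holds while the encoder stays frozen: any encoder fine-tuning would invalidate the cached vectors.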

Retail catalogues have no natural product taxonomy, so the grouping has to be derived from the data itself. We computed per-class prototype vectors from DINO embeddings, reduced dimensionality with UMAP, and applied agglomerative clustering to construct the superclasses. This adapts reference architectures, which typically assume a pre-existing hierarchy.

Technical Specifications

The Outcome

The hierarchical classifier reached above 95% end-to-end accuracy on the ~1,000-class evaluation set. At ~2,000 classes — a scale at which flat classifiers typically show significant degradation — the system still achieved above 83% end-to-end. On the visually similar SKU subset, accuracy came in above 87% exact match (above 94% at top-3), confirming that the coarse-to-fine split was doing meaningful work on the hardest part of the catalogue. The result reduced the share of catalogue operations requiring manual identification — the primary business metric the engagement was scoped around.
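For readers unfamiliar with the two metrics quoted above, exact match is top-1 accuracy, and top-3 counts a prediction as correct when the true SKU appears anywhere in the three highest-ranked candidates. A minimal sketch follows; the ranked lists and labels are invented toy data, not project results.

```python
def top_k_accuracy(ranked_predictions, truths, k):
    """Fraction of samples whose true label is among the first k ranked predictions."""
    hits = sum(
        1 for ranked, truth in zip(ranked_predictions, truths) if truth in ranked[:k]
    )
    return hits / len(truths)

# Toy data only: four samples whose true label is always "sku_a".
ranked_predictions = [
    ["sku_a", "sku_b", "sku_c"],  # correct at rank 1
    ["sku_b", "sku_a", "sku_c"],  # correct at rank 2
    ["sku_c", "sku_d", "sku_a"],  # correct at rank 3
    ["sku_d", "sku_e", "sku_f"],  # true label missing, e.g. after a wrong coarse route
]
truths = ["sku_a", "sku_a", "sku_a", "sku_a"]

exact_match = top_k_accuracy(ranked_predictions, truths, k=1)
top3 = top_k_accuracy(ranked_predictions, truths, k=3)
print(exact_match, top3)  # 0.25 0.75
```

If the coarse stage routes hard, as assumed in this sketch, a sample sent to the wrong superclass cannot contain the true SKU in its candidate list, so it counts as a miss at every k when measured end-to-end.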

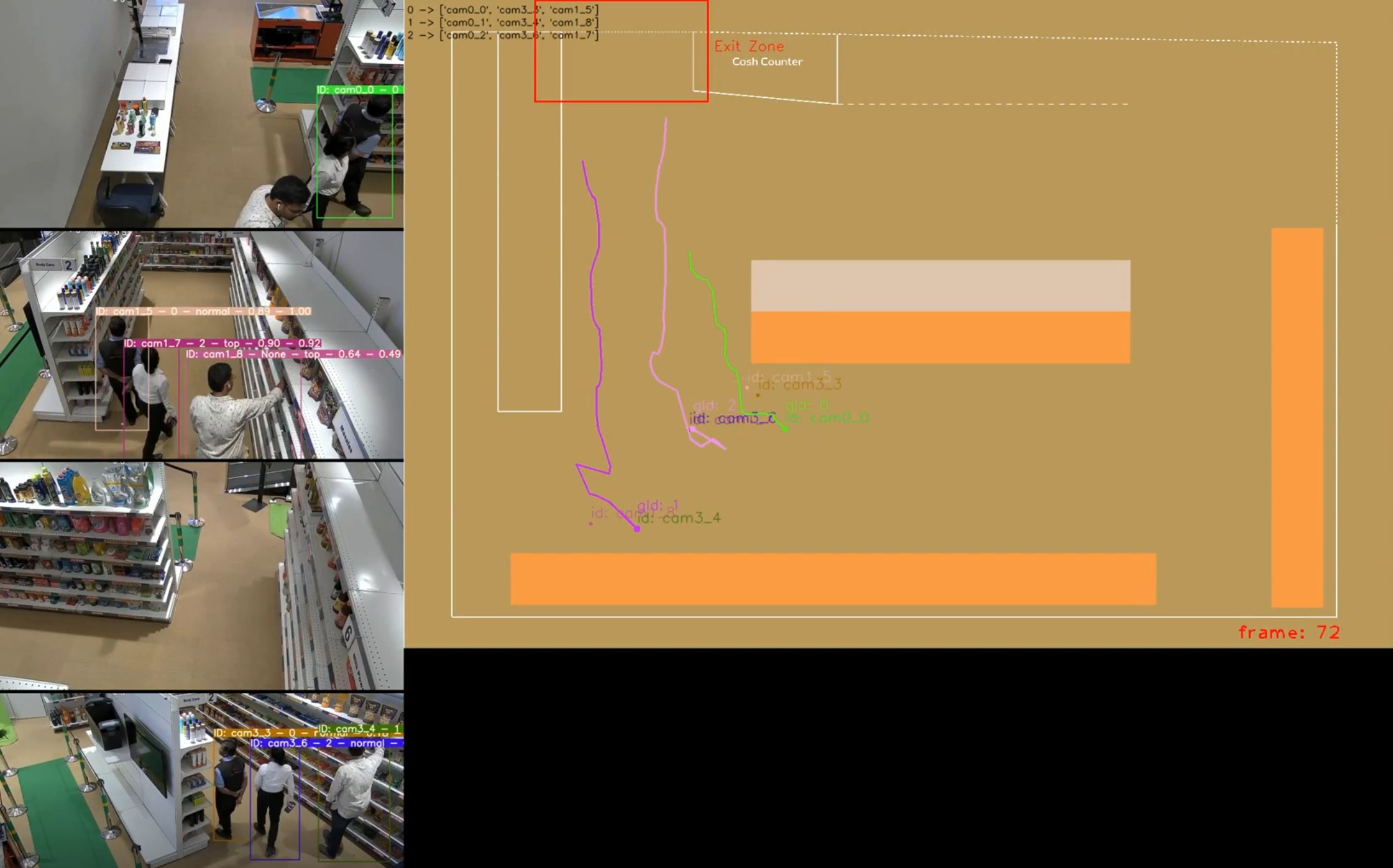

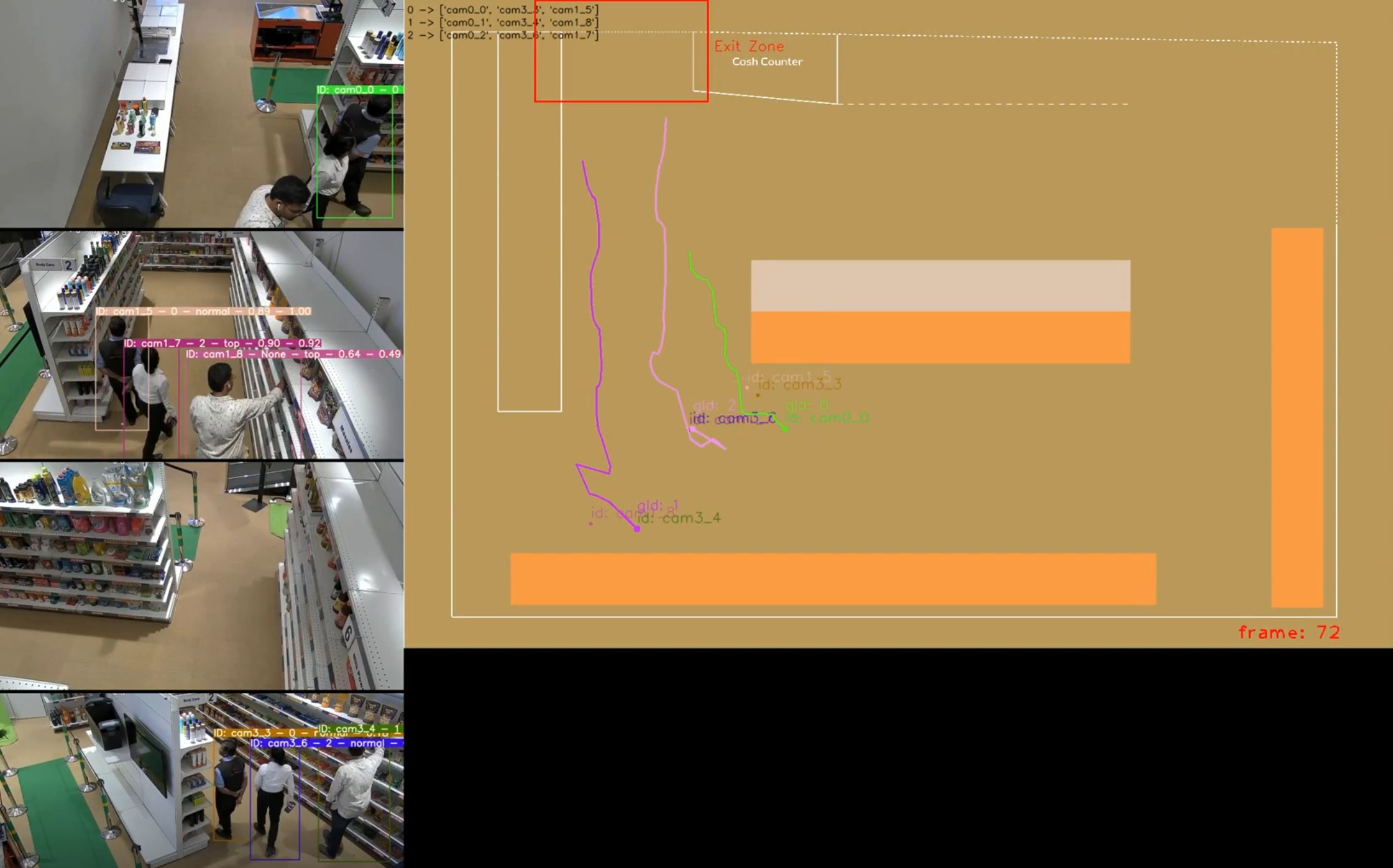

Two boundaries are worth naming. First, pushing past 90% at the ~2,000-class scale likely requires another hierarchy layer rather than further tuning of the existing two stages, because the per-superclass candidate set has grown large enough to start dragging the few-shot head back into its degradation regime. Second, classes the model has never seen remain out of scope until they enter the training set through the labelling loop; accuracy on truly unseen classes is not what this architecture optimises for.

This workstream was one component of a broader multi-year smart retail engagement that also included in-cart object tracking, multi-camera tracking, and security action recognition.

Key Achievements

Above 95% end-to-end accuracy at ~1,000-class scale on the filtered validation set

Above 83% end-to-end accuracy at ~2,000-class scale, where flat classifiers degrade significantly

Above 87% exact-match accuracy on the visually similar SKU subset (above 94% top-3)

Data-driven superclass construction via UMAP + agglomerative clustering — no external taxonomy required

Replaced a significant share of manual catalogue identification work — the primary business metric the client tracked

Building a Product Recognition System at Scale?

Large-catalogue product recognition is a structural problem more than a model-tuning problem. The architecture decisions — where to split the classifier, what to freeze, how to derive the taxonomy — tend to matter more than the choice of any single model.