Why does the framework matter more than the model?

An AI agent’s quality depends primarily on three things: the reasoning model that powers it, the tools it can access, and the orchestration framework that manages its execution flow. Most teams spend their evaluation effort on model selection (GPT-4 vs Claude vs Gemini vs Llama) and minimal effort on framework selection. This is backwards. The model provides the intelligence; the framework determines whether that intelligence can be reliably deployed. In practice, teams that adopt a structured agent framework tend to encounter fewer production failures than teams that build custom orchestration from raw API calls.

A well-chosen framework provides: structured tool invocation (the agent calls tools through typed interfaces, not through generated text that might be malformed), execution state management (the agent’s progress through a multi-step task is tracked and recoverable), error handling (tool failures and model errors are caught and handled rather than cascading), observability (every step is logged and traceable), and cost control (token usage is monitored and bounded). A poorly chosen framework — or no framework at all (raw API calls with custom orchestration) — requires building all of these capabilities from scratch.
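To make "structured tool invocation" concrete, the following is a minimal sketch of validating a model-generated tool call against a declared schema before running it. It uses only the Python standard library and is not the API of any framework discussed here; the names `Tool`, `ToolCallError`, and `invoke`, and the JSON shape `{"tool": ..., "args": ...}`, are assumptions made for illustration.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class Tool:
    """A tool with a declared parameter schema (argument name -> expected type)."""
    name: str
    params: Dict[str, type]
    func: Callable[..., Any]


class ToolCallError(ValueError):
    """Raised when a model-generated tool call does not match the schema."""


def invoke(tools: Dict[str, Tool], raw_call: str) -> Any:
    """Parse and validate a model-generated tool call, then execute it."""
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError as exc:
        raise ToolCallError(f"tool call is not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ToolCallError("tool call must be a JSON object")

    name = call.get("tool")
    if not isinstance(name, str) or name not in tools:
        raise ToolCallError(f"unknown tool: {name!r}")
    tool = tools[name]

    args = call.get("args", {})
    if not isinstance(args, dict) or set(args) != set(tool.params):
        raise ToolCallError(f"{name} expects arguments {sorted(tool.params)}")
    for key, expected in tool.params.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(f"argument {key!r} must be {expected.__name__}")

    # Only a fully validated call reaches the tool implementation.
    return tool.func(**args)


tools = {"add": Tool("add", {"a": int, "b": int}, lambda a, b: a + b)}
total = invoke(tools, '{"tool": "add", "args": {"a": 2, "b": 3}}')
```

A malformed call (wrong argument type, unknown tool, invalid JSON) raises `ToolCallError` before any tool code runs, which is the property the typed interface is meant to provide.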

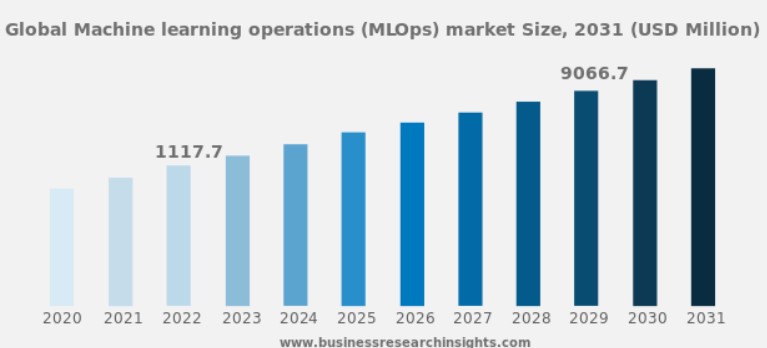

LangChain reports over 90,000 GitHub stars and 200+ integrations as of 2024, making it the most widely adopted agent framework. A 2024 survey by AI Engineer Foundation found that 45% of production agent deployments use LangChain/LangGraph, 20% use custom orchestration, 15% use AutoGen, and the remainder use other frameworks.

The major frameworks compared

LangChain / LangGraph (v0.3 / v0.2, as of January 2026)

LangChain is the most widely adopted AI agent framework, with the largest community and the broadest integration ecosystem. LangGraph extends LangChain with explicit graph-based workflow definitions — nodes represent processing steps, edges represent transitions, and conditional logic determines which edges are followed.
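The node/edge/conditional-edge idea can be illustrated without the library. The sketch below is a plain-Python stand-in, not LangGraph's actual API: the `Workflow` class, the `END` sentinel, and the `draft`/`review` steps are hypothetical names chosen for this example. Each node transforms a state dictionary, and a router function decides which edge to follow next.

```python
from typing import Callable, Dict

State = dict
Node = Callable[[State], State]
Router = Callable[[State], str]

END = "__end__"  # sentinel node name that stops the workflow


class Workflow:
    """A tiny graph executor: nodes are steps, routers pick the outgoing edge."""

    def __init__(self, entry: str) -> None:
        self.entry = entry
        self.nodes: Dict[str, Node] = {}
        self.routers: Dict[str, Router] = {}

    def add_node(self, name: str, fn: Node, router: Router) -> None:
        self.nodes[name] = fn
        self.routers[name] = router

    def run(self, state: State, max_steps: int = 20) -> State:
        current, steps = self.entry, 0
        while current != END:
            if steps >= max_steps:
                raise RuntimeError("step limit reached; possible loop in graph")
            state = self.nodes[current](state)
            current = self.routers[current](state)  # conditional edge
            steps += 1
        return state


def draft(state: State) -> State:
    return {**state, "attempts": state.get("attempts", 0) + 1}


def review(state: State) -> State:
    # Hypothetical rule: approve on the second attempt.
    return {**state, "approved": state["attempts"] >= 2}


wf = Workflow(entry="draft")
wf.add_node("draft", draft, lambda s: "review")
wf.add_node("review", review, lambda s: END if s["approved"] else "draft")
result = wf.run({})
```

Because the structure is explicit data rather than control flow buried in prompts, each node and each routing decision can be inspected and tested on its own.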

Strengths. Extensive integration catalogue (200+ tool integrations, vector stores, document loaders). LangGraph’s explicit graph definition makes complex workflows debuggable — the workflow structure is visible and testable. LangSmith provides production-grade observability: execution traces, latency monitoring, cost tracking, and evaluation infrastructure.

Weaknesses. The abstraction layer adds complexity — debugging issues that span multiple LangChain abstractions requires understanding the framework’s internal dispatch logic. The framework evolves rapidly, and breaking changes between versions have been a recurring issue for production deployments. The learning curve for LangGraph’s graph definition syntax is steeper than for simpler sequential frameworks.

Best for: Complex multi-step workflows with conditional branching, production deployments that require observability and evaluation infrastructure, teams that need broad tool integration coverage.

AutoGen (v0.4, as of December 2025)

Microsoft’s AutoGen framework is designed around multi-agent conversation: multiple agents with different roles communicate through structured messages to accomplish tasks collaboratively.

Strengths. The multi-agent conversation model is intuitive for tasks that benefit from role separation (coder + reviewer, researcher + writer, planner + executor). The agent communication protocol is explicit and auditable. Integration with Azure AI services and OpenAI APIs is tight.

Weaknesses. The conversational coordination model can be verbose — agents communicate through full messages rather than structured data, consuming tokens and increasing latency. The coordination failure modes (unbounded loops, hallucinated handoffs, context loss) must be managed through custom conversation management logic that the framework does not fully address. Production observability and deployment tooling are less mature than LangSmith.

Best for: Multi-agent architectures where role-based collaboration is the primary pattern, teams already invested in the Microsoft/Azure ecosystem.

CrewAI (v0.80, as of February 2026)

CrewAI provides a role-based agent framework focused on simplicity: define agents with roles and goals, define tasks with descriptions and expected outputs, and let the framework manage the agent coordination.

Strengths. The abstraction level is high: defining an agent crew requires minimal boilerplate. The role-based metaphor (agents are “crew members” with specific responsibilities) is accessible to non-ML engineers. The framework handles task delegation and result aggregation with reasonable defaults. As of 2024, CrewAI has over 20,000 GitHub stars and reports adoption by over 5,000 development teams (CrewAI documentation).

Weaknesses. The high abstraction level limits control over execution details. When the default coordination behaviour does not match the use case, the customisation options are constrained. The framework is newer and has a smaller community and integration ecosystem than LangChain. Production observability is limited compared to LangSmith.

Best for: Rapid prototyping of multi-agent systems, teams that prioritise development speed over fine-grained control, use cases that fit the role-based delegation pattern naturally.

Custom orchestration (no framework)

This approach builds agent orchestration directly on the model APIs (OpenAI Assistants, Anthropic Tool Use, Gemini Function Calling) without an intermediate framework.

Strengths. Maximum control over every aspect of the agent’s execution. No framework abstraction layer to debug through. No dependency on a third-party framework’s release cycle or design decisions.

Weaknesses. Every production requirement — state management, error handling, observability, cost control, tool type safety — must be built from scratch. The development effort is 3–5× higher than using a framework for the orchestration layer. Maintenance burden increases as the custom orchestration code grows.

Best for: Simple single-tool agents where framework overhead is not justified, organisations with strong infrastructure engineering teams that prefer full control, and cases where existing frameworks do not support the specific orchestration pattern required.

The evaluation criteria

We recommend evaluating frameworks against these production-readiness criteria:

Observability. Can you trace every step of the agent’s execution, including tool inputs/outputs, model prompts/responses, and branching decisions? Can you replay a failed execution to diagnose the root cause? LangGraph + LangSmith currently provides the strongest observability; custom orchestration provides whatever you build.

Error recovery. When a tool call fails (API timeout, malformed response, permission error), does the framework retry, fall back, or crash? When the model produces malformed tool call parameters, does the framework catch and handle the error? LangGraph provides configurable retry and fallback policies. AutoGen and CrewAI have less mature error handling.
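As a rough sketch of what "retry, fall back, or crash" can look like in code, the example below retries transient failures with exponential backoff, skips retries for permanent failures, and then tries a fallback. It is framework-independent; the exception classes, `call_with_recovery`, and its parameters are assumptions for illustration, not part of LangGraph, AutoGen, or CrewAI.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientToolError(Exception):
    """A failure that may succeed on retry, e.g. an API timeout."""


class PermanentToolError(Exception):
    """A failure that retrying cannot fix, e.g. invalid tool parameters."""


def call_with_recovery(
    primary: Callable[[], T],
    fallback: Optional[Callable[[], T]] = None,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry transient errors with backoff, then use the fallback if one exists."""
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return primary()
        except PermanentToolError as exc:
            last_error = exc
            break  # retrying a permanent failure only wastes time and tokens
        except TransientToolError as exc:
            last_error = exc
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
    if fallback is not None:
        return fallback()
    raise RuntimeError("tool call failed and no fallback is configured") from last_error
```

The `sleep` parameter is injectable so tests can run without real delays. When evaluating a framework, the useful question is whether it lets you express this distinction per step or applies one policy to everything.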

Cost control. Can you monitor and limit token usage per agent, per task, and per session? Can you set circuit breakers that terminate agents that enter unbounded loops? This capability is critical for production — a runaway agent can consume thousands of dollars in API costs in minutes.
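A circuit breaker of this kind can be sketched in a few lines. The example below assumes each agent step reports how many tokens it consumed; `TokenBudget`, `BudgetExceeded`, and `run_agent` are hypothetical names, and the limits shown are arbitrary.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when an agent run exceeds its token or step budget."""


@dataclass
class TokenBudget:
    max_tokens: int
    max_steps: int
    tokens_used: int = 0
    steps_taken: int = 0

    def charge(self, tokens: int) -> None:
        """Record one step's usage and trip the breaker if a limit is passed."""
        self.tokens_used += tokens
        self.steps_taken += 1
        if self.tokens_used > self.max_tokens:
            raise BudgetExceeded(f"token limit {self.max_tokens} exceeded")
        if self.steps_taken > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")


def run_agent(step: Callable[[], Tuple[int, bool]], budget: TokenBudget) -> int:
    """Run step() until it reports done; step returns (tokens_used, done)."""
    while True:
        tokens, done = step()
        # Usage is charged after the call, so the run can overshoot the
        # limit by at most one step before it is terminated.
        budget.charge(tokens)
        if done:
            return budget.steps_taken
```

With `TokenBudget(max_tokens=1000, max_steps=50)` and a step that never finishes and costs 300 tokens each time, the run is terminated on the fourth step rather than looping indefinitely. A stricter variant would estimate the cost before each call instead of charging afterwards.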

State persistence. Can the agent’s execution state be persisted and resumed? For long-running tasks (multi-hour research, multi-day workflows), the agent must be able to checkpoint its progress and resume after interruptions. LangGraph provides state persistence through checkpoint storage backends. CrewAI and AutoGen have limited persistence support.
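The checkpoint-and-resume pattern can be illustrated with a JSON file as the storage backend. This is a simplified sketch, not LangGraph's checkpointing API: the function names, the state layout (`next_step`, `results`), and the `stop_after` parameter used to simulate an interruption are all assumptions for this example.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Callable, List, Optional


def save_checkpoint(path: Path, state: dict) -> None:
    """Write state atomically so a crash mid-write cannot corrupt the file."""
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle)
    os.replace(tmp_name, path)  # atomic rename over the previous checkpoint


def load_checkpoint(path: Path) -> dict:
    """Return the saved state, or a fresh state if no checkpoint exists."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"next_step": 0, "results": []}


def run_workflow(
    steps: List[Callable[[], str]],
    path: Path,
    stop_after: Optional[int] = None,
) -> dict:
    """Run steps in order, checkpointing after each one; resume if interrupted."""
    state = load_checkpoint(path)
    executed = 0
    while state["next_step"] < len(steps):
        if stop_after is not None and executed >= stop_after:
            break  # simulate an interruption for demonstration purposes
        state["results"].append(steps[state["next_step"]]())
        state["next_step"] += 1
        save_checkpoint(path, state)
        executed += 1
    return state
```

Calling `run_workflow` a second time with the same path continues from `next_step` instead of repeating completed steps. A production backend would more likely be a database, and step results would need to be serialisable.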

Testing. Can you write unit tests for individual agent steps and integration tests for complete workflows? Can you evaluate agent performance on a test suite of inputs and expected outputs? LangSmith provides evaluation infrastructure; other frameworks require custom test harnesses.
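For the unit-test half of this criterion, a step is easy to test when its tool is passed in as a dependency. The sketch below uses Python's standard `unittest` and `unittest.mock`; `lookup_step` and its state keys are hypothetical and stand in for whatever a single agent step looks like in your framework.

```python
import unittest
from unittest.mock import Mock


def lookup_step(state: dict, search_tool) -> dict:
    """One agent step: query the search tool and record the top result."""
    hits = search_tool(state["query"])
    return {**state, "answer": hits[0] if hits else None}


class LookupStepTest(unittest.TestCase):
    def test_records_top_hit(self):
        tool = Mock(return_value=["doc-1", "doc-2"])  # mocked tool response
        result = lookup_step({"query": "refund policy"}, tool)
        tool.assert_called_once_with("refund policy")
        self.assertEqual(result["answer"], "doc-1")

    def test_handles_no_hits(self):
        tool = Mock(return_value=[])
        result = lookup_step({"query": "unknown"}, tool)
        self.assertIsNone(result["answer"])
```

Saved as a module, these tests run with `python -m unittest`. No model or network call is involved, so they are deterministic and fast; evaluating end-to-end agent quality on a suite of inputs is a separate concern.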

Our framework recommendation approach

We do not recommend a single framework universally. The recommendation depends on the use case complexity, the team’s engineering maturity, and the production requirements:

- Simple single-agent with tools: Custom orchestration or LangChain (without LangGraph)

- Complex multi-step workflow with branching: LangGraph

- Multi-agent collaboration: AutoGen or LangGraph (LangGraph can implement multi-agent patterns through subgraphs)

- Rapid multi-agent prototyping: CrewAI

- Production deployment with full observability: LangGraph + LangSmith

Framework evaluation rubric

Framework versions, features, and community size change rapidly. Use these criteria to evaluate any agentic framework — current or future — independent of a specific release:

- Observability depth. Does the framework provide execution traces with full tool inputs/outputs, model prompts/completions, and branching decisions — or only high-level step summaries? Can you replay a failed run from the trace alone?

- Error recovery granularity. Can you define per-step retry policies, fallback paths, and circuit breakers? Does the framework distinguish between transient failures (API timeouts) and permanent failures (invalid tool parameters)?

- State persistence and resumability. Can execution state be checkpointed to durable storage and resumed after process restarts, infrastructure failures, or deliberate pauses in long-running workflows?

- Cost governance. Does the framework expose token usage per step, per agent, and per session? Can you set hard budget limits that terminate execution before costs exceed a threshold?

- Testing surface area. Can individual agent steps be unit-tested in isolation with mocked tool responses? Can complete workflows be integration-tested with deterministic model outputs?

- Upgrade path stability. Does the framework follow semantic versioning? Are breaking changes documented with migration guides? Is the API surface stable enough for production commitments?
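One way to apply the rubric consistently across candidates is a weighted score. The sketch below is illustrative only: the weights are placeholder assumptions, not recommended values, and the 0 to 5 scale and the `rubric_score` function are hypothetical. Scores should come from your own evaluation against your use case.

```python
from typing import Dict

# Placeholder weights (assumed for illustration); adjust to your priorities.
CRITERIA_WEIGHTS: Dict[str, float] = {
    "observability": 0.25,
    "error_recovery": 0.20,
    "state_persistence": 0.15,
    "cost_governance": 0.15,
    "testing": 0.15,
    "upgrade_stability": 0.10,
}


def rubric_score(scores: Dict[str, int]) -> float:
    """Combine per-criterion scores (0 to 5) into one weighted score (0 to 5)."""
    missing = set(CRITERIA_WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in CRITERIA_WEIGHTS:
        if not 0 <= scores[name] <= 5:
            raise ValueError(f"score for {name!r} must be between 0 and 5")
    return sum(weight * scores[name] for name, weight in CRITERIA_WEIGHTS.items())


# Hypothetical scores for an unnamed candidate framework.
candidate_a = {
    "observability": 4,
    "error_recovery": 3,
    "state_persistence": 4,
    "cost_governance": 2,
    "testing": 3,
    "upgrade_stability": 2,
}
score_a = rubric_score(candidate_a)
```

A single number hides trade-offs, so it is best used to compare candidates side by side while still reviewing any criterion that scores low on its own.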

Selecting a framework from a feature checklist rather than a production-grade evaluation is a recurring source of rework. A GenAI Feasibility Assessment includes framework evaluation and architecture design against your specific use case requirements.