Introduction

In 1912, one of the most famous maritime disasters took place: the RMS Titanic sank, and it has been lying on the bottom of the Atlantic Ocean ever since. In the same year, however, one of the brightest and most influential minds was born.

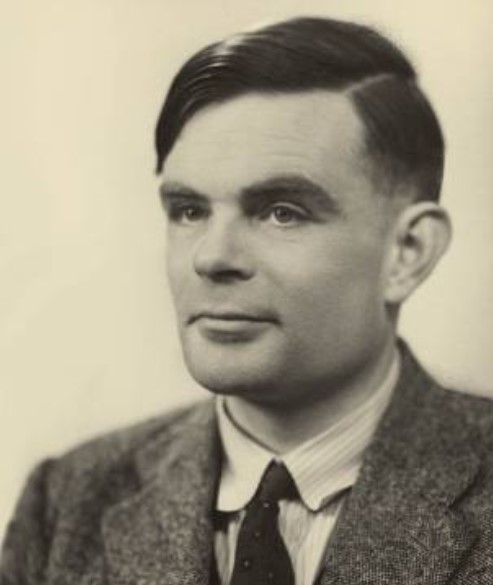

We are talking about Alan Turing, widely regarded as the father of Artificial Intelligence (AI) and of computer science as we know it today. Despite his short life, Alan Turing made significant contributions, not just to science but to the way we think.

The Universal Turing Machine (UTM) is the best example of his way of thinking. According to Turing, a single machine can carry out any computable task, given the right instructions (Turing, 1937). This, of course, raised another question: ‘Can machines think?’ That question is the subject of his paper ‘Computing Machinery and Intelligence’ (Turing, 1950). Rather than answering it directly, Turing proposed what is now known as the Turing test, in which an evaluator interacts with both a machine and a human. If the evaluator cannot reliably tell the two apart, the machine has passed the test.

The relevance of Turing’s work extends to technologies including Computer Vision (CV), Generative AI, GPU acceleration, and IoT edge computing. All of these rely on computers understanding and processing the data that are fed into them, or even generating new data based on a series of ‘thoughts’. It also extends to Augmented Reality (AR), Virtual Reality (VR), Mixed Reality (MR), and Extended Reality (XR), technologies that incorporate AI to enhance user experiences in an interactive way. Let’s take a look at the life and work of this extraordinary individual with a complicated mind.

Alan Turing’s Early Life and Academic Foundations

First years

Alan Turing was born in London into an upper-middle-class family. His father worked as a civil servant in India, so Turing was raised in England, largely apart from his parents. From a young age, he displayed signs of exceptional intellect. He attended Sherborne School in Dorset, where he excelled in mathematics, and his love of the subject led to his enrolment at King’s College, Cambridge, in 1931. He graduated with the highest honours in 1934 and became a fellow of King’s College at age 22 (Britannica, 2024).

Academia

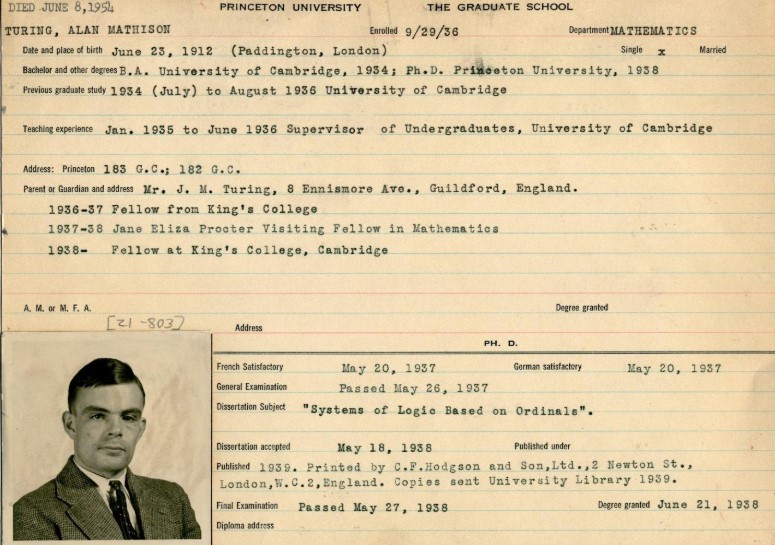

In 1936, Turing reached a pivotal point in his career. From then until 1938, he was a graduate student at Princeton University in the United States, where he worked under the mentorship of mathematician Alonzo Church. The two academics often conversed about foundational mathematical concepts. As a result, Turing’s dissertation ‘Systems of Logic Based on Ordinals’, written under Church’s supervision, introduced innovative ideas that reformed and expanded ‘what can be computed’ and, most importantly, ‘how’. Apart from his mentor, Turing interacted with other influential figures such as von Neumann and Gödel. This was a result of the effort Princeton University made to establish itself as a world-class centre for mathematics (Princeton, n.d.). This environment encouraged Turing to solidify his thoughts on computability and on the UTM. Although the machine is abstract, the logical principles behind it are relevant to this day!

The Universal Turing Machine

The paper ‘On Computable Numbers, with an Application to the Entscheidungsproblem’, submitted in 1936 (Turing, 1937), is probably one of the hallmarks of Alan Turing’s academic work. It starts with a definition of ‘computable numbers’, which, in simple words, are the numbers whose digits an algorithm can compute to any desired precision. This step sets a boundary between the numbers that can be computed mechanically and those that cannot. The UTM demonstrated that one single machine can perform any computation that can be expressed algorithmically, because it can read the description of any other Turing machine and imitate it. This idea of a machine that runs stored instructions is the foundation of contemporary computer programming. The paper then applies these results to David Hilbert’s Entscheidungsproblem, which asks whether an algorithm could decide, for any given mathematical statement, whether it is provable. Turing proved that such an algorithm cannot possibly exist and that some problems are unsolvable, showing that computation has limits in both mathematics and computer science. This proof has been called ‘Turing’s proof’ (History of Information, 2024).
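To make the idea of ‘one machine, many programs’ more concrete, here is a minimal sketch in Python. It is not Turing’s own construction: the rule-table format, the function name `run_turing_machine`, and the two example machines are assumptions made purely for illustration. The point is that the same short simulator runs any machine that is handed to it as data.

```python
from collections import defaultdict

BLANK = "_"  # symbol for an empty tape cell (an illustrative choice)


def run_turing_machine(rules, tape, state="start", halt_state="halt", max_steps=10_000):
    """Run a machine described as data.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is "L" or "R". Returns the non-blank part of the final tape.
    """
    cells = defaultdict(lambda: BLANK, enumerate(tape))  # unbounded tape
    head = 0
    steps = 0
    while state != halt_state:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    used = [i for i, symbol in cells.items() if symbol != BLANK]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))


# Machine 1: flip every bit, then halt at the first blank cell.
FLIP_BITS = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", BLANK): (BLANK, "R", "halt"),
}

# Machine 2: add 1 to a binary number (walk to the end, then carry leftwards).
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", BLANK): (BLANK, "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "L", "halt"),
    ("carry", BLANK): ("1", "L", "halt"),
}

if __name__ == "__main__":
    print(run_turing_machine(FLIP_BITS, "1011"))  # 0100
    print(run_turing_machine(INCREMENT, "1011"))  # 1100
    print(run_turing_machine(INCREMENT, "111"))   # 1000
```

The simulator itself never changes; only the rule table does. The `max_steps` guard is a practical compromise: as Turing’s proof implies, there is no general way to know in advance whether an arbitrary machine will ever halt.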

The ideas originating in this paper laid the groundwork for electronic computing. Apart from giving us an understanding of the limits of computation, the paper set the foundation for computer science, paved the way for programming languages, and set the scene for AI!

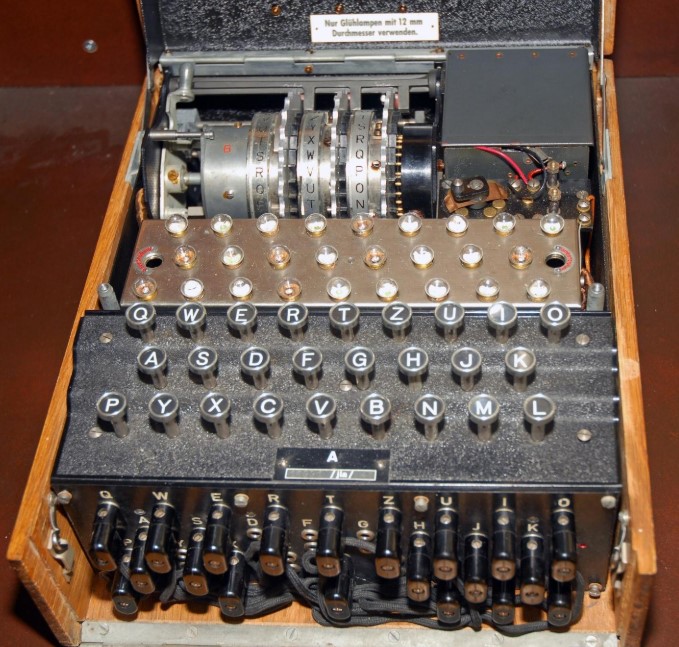

World War II and the Birth of Modern Computing

Of course, World War II was at the gates. The British government was running a top-secret code-breaking centre at Bletchley Park. Turing joined the effort in 1939 and was given the seemingly impossible task of breaking Enigma, a complex cipher machine used by Nazi Germany to encrypt communications. One might think, ‘ok, all it takes is to find a pattern’, yet the machine’s settings changed daily, and there were approximately 159 quintillion possible combinations! This alone made manual codebreaking impossible, so Turing came up with new methods, training both himself and others on his breakthroughs as they evolved (Imperial War Museums, n.d.). To make the codebreaking process more efficient, Turing developed the Bombe, an electromechanical device that automated the search for Enigma settings. It worked by simulating multiple Enigmas simultaneously and testing various settings, thus reducing the time needed to break codes from days to minutes. In 1942, Turing travelled back to the States to share his knowledge with US military intelligence and advise them on how to use it (Britannica, 2024).
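The figure of roughly 159 quintillion can be reproduced with a few lines of arithmetic. The sketch below rests on assumptions that are not stated in the text above: three rotors chosen in order from a set of five, 26 starting positions per rotor, and ten plugboard cables, with ring settings left out. This is the configuration commonly used to arrive at that number.

```python
from math import factorial

LETTERS = 26
ROTORS_AVAILABLE = 5   # assumption: rotors to choose from
ROTORS_USED = 3        # assumption: rotors placed in the machine
PLUGBOARD_PAIRS = 10   # assumption: cables swapping pairs of letters


def rotor_orders(available=ROTORS_AVAILABLE, used=ROTORS_USED):
    """Ordered choices of `used` rotors out of `available`."""
    return factorial(available) // factorial(available - used)


def rotor_positions(letters=LETTERS, used=ROTORS_USED):
    """Each rotor in the machine can start on any letter."""
    return letters ** used


def plugboard_settings(letters=LETTERS, pairs=PLUGBOARD_PAIRS):
    """Ways to connect `pairs` cables; pair order and cable direction do not matter."""
    unpaired = letters - 2 * pairs
    return factorial(letters) // (factorial(unpaired) * factorial(pairs) * 2 ** pairs)


def enigma_settings():
    """Total number of daily settings under the assumptions above."""
    return rotor_orders() * rotor_positions() * plugboard_settings()


if __name__ == "__main__":
    print(f"{rotor_orders():,}")        # 60
    print(f"{rotor_positions():,}")     # 17,576
    print(f"{plugboard_settings():,}")  # 150,738,274,937,250
    print(f"{enigma_settings():,}")     # 158,962,555,217,826,360,000
```

Almost all of the complexity comes from the plugboard. Even at a billion settings per second, trying every combination would take thousands of years, which is why the Bombe relied on narrowing down the search rather than on brute force.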

His wartime work helped shape modern computing. Through the Bombe and the principles behind it, Turing contributed to the development of early postwar computers. The techniques he used during his time at Bletchley Park laid the groundwork for modern encryption methods and showcased the need for secure communications, and his idea that a machine could learn from data became one of the pillars of machine learning and AI.

The Turing Test

The Earliest Concept of AI

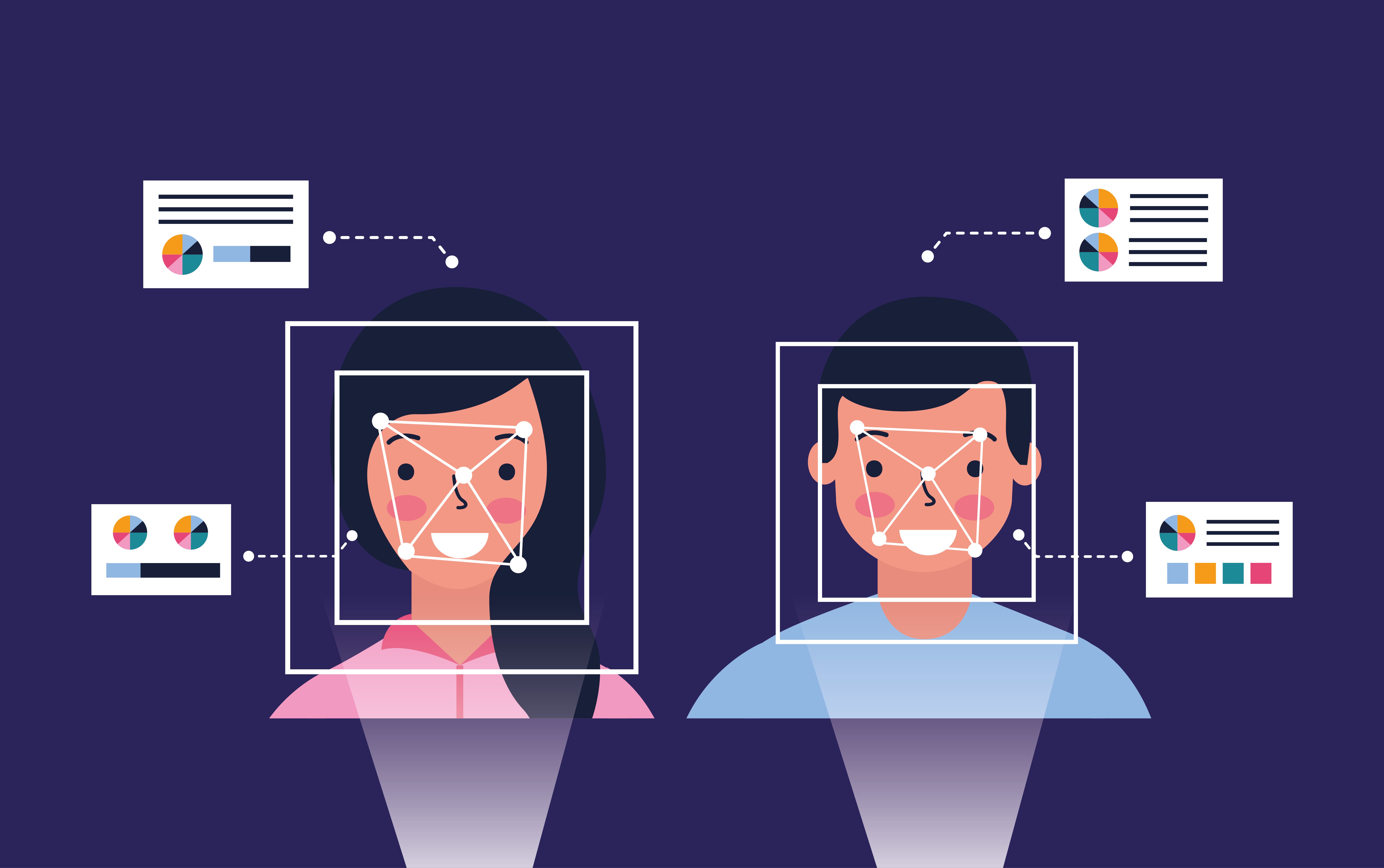

Earlier, we mentioned the Turing Test. Let us go back to it to understand how it works. First, we need to assign roles. On the one hand, we have a machine and, on the other hand, a human participant. Another human, in the role of the ‘interrogator’, converses with both of them in turns, without knowing which is which at any moment. If the interrogator cannot distinguish between the two in a casual conversation based on the responses they receive, the machine is said to have passed the test.
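The roles described above can be laid out as a small simulation. This is only a toy sketch of the protocol, not a real evaluation: the participants are hypothetical stand-in functions, the interrogator is a simple keyword check, and all names (`play_round`, `pass_rate`, and so on) are invented for illustration.

```python
import random


def play_round(interrogator, human, machine, questions, rng):
    """One round: True if the interrogator fails to pick out the machine."""
    # Hide the two participants behind the anonymous labels "A" and "B".
    machine_label = rng.choice(["A", "B"])
    human_label = "B" if machine_label == "A" else "A"
    respond = {machine_label: machine, human_label: human}

    # The interrogator questions both participants in turns.
    transcript = {"A": [], "B": []}
    for question in questions:
        for label in ("A", "B"):
            transcript[label].append((question, respond[label](question)))

    guess = interrogator(transcript)  # label the interrogator believes is the machine
    return guess != machine_label


def pass_rate(interrogator, human, machine, questions, rounds=200, seed=0):
    """Fraction of rounds in which the machine goes undetected."""
    rng = random.Random(seed)
    passed = sum(
        play_round(interrogator, human, machine, questions, rng) for _ in range(rounds)
    )
    return passed / rounds


# Hypothetical stand-ins for the three roles.
def human(question):
    return "Hmm, let me think about that."


def naive_machine(question):
    return "I AM A ROBOT."


def mimic_machine(question):
    return human(question)  # imitates the human perfectly


def keyword_interrogator(transcript):
    for label, exchanges in transcript.items():
        if any("ROBOT" in answer.upper() for _, answer in exchanges):
            return label
    return "A"  # no tell-tale sign found, so the guess is arbitrary


if __name__ == "__main__":
    questions = ["How was your weekend?", "What is 7 times 8?"]
    print(pass_rate(keyword_interrogator, human, naive_machine, questions))  # 0.0
    print(pass_rate(keyword_interrogator, human, mimic_machine, questions))
```

A machine that gives itself away never passes, while a perfect imitator leaves the interrogator guessing, so it goes undetected in roughly half of the rounds. That ‘no better than chance’ outcome is what passing the test means in this sketch.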

The Turing Test is probably the best-known way to assess machine intelligence in the area of Conversational AI, yet it has limitations. It measures how well a machine imitates a human, not whether the machine understands anything or has developed a consciousness. This raises the following question: How smart can a machine actually be, and can it think on its own? From our point of view, it depends on how much data it is able to process, although Turing had already established that there are limits to what can be computed. Still, the idea behind the test, a machine producing human-like responses, lives on in the machine learning-based applications that we use today. How do you think text auto-correction works?! And don’t forget that the test was proposed back in 1950 (Coursera, 2024)!

Machine Against Humanity

Over the years, many people have questioned whether machines should be as capable as they are. Some call these people conspiracists; others call them just cautious. We are not here to judge, yet there are certain elements that must be taken into account with AI. On the one hand, different scholars have questioned whether machines really have the ability to think. On the other hand, and this is where it gets interesting, it has been implied that, in order for a machine to pass the Turing Test, it needs to be as human as possible. One characteristic of humans is fatigue, which causes mistakes to occur. Could a machine deliberately introduce mistakes into its mimicking to trick the ‘interrogator’? Is that ethical, and could this actually imply true intelligence?

Applications in Modern Technologies

Applications that carry elements of Turing’s ideas are all around us. CV, for example, is based on the processing of visual data, which first needs to be translated into numeric data and then processed. Keep this in mind the next time you use Google Lens. Other examples include AI assistants like ChatGPT for practically any task, perplexity.ai for academia, and DALL-E for image generation using prompts. Apart from these, there are also commercial applications in different industries, such as generative AI in insurance for fraud detection, AI in manufacturing, and quality control in the automobile industry. It is hard to find a company nowadays without some kind of AI-embedded algorithm in one of its products. You can find out more in our AI Assistants article here!

We can also find applications in vehicles, and not just in autonomous ones. Some cars are equipped with cameras all around to generate a bird’s eye view of the car and its surroundings on the infotainment system while parking, providing a more fun and creative interaction. Creativity doesn’t end there, though. XR is a great way to enhance our visual experience in different applications. In 2016, a new release entered the mobile gaming universe: none other than Pokémon Go. Using AR, players would hunt for Pokémon in the real world using the cameras and screens of their phones. VR gaming has been re-established with commercial products, such as the ones offered by Oculus, and Apple Vision Pro offers the possibility of interacting with an AR environment, aka MR!

Summing Up

The idea that a single person could have achieved so much in such a short period of time is truly outstanding. During his 41 years of life, we dare say that Alan Turing achieved more than others would have in two lifetimes. He introduced new concepts, helped to save millions of lives during WWII, and set the foundation for the Artificial Intelligence we experience today. Have machines been perfected? In our opinion, there is no such thing as ‘perfection’. Yet, it is safe to say that they have come a long way and that the best is yet to come. After all, consider how many of the conveniences and applications we have today were unimaginable two decades ago!

What we offer

At TechnoLynx, we like to think of ourselves as practical implementers of Turing’s work, offering AI solutions custom-tailored to every company’s needs. We design our services on demand for each task from scratch, and that is our key to successfully delivering high-level custom software engineering services while ensuring human-machine interaction safety. Our team specialises in custom software development and in managing and analysing large amounts of data, while at the same time addressing ethical considerations.

We are able to empower any given field and industry with our technological expertise using innovative AI-driven algorithms, including Machine Learning consulting and MLOps consulting, because we understand how beneficial AI can be for any business, increasing efficiency while reducing cost. The always-changing AI landscape is a constant challenge, and we are made to be challenged. Just contact us, let us do our stuff, and observe your project reach the sky!

List of References

- Britannica (2024) – Alan Turing - Biography, Facts, Computer, Machine, Education, & Death (Accessed: 15 December 2024).
- Britannica (2024) – Bombe - Code Breaking, History, Design, & Facts (Accessed: 15 December 2024).
- Coursera (2024) – What Is the Turing Test? Definition, Examples, and More (Accessed: 24 December 2024).
- History of Information (2024) – Alan Turing Publishes ‘On Computable Numbers,’ Describing What Came to be Called the ‘Turing Machine’ (Accessed: 15 December 2024).
- Imperial War Museums (n.d.) – How Alan Turing Cracked The Enigma Code (Accessed: 15 December 2024).
- Princeton (n.d.) – Oral History Project (Accessed: 15 December 2024).
- Princeton (2014) – Alan Turing’s Princeton University File Available Online (Accessed: 24 December 2024).
- The National Museum of Computing (n.d.) – The Enigma Machine (Accessed: 24 December 2024).
- The Turing Digital Archive (n.d.) – About Alan Turing (Accessed: 24 December 2024).
- Turing, A.M. (1937) ‘On Computable Numbers, with an Application to the Entscheidungsproblem’, Proceedings of the London Mathematical Society, s2-42(1), pp. 230–265.
- Turing, A.M. (1950) ‘Computing Machinery and Intelligence’, Mind, LIX(236), pp. 433–460.
- Watercutter, A. (n.d.) ‘How Designers Recreated Alan Turing’s Code-Breaking Computer for Imitation Game’, Wired (Accessed: 24 December 2024).