Introduction

Computer vision enables computers to process visual information from digital images or video. Many real-world systems run vision models that perform object detection, optical character recognition (OCR), and simple classification. Yet high accuracy often comes with heavy compute cost. Businesses need models that are efficient, fast, and effective.

This article reviews the leading lightweight vision models. They bring deep learning to vision tasks without steep resource demands, and they help deliver real-time performance in applications such as inventory management, autonomous vehicles, and mobile apps.

Why Lightweight Models Matter

Traditional convolutional neural network (CNN) architectures such as VGG or ResNet deliver high accuracy, but they require substantial compute. That makes them hard to deploy on edge devices or in real-time settings. Lightweight vision models deliver near state-of-the-art results on images and video with much less compute.

Models like MobileNet, SqueezeNet, and TinyYOLO use fewer parameters. They still allow object detection, image processing, and basic classification tasks. They process visual inputs rapidly. This makes them great for real-world use when latency, power, or cost matter.

Read more: Fundamentals of Computer Vision: A Beginner’s Guide

Top Lightweight Models Overview

MobileNet V2 and V3 use depthwise separable convolutions. This cuts compute without sacrificing too much accuracy. Many applications use them for classification or recognition tasks on low-power devices.
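To see where the saving comes from, the sketch below compares the weight count of a standard convolution with a depthwise convolution followed by a 1x1 pointwise convolution. The layer sizes are illustrative assumptions, not taken from any particular MobileNet layer, and biases are ignored.

```python
def standard_conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a depthwise k x k conv followed by a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 conv that mixes channels
    return depthwise + pointwise


# Illustrative layer sizes (assumed for this example).
c_in, c_out, k = 128, 256, 3
standard = standard_conv_params(c_in, c_out, k)
separable = depthwise_separable_params(c_in, c_out, k)
reduction = standard / separable
print(standard, separable, round(reduction, 1))  # 294912 33920 8.7
```

For a 3x3 kernel the reduction approaches 9x as the number of output channels grows, which is why the technique suits low-power devices.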

SqueezeNet reaches AlexNet-level accuracy on ImageNet with roughly 50x fewer parameters. It uses “fire modules” that squeeze feature maps with 1x1 convolutions before expanding them with a mix of 1x1 and 3x3 convolutions. It works well for image-only tasks.
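A rough way to see the effect of a fire module is to count its weights against a plain 3x3 convolution with the same input and output channels. In this sketch the module is assumed to consist of a 1x1 squeeze layer followed by parallel 1x1 and 3x3 expand layers whose outputs are concatenated. The channel counts are illustrative, and biases are ignored.

```python
def fire_module_params(c_in: int, squeeze: int, expand1x1: int, expand3x3: int) -> int:
    """Weights in a fire module: 1x1 squeeze, then parallel 1x1 and 3x3 expand."""
    squeeze_weights = c_in * squeeze              # 1x1 conv reduces channels
    expand1_weights = squeeze * expand1x1         # 1x1 expand branch
    expand3_weights = 9 * squeeze * expand3x3     # 3x3 expand branch
    return squeeze_weights + expand1_weights + expand3_weights


def plain_conv3x3_params(c_in: int, c_out: int) -> int:
    """Weights in a single 3x3 convolution with the same input and output size."""
    return 9 * c_in * c_out


# Illustrative channel counts (assumed for this example).
fire = fire_module_params(c_in=96, squeeze=16, expand1x1=64, expand3x3=64)
plain = plain_conv3x3_params(c_in=96, c_out=64 + 64)
print(fire, plain, round(plain / fire, 1))  # 11776 110592 9.4
```

Most of the saving comes from the squeeze layer: the expensive 3x3 filters see 16 input channels instead of 96.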

For object detection, TinyYOLOv4 and YOLOv5 Nano provide quick bounding box detection. They work well on digital images and videos with little delay. They give up some accuracy compared to full-size SSD or Faster R-CNN. However, they still identify important real-world objects.

How Computer Vision Works with Lightweight Models

Most of these models rely on efficient image processing pipelines. A digital image passes through layers of convolution combined with activation functions and pooling. The model extracts features, then predicts a class label or box coordinates.
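The convolution, activation, and pooling sequence can be sketched in plain Python on a tiny grayscale image. This is a teaching sketch under simplifying assumptions (one channel, one hand-written filter, no padding or stride), not how a production framework implements it. As in most deep learning libraries, the "convolution" here is technically a cross-correlation.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """Slide the kernel over the image without padding and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map: Matrix) -> Matrix:
    """Activation: keep positive responses, zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def max_pool2x2(feature_map: Matrix) -> Matrix:
    """Downsample by taking the maximum of each non-overlapping 2x2 block."""
    return [
        [
            max(feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1])
            for c in range(0, len(feature_map[0]) - 1, 2)
        ]
        for r in range(0, len(feature_map) - 1, 2)
    ]


# A 5x5 image with a dark-to-bright vertical edge between columns 1 and 2.
image = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
# A hand-written filter that responds to dark-to-bright horizontal transitions.
edge_kernel = [[-1.0, 1.0], [-1.0, 1.0]]

features = max_pool2x2(relu(conv2d_valid(image, edge_kernel)))
print(features)  # [[2.0, 0.0], [2.0, 0.0]]
```

The pooled output keeps the strong edge response on the left and discards the flat region, which is the kind of feature a later layer would use to predict a label or box.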

Using a lighter architecture means fewer layers or smaller channel widths. The model computes fewer features per image but still retains the key patterns. That makes it fast in deployment. These models run on consumer devices and fit well into computer vision systems deployed in the field.

Read more: Computer Vision and Image Understanding

Applications in Inventory and OCR

Inventory management often uses images to track items on shelves. Lightweight vision models can classify products and count stock in real time. Add OCR to scan product codes or price tags. A mobile device or camera-based system may pair image or video recognition with OCR.
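As a minimal sketch of the counting step, the snippet below tallies detections per product for one shelf image. The detection format (label, confidence), the product names, and the 0.5 confidence threshold are assumptions made for illustration; a real system would take them from its own detector.

```python
from collections import Counter
from typing import Dict, List, Tuple

Detection = Tuple[str, float]  # (product label, confidence), hypothetical format


def count_stock(detections: List[Detection], min_confidence: float = 0.5) -> Dict[str, int]:
    """Count detections per product, ignoring low-confidence ones."""
    return dict(Counter(label for label, conf in detections if conf >= min_confidence))


# Made-up detections for a single shelf image.
frame_detections = [
    ("cola_330ml", 0.91),
    ("cola_330ml", 0.88),
    ("crisps_salted", 0.76),
    ("cola_330ml", 0.32),  # below the threshold, so not counted
]
stock = count_stock(frame_detections)
print(stock)  # {'cola_330ml': 2, 'crisps_salted': 1}
```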

Computer vision technology based on MobileNet or SqueezeNet enables stock control apps, checkout systems, and product scanning tools. These work without constant server access. This design speeds up performance and reduces cost.

As the demand for small and efficient deep learning models increases, developers are working on custom methods. They are focusing on pruning and quantisation techniques.

Pruning removes redundant connections in convolutional neural networks (CNNs), helping reduce model size while maintaining accuracy. Quantisation, on the other hand, reduces numerical precision from 32-bit floats to 16-bit floats or even 8-bit integers. These techniques allow models to run on edge devices without high-end computing hardware.
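Both ideas can be shown on a small list of weights. The sketch below uses magnitude pruning (zeroing the smallest weights) and symmetric 8-bit quantisation with a single scale factor. These are common simple variants chosen for illustration, not the only ones; the weight values are made up.

```python
from typing import List, Tuple


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantise_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantised = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantised, scale


def dequantise(quantised: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantised]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]  # made-up example weights
pruned = magnitude_prune(weights, sparsity=0.5)
q, scale = quantise_int8(pruned)
restored = dequantise(q, scale)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
print(q)       # [127, 0, 48, 0, -95, 0]
```

Each restored value differs from the pruned value by at most half the scale factor, which is the rounding error that quantisation trades for a 4x smaller representation than 32-bit floats.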

In mobile applications, reducing inference time is critical. Lightweight vision models must respond in milliseconds. Object detection tasks in apps like barcode readers or personal health monitors cannot afford latency.

Engineers can improve neural network performance in low-latency, low-resource settings by keeping the parameter count small and training on task-specific data.

When working with real-time video, performance challenges intensify. Models must maintain frame-by-frame tracking accuracy without buffering or lags. This is essential in cases like live driver assistance systems or quality checks on fast-moving production lines. Efficient models like YOLO Nano and MobileNetV3 balance low memory use with reliable tracking over several frames.

Another key advancement lies in neural architecture search (NAS). Rather than manually designing a model, NAS automates architecture building based on a data set and resource constraint. The output often results in novel structures, tailored specifically for edge applications or microcontrollers. These custom-built models outperform hand-crafted designs in many narrow tasks like gesture recognition or industrial inspections.
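The core loop of such a search can be sketched as: enumerate candidate architectures, discard those that break the resource constraint, and keep the best-scoring survivor. The version below is a deliberately simple exhaustive grid search, not one of the reinforcement-learning, evolutionary, or gradient-based strategies used by real NAS systems. The `Arch` type, the parameter estimate for a plain stack of 3x3 convolutions, and the `proxy_score` function are all hypothetical stand-ins; in practice the score would come from training and validating each candidate.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Arch:
    width: int  # channels per layer
    depth: int  # number of 3x3 conv layers


def estimate_params(arch: Arch, in_channels: int = 3) -> int:
    """Rough weight count for a plain stack of 3x3 convs (bias ignored)."""
    first = 9 * in_channels * arch.width
    rest = (arch.depth - 1) * 9 * arch.width * arch.width
    return first + rest


def search(widths: Iterable[int], depths: Iterable[int], max_params: int,
           evaluate: Callable[[Arch], float]) -> Optional[Arch]:
    """Return the best-scoring architecture that fits the parameter budget."""
    candidates = [Arch(w, d) for w, d in product(widths, depths)]
    feasible = [a for a in candidates if estimate_params(a) <= max_params]
    return max(feasible, key=evaluate, default=None)


def proxy_score(arch: Arch) -> float:
    """Stand-in for validation accuracy; a real search would train the model."""
    return float(arch.width * arch.depth)


best = search([16, 32, 64], [2, 4, 6], max_params=100_000, evaluate=proxy_score)
print(best, estimate_params(best))  # Arch(width=32, depth=6) 46944
```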

In addition to embedded use cases, lightweight models serve broader roles in content platforms. Social media filters, augmented reality (AR) effects, and basic OCR tasks all benefit from efficient pipelines. Many apps use computer vision systems on the device to protect privacy. Sending every frame to a cloud server is often impractical or against policy.

Edge devices also play a role in smart agriculture. Drones equipped with lightweight vision models can scan large fields in real time. These models identify weeds, estimate crop health, or track irrigation.

With no internet connection in remote areas, the model must function independently. Efficiency is not optional—it defines system viability.

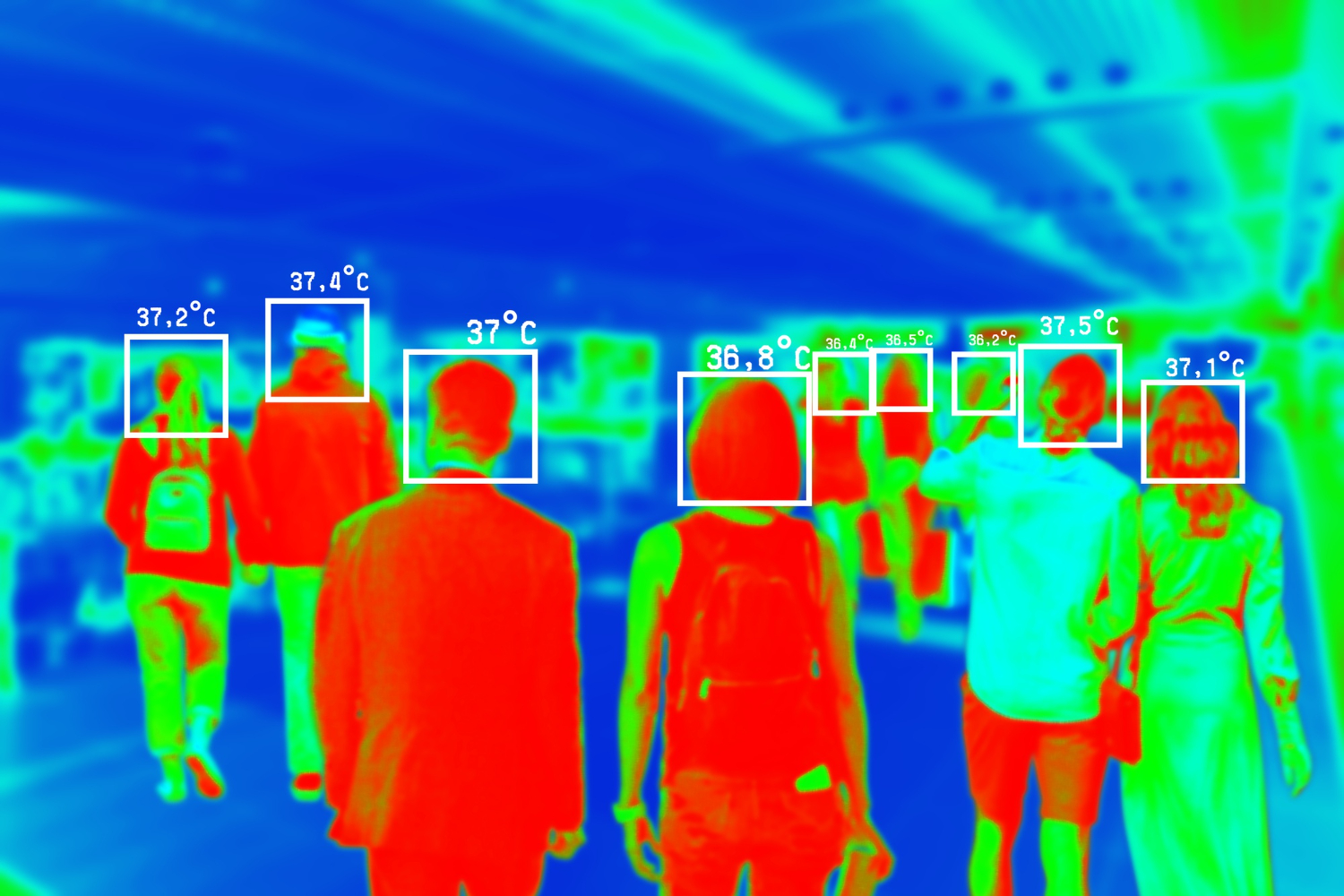

In public transport systems, security monitoring with onboard vision models helps detect unattended luggage or unusual behaviour. Here, low-latency processing is necessary for alerts to trigger within seconds. Lightweight vision models lessen the load on local GPUs or CPUs. These processors often share resources with many systems in the vehicle.

Healthcare wearables use these models for image classification in vital checks. Detecting skin lesions, measuring patient movement, or analysing respiratory patterns from visual input now happens directly on-device. By reducing reliance on a cloud platform, these solutions provide faster feedback and reduce privacy risks.

One overlooked area is document automation in finance or insurance. OCR models that convert scanned forms into structured data benefit from small-scale computer vision models. These models are faster and cheaper to run and offer enough accuracy for most structured templates. This suits business process automation in firms with limited IT budgets.

Lastly, urban management systems also benefit from lightweight visual technologies. Parking enforcement, pedestrian flow tracking, and recycling bin monitoring use embedded cameras with built-in vision capabilities. These models help municipalities gain real-time visibility into public spaces without installing expensive infrastructure or relying on centralised servers.

Read more: Real-World Applications of Computer Vision

Autonomous Vehicle Use Cases

Autonomous vehicles require vision models to detect traffic signs, lanes, hazards, and pedestrians in real time. Fully featured models may strain the in‑car hardware. Lightweight models fill the gap.

TinyYOLO or other small, efficient CNNs can tag objects in live video at up to around 30 fps on edge GPUs. This keeps latency low and enables object detection while maintaining energy efficiency. These models support real-time feedback to driving systems.
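A frame rate target translates directly into a per-frame time budget: at 30 fps each frame has about 33 ms for everything, not only inference. The sketch below checks a pipeline against that budget. The stage names and timings are made-up placeholders, and the check assumes the stages run one after another with no overlap.

```python
from typing import Dict


def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at the given frame rate."""
    return 1000.0 / fps


def meets_real_time(stage_ms: Dict[str, float], fps: float) -> bool:
    """True if the sequential stages fit inside one frame interval."""
    return sum(stage_ms.values()) <= frame_budget_ms(fps)


# Hypothetical per-stage timings for one frame, in milliseconds.
pipeline = {"capture": 4.0, "preprocess": 3.0, "inference": 18.0, "postprocess": 5.0}

print(round(frame_budget_ms(30), 1))   # 33.3
print(meets_real_time(pipeline, 30))   # True: 30 ms fits in 33.3 ms
print(meets_real_time(pipeline, 60))   # False: 30 ms exceeds 16.7 ms
```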

Trade-Offs and Accuracy

Going lightweight means fewer parameters and less compute. That restricts model capacity.

Accuracy tends to fall compared to larger models. Fine-tuning on your training data helps recover some of that loss. For example, training MobileNet V3 on your inventory images improves its precision.

Evaluate models on your use case. For OCR, MobileNet‑based OCR models may meet accuracy goals. For object tracking or detection, test TinyYOLO variants on your video stream. If accuracy drops too far, you may need cascaded systems or hybrid models combining lightweight and heavier networks.
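One way to build such a cascade is to run the lightweight model on every input and call the heavier model only when the lightweight one is unsure. The sketch below assumes each model is a callable returning a (label, confidence) pair and uses an arbitrary 0.8 threshold. The two stub models are placeholders for real networks.

```python
from typing import Callable, Tuple

Prediction = Tuple[str, float]  # (label, confidence in [0, 1])
Model = Callable[[str], Prediction]


def cascade_predict(image: str, light_model: Model, heavy_model: Model,
                    threshold: float = 0.8) -> Tuple[str, float, str]:
    """Use the light model first; fall back to the heavy one if it is unsure."""
    label, confidence = light_model(image)
    if confidence >= threshold:
        return label, confidence, "light"
    label, confidence = heavy_model(image)
    return label, confidence, "heavy"


def light_model_stub(image: str) -> Prediction:
    """Placeholder: confident on clear images, unsure otherwise."""
    return ("bottle", 0.92) if image == "clear" else ("bottle", 0.41)


def heavy_model_stub(image: str) -> Prediction:
    """Placeholder for a larger, slower network."""
    return ("can", 0.97)


print(cascade_predict("clear", light_model_stub, heavy_model_stub))
print(cascade_predict("blurry", light_model_stub, heavy_model_stub))
```

The threshold controls the trade-off: raising it sends more inputs to the heavy model, which improves accuracy but raises the average cost per image.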

Implementation Tips

Use transfer learning to train lightweight vision models. Start from a pretrained model, then fine-tune on your data set. Keep input resolution manageable to reduce compute. Use techniques like quantisation or pruning to shrink size further.
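The effect of input resolution is easy to estimate: the cost of convolutional layers scales roughly with the number of pixels, so it falls with the square of the image side. The sketch below applies that rule of thumb relative to an assumed 224x224 baseline. It ignores layers whose cost does not depend on resolution, so treat the numbers as approximate.

```python
def relative_conv_cost(new_side: int, base_side: int = 224) -> float:
    """Approximate convolution cost relative to a square baseline resolution."""
    return (new_side / base_side) ** 2


for side in (224, 192, 160, 128):
    print(side, f"{relative_conv_cost(side):.2f}")
# 224 1.00
# 192 0.73
# 160 0.51
# 128 0.33
```

Dropping from 224 to 160 pixels per side roughly halves the convolution work, which is often worth testing before reaching for pruning or quantisation.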

Ensure real-world compatibility. Use efficient libraries that support neural network inference on edge devices. Frame batching and pipeline design help handle images or video frames quickly. Add early stopping to prevent overfitting when training on small data sets.
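Early stopping can be sketched as a small helper that watches the validation loss and signals when it has not improved for a set number of epochs. The class name, the patience of three epochs, and the loss values below are illustrative assumptions. Most training frameworks ship their own equivalent.

```python
class EarlyStopping:
    """Stop training when validation loss stops improving."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0) -> None:
        self.patience = patience    # epochs to wait without improvement
        self.min_delta = min_delta  # smallest change that counts as improvement
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses: improvement stalls after the third epoch.
val_losses = [0.90, 0.70, 0.65, 0.66, 0.67, 0.68, 0.60]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss):
        stopped_at = epoch
        break

print(stopped_at, stopper.best)  # 5 0.65
```

In practice the weights from the best epoch are saved and restored after stopping, so the final model is the one with the lowest validation loss rather than the last one trained.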

Read more: Deep Learning vs. Traditional Computer Vision Methods

Benchmarks and Performance

On ImageNet, MobileNet V3 (Large) delivers ~75% top-1 accuracy with ~5M parameters. That is higher than a full-size ResNet-18 (~70% top-1) with roughly half the parameters.

TinyYOLOv4 models can run at ~20-30 fps on an edge GPU while detecting people, cars, and traffic signs. This frame rate suits real-time tasks such as vehicle systems or surveillance.

In a retailer test, SqueezeNet used for shelf scanning processed images in milliseconds. The latency allowed instant stock updates. Accuracy reached ~85% for recognised items, enough for many use cases.

Integration with Systems

Lightweight models fit well into mobile and edge platforms. They pair with computer vision systems and image‑based pipelines. Use them for bounding box detection, simple segmentation, or OCR.

They plug into image processing backends to process visual information quickly. This drives efficient inventory checks, real-time detection, and automated analysis.

Security and Privacy

Processing images locally reduces data transmission. That helps with security and privacy.

Systems handle digital images on‑device. They do not send visual inputs over networks. This meets stricter privacy demands and eases data compliance.

Read more: Core Computer Vision Algorithms and Their Uses

Future Trends

Further model compression techniques like pruning, quantisation, and architecture search will push performance higher. New lightweight convolution methods and transformer hybrids may emerge. But current-generation models already enable robust vision on constrained devices.

How TechnoLynx Can Help

TechnoLynx specialises in applying lightweight vision models to real-world applications. We help clients choose the right model for object detection, OCR, and image classification. We adapt architectures such as MobileNet or TinyYOLO to work on your data set and hardware.

We integrate models in systems including inventory management tools, mobile scan apps, and vehicle vision platforms. Our service includes training machine learning components and tuning them for accuracy and speed.

We support local deployment on edge CPUs, GPUs, and mobile platforms. We optimise performance while keeping costs low. We ensure that vision works smoothly and privacy stays intact.

Partner with TechnoLynx to enable your devices to see and act on images and video efficiently.

Image credits: Freepik