When should you build an internal AI team vs hire consultants?

“Should we build an internal AI team or hire consultants?” is a question that assumes the answer is one or the other. In practice, the answer for most organisations is both — at different times, for different purposes, in a sequence that builds internal capability while delivering projects.

The useful question is: which capabilities should be internal (because they are ongoing, strategic, and core to the business) and which should be external (because they are project-specific, require specialisation the business does not have, or are needed faster than internal hiring can deliver)? Hiring timelines and costs can settle much of this on their own: Gartner’s 2024 workforce survey reports that senior ML engineers take 4–6 months to hire on average, with total first-year compensation of $250,000–$350,000 in major US markets. These figures are directional labour-market indicators, not fixed benchmarks; actual compensation varies significantly by geography, seniority definition, and equity structure.

When to build internally

Internal AI teams are justified when AI is a sustained competitive advantage — when the models, data pipelines, and ML operations are core to the business’s value proposition, not a supporting function.

Criteria for building internally:

Ongoing model development. If the business will continuously develop, retrain, and evolve AI models as a core business activity — a technology product company, a data-driven platform, a company whose competitive advantage depends on proprietary models — internal ML engineering capability is essential. The models are the product; outsourcing their development creates dependency on the vendor’s timeline, quality, and availability.

Proprietary data advantage. If the business has proprietary data that is a competitive asset — customer behaviour data, sensor data, domain-specific training data — the ML capability to exploit that data should be internal. Giving proprietary data to external consultants creates information exposure risk and does not build internal understanding of the data’s value.

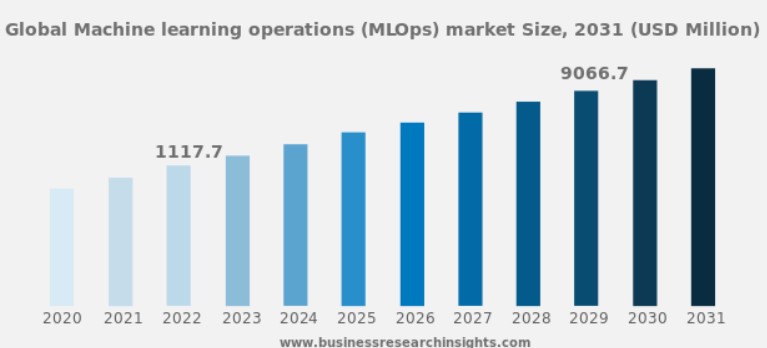

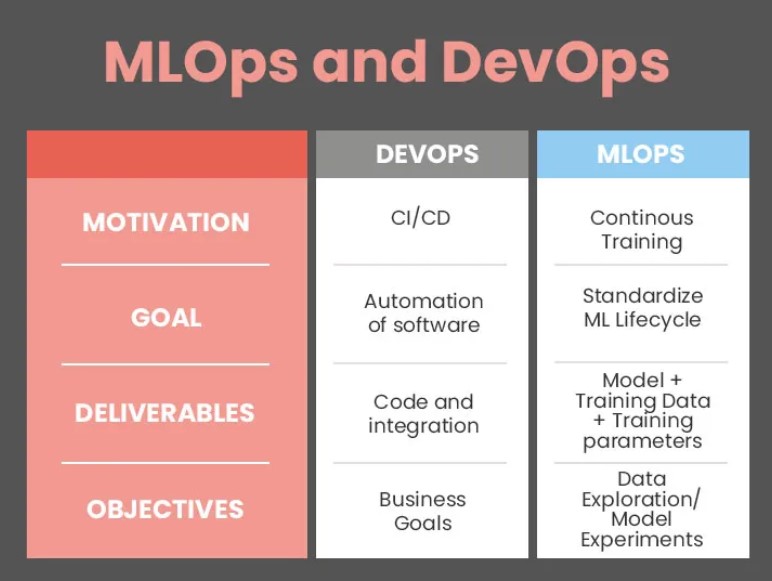

Scale of AI operations. If the business operates more than 3–5 production AI models with ongoing maintenance, monitoring, and retraining requirements, the operational overhead justifies dedicated internal MLOps capability. Below that scale, the operational work may not fill a full-time role, making external support more efficient.

The cost reality: A senior ML engineer is expensive. According to Glassdoor (2024), the average UK salary for a senior ML engineer is approximately £83,000, with total compensation (including benefits and equity) reaching £110,000–£160,000 at technology companies. Robert Half’s 2024 Technology Salary Guide reports that AI-specialised roles command a 20–30% premium over general software engineering roles, at £90,000–£150,000 annually (UK market). These are published salary-survey figures; actual compensation depends on location, company stage, and how “senior” is defined internally.

A senior ML engineering team (3–5 engineers + a team lead) costs £500,000–£900,000 annually in compensation alone, plus infrastructure, tools, and management overhead. The hiring timeline is 3–6 months per role in a competitive market. The ramp-up time — from hiring to productive contribution — is an additional 2–4 months per engineer.
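The team estimate can be cross-checked against the per-head figures quoted above. The sketch below is a rough model, not a costing tool: it assumes every head (engineers and the lead alike) falls in the same £110,000–£160,000 total-compensation band, and the function name `team_compensation_range` is hypothetical.

```python
# Per-head total compensation band quoted in the text (Glassdoor 2024, UK technology companies).
PER_HEAD_LOW_GBP = 110_000
PER_HEAD_HIGH_GBP = 160_000


def team_compensation_range(min_engineers: int, max_engineers: int, leads: int = 1) -> tuple[int, int]:
    """Rough annual compensation range for a team.

    Simplifying assumption: engineers and leads share one per-head band,
    so the low end pairs the smallest team with the lowest pay and the
    high end pairs the largest team with the highest pay.
    """
    low = (min_engineers + leads) * PER_HEAD_LOW_GBP
    high = (max_engineers + leads) * PER_HEAD_HIGH_GBP
    return low, high


low, high = team_compensation_range(3, 5)
print(f"£{low:,} to £{high:,}")  # £440,000 to £960,000
```

The £500,000–£900,000 range in the text sits inside this bracket; the simple model spreads wider because it combines the extremes of both team size and pay. Like the text’s figure, it excludes infrastructure, tools, and management overhead.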

When to hire consultants

External AI consultants are justified when the capability is needed faster than internal hiring can deliver, when the project requires specialisation that the business will not need on an ongoing basis, or when the business needs to learn before it builds.

Speed. A consulting team can start delivering within 2–4 weeks of engagement. An internal hire takes 3–6 months to recruit and a further 2–4 months to onboard, so 5–10 months pass before that hire contributes productively. If the project timeline cannot accommodate that delay, external consultants are the only viable option.
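The speed comparison is simple arithmetic, and a short Python sketch makes the rule explicit. It assumes 2–4 weeks is roughly 0.5–1 month and, as a deliberately conservative choice, tests the worst case of each range; the names `internal_time_to_productive` and `viable_options` are hypothetical.

```python
# Timelines quoted in the text, in months (2-4 weeks approximated as 0.5-1 month).
CONSULTANT_START_MONTHS = (0.5, 1.0)
INTERNAL_RECRUIT_MONTHS = (3, 6)
INTERNAL_RAMP_UP_MONTHS = (2, 4)


def internal_time_to_productive() -> tuple[float, float]:
    """Best- and worst-case months from opening a role to productive contribution."""
    return (
        INTERNAL_RECRUIT_MONTHS[0] + INTERNAL_RAMP_UP_MONTHS[0],
        INTERNAL_RECRUIT_MONTHS[1] + INTERNAL_RAMP_UP_MONTHS[1],
    )


def viable_options(months_until_work_must_start: float) -> list[str]:
    """Options whose worst-case start time fits before the work must begin."""
    options = []
    if CONSULTANT_START_MONTHS[1] <= months_until_work_must_start:
        options.append("consultants")
    if internal_time_to_productive()[1] <= months_until_work_must_start:
        options.append("internal hire")
    return options


print(internal_time_to_productive())  # (5, 10)
print(viable_options(3))              # ['consultants']
print(viable_options(12))             # ['consultants', 'internal hire']
```

Between 5 and 10 months the answer depends on whether you plan against the best or the worst case; planning against the worst case, as here, avoids committing a project to a hire who has not yet been found.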

Specialisation. The value of external consultants lies in their depth in specific domains, which is why it matters to evaluate them against the right criteria. A computer vision project requires CV engineers with production deployment experience. A GPU optimisation project requires engineers who understand CUDA profiling and kernel tuning. A GenAI project requires engineers who understand prompt engineering, RAG architecture, and LLM serving. These are different specialisations, and an organisation building its first AI team is unlikely to have all of them internally.

Learning before committing. An organisation that has never executed an AI project does not know what internal AI capability it needs. Hiring ML engineers before understanding the work they will do leads to mismatched hires — data scientists when the need was data engineers, researchers when the need was production engineers, generalists when the need was specialists.

Engaging consultants for the first 1–2 AI projects, with explicit knowledge transfer as a deliverable, teaches the organisation what AI work looks like in its specific context. The internal hiring decisions that follow are better informed: the organisation knows what skills it needs, what work volumes to expect, and what the ongoing operational burden will be.

The combined approach

The approach that works for most organisations follows a three-phase progression:

Phase 1: Consultants for first projects, with knowledge transfer. Engage AI consultants for the initial projects. Structure the engagement so that internal team members participate in the project work, not as observers but as active contributors. The consultants lead; the internal team learns. Knowledge transfer should be a defined phase of the engagement, with its own deliverables, rather than an informal by-product.

Phase 2: Internal hiring informed by project experience. After 1–2 completed projects, the organisation understands what AI work entails in its context. Internal hiring targets the specific skills that the ongoing work requires — which may be ML engineering, data engineering, MLOps, or a combination.

Phase 3: Internal team for core operations, consultants for peaks and specialisation. The internal team handles the ongoing model operations, monitoring, and incremental improvements. External consultants are engaged for peak capacity (a major new project that exceeds the internal team’s bandwidth) and deep specialisation (a specific domain — GPU optimisation, computer vision for a new modality, GenAI architecture — where the internal team does not have depth).

This approach builds internal capability progressively, reduces the risk of misinformed early hiring, and maintains access to specialised expertise that no single internal team can cover across all AI domains.

The decision matrix

| Factor | Build internally | Hire consultants |
|---|---|---|
| AI is a core business competency | ✓ | |
| >3 production AI models ongoing | ✓ | |
| Project needed in <3 months | | ✓ |
| Specialised domain expertise required | | ✓ |
| First 1–2 AI projects | | ✓ |
| Sustained competitive advantage from proprietary models | ✓ | |
| Temporary peak in project volume | | ✓ |
| Organisation learning what AI capability it needs | | ✓ |

Planning-grade decision factors: build, hire, or hybrid

The following ranges are indicative, drawn from engagement patterns we have observed across mid-market and enterprise clients. They are starting points for discussion, not fixed thresholds — your organisation’s sector, geography, and risk appetite will shift the boundaries.

| Decision Factor | Build Internal | Hire Consultants | Hybrid |
|---|---|---|---|
| Annual AI project count | 5+ concurrent projects | 1–2 projects | 3–4 projects |
| Annual AI budget | >£800K (team + infra) | <£250K | £250K–£800K |
| Time to first delivery | 6–12 months acceptable | <3 months required | 3–6 months |
| Production models maintained | >5 models with ongoing retraining | 0–1 models | 2–5 models |
| Internal ML headcount today | ≥5 ML engineers on staff | 0–1 ML-skilled staff | 2–4 ML engineers |
| Domain specialisations needed | 1–2 (depth over breadth) | 3+ distinct domains per year | 1–2 core + occasional specialist needs |
| AI maturity stage | Scaling and optimising | Exploring or piloting | Operationalising first production systems |

How to read the table: match your organisation’s current position on each row. If most factors fall in one column, that is likely the right primary approach. A split across columns suggests a hybrid model — internal team for core operations, consultants for specialisation and peak capacity. Budget figures are approximate 2025–2026 UK ranges and will vary by region and seniority mix.
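The reading rule above can be sketched as a small tally in Python. This is a hypothetical helper, not a tool the table comes from: `classify`, `recommend`, `ROWS`, and `MATURITY` are illustrative names. It encodes the numeric rows of the table, maps the maturity row to a column, and reads a strict majority as the primary approach and anything else as a split pointing to hybrid. The domain-specialisations row is left out because its hybrid column is not numeric, and how exact boundary values (for example, precisely 6 months) are assigned is an assumption.

```python
from collections import Counter

BUILD, CONSULT, HYBRID = "build internal", "hire consultants", "hybrid"

# One (build test, hybrid test) pair per numeric row of the planning table.
# A value passing neither test falls into the consultants column.
# Boundary handling is an assumption of this sketch, not part of the table.
ROWS = {
    "projects": (lambda n: n >= 5, lambda n: 3 <= n <= 4),
    "budget_gbp": (lambda b: b > 800_000, lambda b: 250_000 <= b <= 800_000),
    "delivery_months": (lambda m: m > 6, lambda m: 3 <= m <= 6),
    "production_models": (lambda n: n > 5, lambda n: 2 <= n <= 5),
    "ml_headcount": (lambda n: n >= 5, lambda n: 2 <= n <= 4),
}

# Categorical row: AI maturity stage.
MATURITY = {
    "exploring": CONSULT,
    "piloting": CONSULT,
    "operationalising": HYBRID,
    "scaling": BUILD,
}


def classify(row: str, value: float) -> str:
    """Place one numeric factor in a column of the table."""
    build_test, hybrid_test = ROWS[row]
    if build_test(value):
        return BUILD
    if hybrid_test(value):
        return HYBRID
    return CONSULT


def recommend(profile: dict) -> tuple[str, Counter]:
    """Tally the column each factor lands in; a strict majority wins,
    otherwise the split is read as a hybrid recommendation."""
    votes = [classify(row, profile[row]) for row in ROWS]
    votes.append(MATURITY[profile["maturity"]])
    tally = Counter(votes)
    column, count = tally.most_common(1)[0]
    if count > len(votes) / 2:
        return column, tally
    return HYBRID, tally


early = {"projects": 2, "budget_gbp": 150_000, "delivery_months": 2,
         "production_models": 0, "ml_headcount": 1, "maturity": "exploring"}
mixed = {"projects": 5, "budget_gbp": 900_000, "delivery_months": 4,
         "production_models": 3, "ml_headcount": 1, "maturity": "piloting"}

print(recommend(early)[0])  # hire consultants
print(recommend(mixed)[0])  # hybrid (two votes in each column)
```

Returning the full tally alongside the verdict keeps the split visible, which matters because a narrow majority deserves the same scrutiny as an outright split. Treat the output as a planning heuristic, as the table itself is.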

Where the matrix does not apply directly: We have seen organisations whose headcount and budget placed them firmly in the “build internal” column, but whose AI maturity was early-stage — meaning they did not yet know what skills to hire for. In those cases, a hybrid start (consultants for the first 1–2 projects, then informed internal hiring) produced better outcomes than immediate team-building. The matrix is a planning heuristic, not a substitute for assessing your organisation’s specific context.

The decision is not permanent. Organisations that start with consultants can transition to internal teams as their AI maturity grows, and the framework above applies as much to reassessment as to initial evaluation. Organisations with internal teams can engage consultants when specialised expertise is needed or project volume exceeds internal capacity.