Readiness is the question before “what should we build?”

Most organisations approach AI adoption backwards. They start with use cases — “we want to build a chatbot,” “we need predictive maintenance,” “we should use GenAI for document processing” — and then discover that the prerequisites for executing those use cases are not in place. The data infrastructure cannot support model training. The team does not have ML engineering capability. There is no governance framework for AI decision-making. The use case evaluation assumed a level of organisational readiness that does not exist.

An AI readiness assessment addresses this by evaluating the organisation’s capability to execute AI projects successfully before it commits to specific projects. The assessment identifies the gaps that would cause AI projects to fail and provides a roadmap for closing those gaps in the order that enables the most valuable projects first.

The gap between paper-readiness and execution-readiness: Organisations that score well on data quality audits and have ML-titled staff can still fail at AI execution. The pattern we observe most often: the data is clean in the warehouse but not accessible to training pipelines, the ML engineers have research experience but not production deployment experience, and the governance framework exists as policy but has never been tested against a live AI decision. The scorecard below is designed to surface these execution-readiness gaps, not just paper-readiness ones.

The three dimensions of AI readiness

Data infrastructure readiness

AI models consume data. The organisation’s data infrastructure determines whether data is available, accessible, and usable for AI workloads.

Data quality. Is the organisation’s data clean, consistent, and complete enough to train and operate AI models? Data quality issues — missing values, duplicates, inconsistent formats, stale records — degrade model performance proportionally to their severity. An organisation with 30% missing values in its key datasets is not ready for AI projects that depend on that data.
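As a rough illustration of how missing values and duplicates might be measured, here is a minimal sketch. It assumes records arrive as a list of dictionaries, treats `None` and empty strings as missing, and uses hypothetical field names (`customer_id`, `email`, `signup_date`); real profiling tools check far more than this.

```python
def missing_rate(records, fields):
    """Share of expected field values that are missing (None or empty string)."""
    total = len(records) * len(fields)
    if total == 0:
        raise ValueError("need at least one record and one field")
    missing = sum(1 for r in records for f in fields if r.get(f) in (None, ""))
    return missing / total


def duplicate_rate(records, key_fields):
    """Share of records whose key repeats one already seen earlier in the list."""
    if not records:
        raise ValueError("need at least one record")
    seen = set()
    duplicates = 0
    for r in records:
        key = tuple(r.get(f) for f in key_fields)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    return duplicates / len(records)


# Hypothetical sample data, for illustration only.
FIELDS = ["customer_id", "email", "signup_date"]
records = [
    {"customer_id": 1, "email": "a@example.com", "signup_date": "2023-01-04"},
    {"customer_id": 2, "email": "", "signup_date": "2023-02-11"},
    {"customer_id": 2, "email": "", "signup_date": "2023-02-11"},  # repeated key
    {"customer_id": 3, "email": "c@example.com", "signup_date": None},
]

print(f"missing: {missing_rate(records, FIELDS):.0%}")              # missing: 25%
print(f"duplicates: {duplicate_rate(records, ['customer_id']):.0%}")  # duplicates: 25%
```

Running a check like this against the datasets a proposed use case depends on gives a number to compare with the missing-value thresholds in the scorecard.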

Data accessibility. Can the data be accessed programmatically by training and serving pipelines? Data locked in departmental silos, legacy systems without APIs, or third-party platforms with restrictive licensing is not accessible for AI workloads — regardless of its quality. The engineering effort to make data accessible (building extraction pipelines, negotiating data sharing agreements, modernising legacy systems) is often underestimated.

Data infrastructure. Does the organisation have the storage, compute, and pipeline infrastructure to support AI data workflows? Training data must be stored in formats and systems that support efficient retrieval (data lakes, feature stores, vector databases). Serving data must flow through pipelines that deliver it to models at production latency. If the organisation’s data infrastructure is designed for BI reporting and analytical queries, it may not support the throughput and latency requirements of AI workloads without modification.

Organisational capability readiness

AI projects require specific skills that the organisation may or may not have.

ML engineering. Can the organisation’s technical team build, train, evaluate, and deploy ML models? This requires skills in data preprocessing, model selection and training, evaluation methodology, and deployment infrastructure. If the organisation does not have ML engineering capability, the options are: hire it (expensive, slow), train existing engineers (moderate cost, slow), or engage consultants (moderate cost, fast, with knowledge transfer as part of the engagement).

Data engineering. Can the technical team build and maintain data pipelines that feed AI workloads? Data engineering is a different skillset from ML engineering — it focuses on data ingestion, transformation, quality assurance, and pipeline reliability rather than model development. Many organisations underinvest in data engineering relative to ML engineering, resulting in teams that can build models but cannot feed them with reliable data.

Product/business integration. Can the organisation translate model output into business action? An AI model that predicts customer churn has no value unless the prediction triggers a retention action — a call from account management, a discount offer, a service improvement. The integration between model output and business process requires product managers, business analysts, and operations teams who understand how to operationalise AI predictions.
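The churn example can be sketched as an explicit mapping from model output to an owned business action. The thresholds (0.8 and 0.5), the owning teams, and the names `RetentionAction` and `action_for` are assumptions made for illustration; in practice they would be agreed with the product and operations teams.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RetentionAction:
    owner: str   # team accountable for carrying out the action
    action: str  # what that team does


def action_for(churn_probability: float) -> Optional[RetentionAction]:
    """Map a churn prediction to a retention action, or None if no action is needed."""
    if not 0.0 <= churn_probability <= 1.0:
        raise ValueError("churn_probability must be between 0 and 1")
    if churn_probability >= 0.8:  # hypothetical high-risk threshold
        return RetentionAction("account management", "call the customer")
    if churn_probability >= 0.5:  # hypothetical medium-risk threshold
        return RetentionAction("marketing", "send a discount offer")
    return None


print(action_for(0.91))  # RetentionAction(owner='account management', action='call the customer')
print(action_for(0.10))  # None
```

The point of writing the mapping down is that every prediction band has a named owner and a defined action; a band without either is a business integration gap.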

Governance readiness

AI governance determines how the organisation manages the risks, responsibilities, and oversight of AI systems.

Decision authority. Who approves AI projects? Who owns the AI model’s decisions in production? If a fraud detection model incorrectly blocks a legitimate transaction, who is accountable? If a hiring algorithm produces biased recommendations, who is responsible? These accountability questions must have answers before AI systems are deployed, not after an incident forces the question.

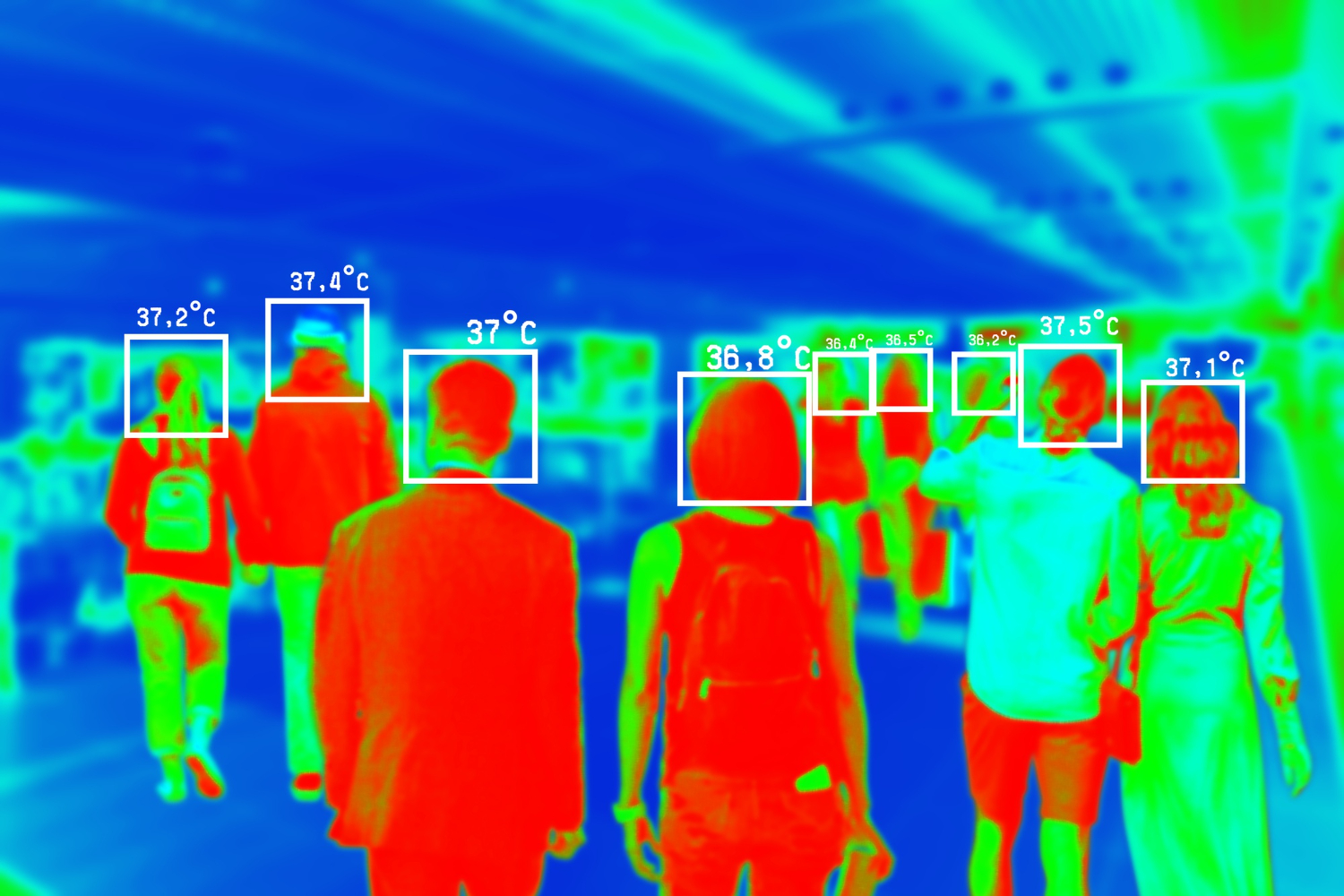

Risk management. What framework does the organisation use to assess and manage AI-specific risks — bias, fairness, security, privacy, and reliability? AI systems introduce risks that traditional IT risk frameworks do not address: model drift (the model degrades over time as data changes), adversarial inputs (users intentionally or accidentally provide inputs that cause the model to fail), and emergent behaviour (the model produces outputs that were not anticipated during development).

Compliance. What regulatory requirements apply to the organisation’s AI use? The EU AI Act, sector-specific regulators (the FDA for healthcare, the PRA and FCA for financial services), and data protection regulations (GDPR, CCPA) impose specific requirements on AI systems: transparency, explainability, data handling, and bias assessment. Organisations that deploy AI systems without understanding the regulatory requirements risk non-compliance and the associated penalties.

The readiness assessment process

A structured AI readiness assessment evaluates all three dimensions:

- Data infrastructure audit. Examine the organisation’s key datasets against the data requirements of the proposed AI use cases. Score data quality, accessibility, and infrastructure capability.

- Capability mapping. Assess the organisation’s technical team against the skill requirements for the proposed AI projects. Identify gaps and map them to hiring, training, or consulting strategies.

- Governance review. Evaluate the organisation’s existing governance frameworks and identify gaps relative to AI-specific governance requirements. Map the gaps to the regulatory requirements that apply to the organisation’s sector and geography.

- Gap-to-action mapping. For each identified gap, define the specific action required to close it, the estimated effort, and the priority (which gaps must be closed first because they are prerequisites for the most valuable AI projects).

- Roadmap. A phased plan that closes the readiness gaps in the order that enables AI project execution. The roadmap sequences the readiness investments to unlock the highest-value AI projects first, so the organisation can begin executing AI projects while continuing to build readiness for more complex future projects.

AI readiness scorecard

The dimensions below align with the readiness frameworks used by Gartner (AI Maturity Model, 2023), McKinsey (AI readiness diagnostic), and Google Cloud’s AI Adoption Framework, adapted here for practical self-assessment rather than vendor-specific tooling. The scorecard splits the three readiness dimensions into five scored rows. Score each row 1–3, multiply by its weight, and sum the results to get the weighted readiness score.

| Dimension | Score 1: Not ready | Score 2: Partial | Score 3: Ready | Weight |
|---|---|---|---|---|
| Data quality | >20% missing values; no quality monitoring; inconsistent formats | <10% missing; monitoring exists but manual; partial standardisation | <2% missing; automated monitoring and remediation; consistent formats | ×2 |
| Data accessibility | Data in silos or legacy systems without APIs; no data lake; batch-only | APIs for most sources; data lake exists but no feature store; latency gaps | Programmatic access to all datasets; feature store operational; production-grade latency | ×2 |
| ML & data engineering | No ML/data engineering staff; no deployment experience; no pipeline tooling | Some ML experience but no production deployment; fragile or manual pipelines | Dedicated roles; production deployment experience; reliable automated pipelines | ×2 |
| Business integration | No model-to-action process; no product/ops involvement in AI planning | Stakeholders identified; ad hoc integration; manual handoffs | Clear model-to-action ownership; product and ops teams embedded in AI projects | ×1 |
| Governance & compliance | No AI governance; no decision authority; regulatory requirements unassessed | Framework drafted but not implemented; partial accountability; landscape partially mapped | Accountability assigned; risk management covers bias, drift, adversarial inputs; regulatory compliance verified | ×1 |

Scoring guide

Multiply each dimension score (1–3) by its weight, then sum. Maximum possible score: 24.

- 8–13 — Not ready. Critical gaps in foundational dimensions. Address data and capability gaps before committing to AI projects.

- 14–19 — Conditionally ready. Some dimensions support AI execution; others require targeted investment. Start with projects that depend on the ready dimensions while closing remaining gaps.

- 20–24 — Ready. All dimensions at or near full readiness. Proceed to project selection and execution.
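The scoring arithmetic can be sketched in a few lines. The weights and score bands are taken from the scorecard and scoring guide above; the dictionary keys, function names, and example scores are illustrative assumptions.

```python
# Weights from the scorecard (three rows at x2, two rows at x1).
WEIGHTS = {
    "data_quality": 2,
    "data_accessibility": 2,
    "ml_data_engineering": 2,
    "business_integration": 1,
    "governance_compliance": 1,
}


def weighted_score(scores):
    """Sum of score x weight across the five scorecard rows (range 8 to 24)."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the five scorecard rows")
    for name, score in scores.items():
        if score not in (1, 2, 3):
            raise ValueError(f"{name}: score must be 1, 2, or 3")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def readiness_band(total):
    """Map a weighted total to the bands in the scoring guide."""
    if total >= 20:
        return "Ready"
    if total >= 14:
        return "Conditionally ready"
    return "Not ready"


# Hypothetical organisation: decent data quality, weak data accessibility.
example = {
    "data_quality": 2,
    "data_accessibility": 1,
    "ml_data_engineering": 2,
    "business_integration": 3,
    "governance_compliance": 2,
}
total = weighted_score(example)  # 4 + 2 + 4 + 3 + 2
print(total, readiness_band(total))  # 15 Conditionally ready
```

Because every row scores at least 1, the lowest possible total is 8, which is why the bands start at 8 rather than 0.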

Closing readiness gaps: realistic timelines

Each readiness dimension maps to specific remediation actions with predictable effort ranges. The table below provides planning-grade estimates — actual timelines depend on organisational size, existing infrastructure, and the severity of each gap.

| Readiness dimension | Score 1 → 2 | Score 2 → 3 |

|---|---|---|

| Data quality | Deploy profiling tools; fix critical missing-value issues in priority datasets. 4–8 weeks | Automate quality monitoring and remediation; standardise all formats; reduce missing values below 2%. 8–16 weeks |

| Data accessibility | Build extraction pipelines for priority legacy systems; deploy initial data lake. 8–16 weeks | Implement feature store and vector database; build real-time production-grade pipelines. 12–24 weeks |

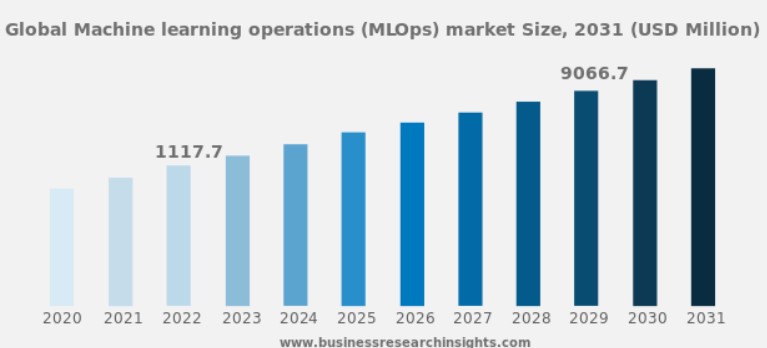

| ML & data engineering | Engage consultants with knowledge transfer; begin hiring first ML/data roles. 6–12 weeks (consulting) / 12–24 weeks (hiring) | Build dedicated team with production experience; establish automated pipelines and MLOps practices. 16–32 weeks |

| Business integration | Identify stakeholders per use case; define model-to-action workflows; run manual pilot. 3–6 weeks | Embed product/ops teams in AI projects; automate handoffs; establish outcome-to-retraining feedback loops. 8–16 weeks |

| Governance & compliance | Draft governance framework; assign decision authority; map regulatory landscape. 4–8 weeks | Implement risk management for bias, drift, adversarial inputs; verify regulatory compliance; establish audit cadence. 8–20 weeks |

Reading the table: Organisations scoring 1 in a dimension should plan for both columns sequentially, first reaching partial readiness and then closing the remaining gap. Organisations scoring 2 can proceed directly to the Score 2 → 3 column. Dimensions weighted ×2 in the scorecard (data quality, data accessibility, ML & data engineering) should be prioritised first, as they are prerequisites for most AI projects.
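To make the sequential reading concrete, the sketch below adds up the effort ranges from the table for a given starting score. The week ranges are copied from the table; the key names and the function `weeks_to_ready` are illustrative, and the ML & data engineering Score 1 → 2 figure assumes the consulting route rather than hiring.

```python
# (min_weeks, max_weeks) per step, copied from the timeline table.
EFFORT_WEEKS = {
    "data_quality": {"1->2": (4, 8), "2->3": (8, 16)},
    "data_accessibility": {"1->2": (8, 16), "2->3": (12, 24)},
    # Assumption: consulting route for 1->2 (the hiring route is 12-24 weeks).
    "ml_data_engineering": {"1->2": (6, 12), "2->3": (16, 32)},
    "business_integration": {"1->2": (3, 6), "2->3": (8, 16)},
    "governance_compliance": {"1->2": (4, 8), "2->3": (8, 20)},
}

# Steps still to complete for each current score.
REMAINING_STEPS = {1: ("1->2", "2->3"), 2: ("2->3",), 3: ()}


def weeks_to_ready(dimension, current_score):
    """Total (min, max) weeks to reach score 3, doing the remaining steps in sequence."""
    steps = REMAINING_STEPS[current_score]
    low = sum(EFFORT_WEEKS[dimension][step][0] for step in steps)
    high = sum(EFFORT_WEEKS[dimension][step][1] for step in steps)
    return low, high


print(weeks_to_ready("data_quality", 1))           # (12, 24)
print(weeks_to_ready("data_accessibility", 2))     # (12, 24)
print(weeks_to_ready("governance_compliance", 1))  # (12, 28)
```

These totals describe a single dimension worked on its own. Dimensions can be addressed in parallel, so the overall roadmap is not simply the sum across all five rows.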

What to do when you are not ready

“Not ready” is not a permanent state — it is a current state with a defined path to readiness. The readiness assessment produces the path: which gaps to close first, how to close them, and how long each gap will take to close.

The most common readiness gaps and their resolution paths:

- Data quality gaps: Implement data quality monitoring and remediation. Timeline: 2–6 months depending on the severity and number of affected datasets.

- Data accessibility gaps: Build extraction pipelines from legacy systems, negotiate data sharing agreements, implement API layers. Timeline: 3–12 months depending on the system complexity.

- ML capability gaps: Engage consultants with knowledge transfer, hire ML engineers, or train existing engineers. Timeline: 3–6 months for consulting, 6–12 months for hiring and training.

- Governance gaps: Develop an AI governance framework, define decision authority, implement risk assessment processes. Timeline: 2–4 months for framework development, ongoing for implementation.

Enterprise AI failures overwhelmingly trace back to projects that started without addressing readiness gaps. The assessment guards against these failures by identifying the gaps, and setting out how to close them, before the project investment is committed.

If AI readiness has not been assessed across all three dimensions before committing to specific projects, an AI Project Risk Assessment evaluates the gaps and produces an actionable roadmap.