When is custom CV development actually justified?

Two equally expensive mistakes exist in computer vision deployment. The first: building a custom model when an off-the-shelf solution would have worked, burning months of engineering effort to achieve accuracy that a pre-trained model with minimal fine-tuning could have matched. The second: deploying an off-the-shelf solution that cannot handle the domain’s specific requirements, then spending months debugging a system whose fundamental limitation is that it was never designed for the use case.

Both mistakes are common. Both are preventable. The decision between custom and off-the-shelf is not a philosophical preference — it is an engineering assessment based on the specific characteristics of the use case, the available data, and the operational requirements.

Grand View Research (2024) values the global computer vision market at approximately $20 billion, with custom solution development accounting for a significant share. Industry surveys suggest that a majority of organisations deploying CV in production use at least some custom model development, while the remainder rely entirely on off-the-shelf solutions.

What off-the-shelf gives you

Off-the-shelf computer vision solutions — cloud APIs (Google Vision, AWS Rekognition, Azure Computer Vision), pre-trained models (YOLOv8, EfficientDet, Segment Anything Model), and turnkey platforms (Roboflow, Landing AI, Clarifai) — provide a fast path from problem definition to working prototype. The value proposition is real:

Speed to prototype. A cloud API call returns detection results within minutes of configuration. A pre-trained YOLO model, fine-tuned on 500 labelled images, can achieve usable accuracy on common detection tasks within days. A turnkey platform with no-code annotation and training can produce a deployable model within a week. The time-to-prototype for off-the-shelf solutions is measured in days to weeks; for custom solutions, it is measured in months.

Breadth of capability. Pre-trained models have been trained on large, diverse datasets (COCO, ImageNet, Open Images) that cover a wide range of common objects, scenes, and visual patterns. For detection tasks that involve common objects — people, vehicles, animals, household items, retail products with standard packaging — off-the-shelf models have already learned useful feature representations. Fine-tuning from these representations requires less data and less training time than training from scratch.

Reduced engineering investment. Off-the-shelf solutions abstract away the model architecture selection, training infrastructure, hyperparameter optimisation, and serving infrastructure that custom solutions require. The engineering effort shifts to data preparation and integration rather than model development, and for many organisations data preparation and integration are a more accessible skillset.

The limitation is equally clear: off-the-shelf solutions are optimised for common use cases. They handle variation that falls within their training distribution. They struggle — or fail — when the production task requires detection of domain-specific features that the training data did not include, when the operating environment differs systematically from the training conditions, or when the accuracy requirements exceed what fine-tuning on a pre-trained backbone can achieve.

When custom development is justified

Custom model development — designing or significantly modifying the model architecture, training from scratch or from a specialised backbone, and building custom training and serving infrastructure — is justified under specific conditions:

Domain-specific detection targets. If the objects or defects you need to detect do not appear in any standard dataset — and their visual characteristics differ enough from common objects that transfer learning is insufficient — custom development is necessary. Manufacturing defect types (micro-cracks on semiconductor wafers, contamination particles in pharmaceutical vials, texture anomalies on precision-machined surfaces) are rarely represented in general-purpose training datasets. The model’s feature representations must be learned specifically for these targets.

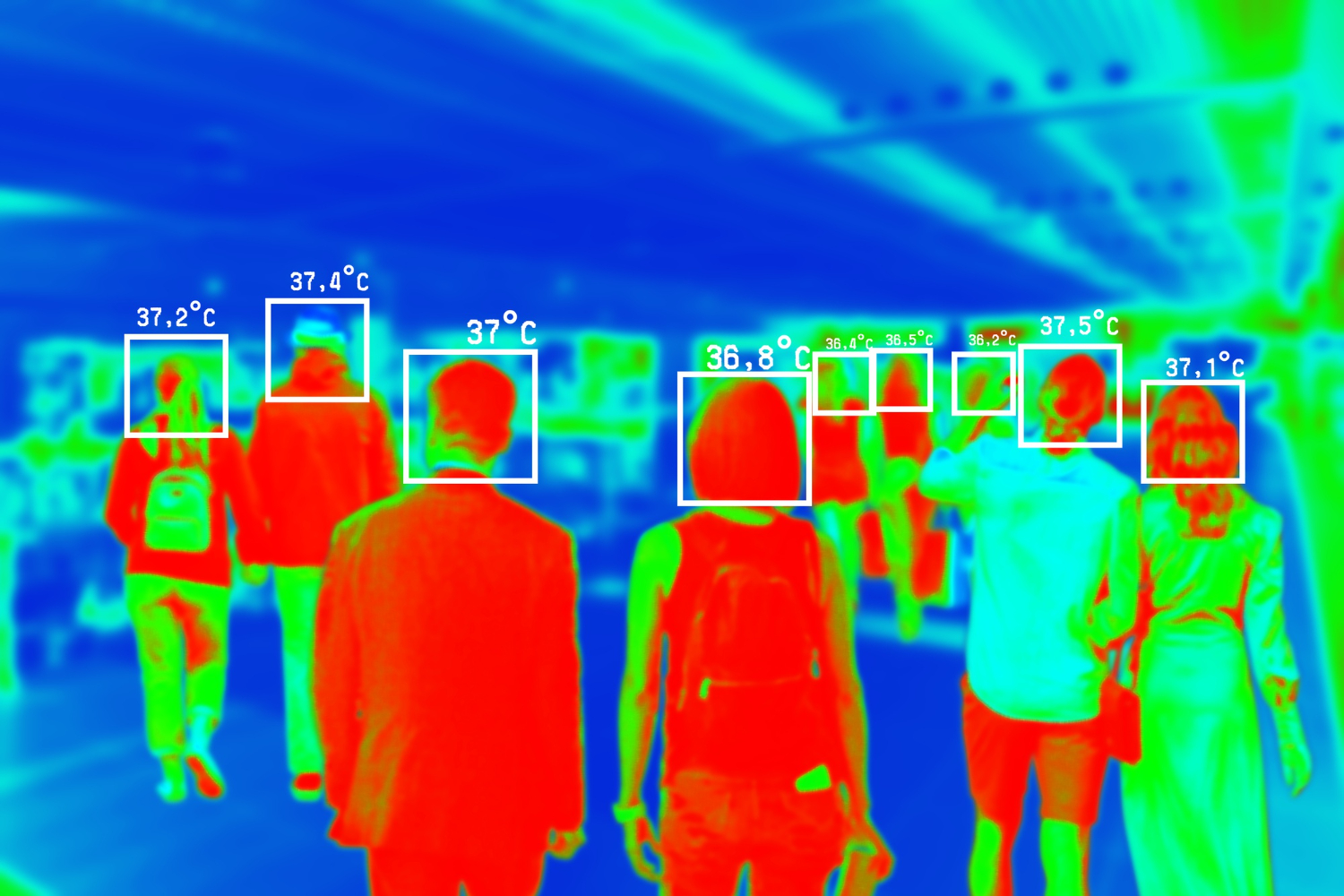

Environmental conditions outside the norm. If the operating environment produces images that differ systematically from the conditions in standard training datasets — non-visible spectrum (infrared, X-ray, hyperspectral), extreme lighting conditions, non-standard camera perspectives, or heavily occluded scenes — pre-trained models’ learned features may not transfer effectively. Custom development allows the model to learn features optimised for the actual imaging conditions rather than adapting features learned from natural images.

Accuracy requirements that exceed fine-tuning limits. Fine-tuning a pre-trained model on domain-specific data typically achieves 80–90% of the performance that custom development achieves, at 10–20% of the engineering cost. For many applications, reaching 80–90% of custom-model performance is sufficient. For applications where the remaining 10–20% has significant operational or safety impact (medical diagnosis, safety-critical inspection, regulatory-mandated detection rates), custom development is warranted.

Latency and deployment constraints. If the deployment target constrains model size and inference latency, as edge deployment on resource-constrained hardware does, a custom architecture designed for the specific hardware’s compute profile may significantly outperform a general-purpose architecture compressed to fit the same constraints. Custom architectures can optimise the accuracy-latency trade-off for the specific hardware, while off-the-shelf architectures must be generic enough to run on multiple targets.

The evaluation process

The decision between custom and off-the-shelf should follow a structured evaluation, not a technology preference:

Step 1: Define acceptance criteria. What accuracy metrics, at what thresholds, constitute an acceptable system? What latency is required? What false-positive and false-negative rates are tolerable? These criteria must be defined before evaluating any solution — otherwise, the evaluation has no objective basis for comparison.
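One way to make Step 1 concrete is to record the criteria as data before any candidate is evaluated. The following is a minimal sketch: the class name `AcceptanceCriteria`, its fields, and every threshold value are illustrative assumptions, not figures from a real project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical thresholds, fixed before any solution is evaluated."""
    min_precision: float    # bounds the tolerable false-positive rate
    min_recall: float       # bounds the tolerable false-negative rate
    max_latency_ms: float   # per-image inference latency budget


def meets_criteria(criteria, precision, recall, latency_ms):
    """Return per-criterion pass/fail flags plus an overall verdict."""
    checks = {
        "precision": precision >= criteria.min_precision,
        "recall": recall >= criteria.min_recall,
        "latency": latency_ms <= criteria.max_latency_ms,
    }
    checks["accepted"] = all(checks.values())
    return checks


# Illustrative values only: this candidate misses the recall threshold.
criteria = AcceptanceCriteria(min_precision=0.95, min_recall=0.98, max_latency_ms=50.0)
result = meets_criteria(criteria, precision=0.96, recall=0.97, latency_ms=40.0)
print(result)
```

Freezing the dataclass is a deliberate choice in this sketch: the thresholds cannot be adjusted after the fact to fit whichever solution was evaluated.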

Step 2: Test off-the-shelf first. Fine-tune a pre-trained model on your domain data. Evaluate against your acceptance criteria using production-representative test data, not a curated evaluation set. If the fine-tuned model meets the acceptance criteria, off-the-shelf is sufficient — proceed to deployment.
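As a rough sketch of the Step 2 evaluation, the function below computes precision and recall on a held-out, production-representative test set. It assumes the task has been reduced to image-level binary labels (1 = target present, 0 = absent); a real detection evaluation would also need box matching, which is omitted here. The function name and the toy labels are hypothetical.

```python
def precision_recall(y_true, y_pred):
    """Compute precision and recall for image-level binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Toy labels standing in for a production-representative test set.
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
y_pred = [1, 1, 0, 0, 1, 1, 0, 1]
precision, recall = precision_recall(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

The resulting numbers are then compared against the thresholds fixed in Step 1; the comparison itself should not change depending on which model produced the predictions.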

Step 3: Diagnose the gap. If the fine-tuned model does not meet acceptance criteria, analyse the failure modes. Are the failures caused by data quality issues (annotation inconsistency, insufficient training data, unrepresentative samples)? If so, improving the data — not switching to custom development — is the correct response. Are the failures caused by fundamental limitations of the pre-trained features (the model cannot detect the target features regardless of fine-tuning quality)? If so, custom development is justified.
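The diagnosis in Step 3 can be supported by tagging each reviewed failure with a suspected cause and tallying the tags. The sketch below assumes a manual review has already assigned the tags; the tag names, the `diagnose` function, and the simple majority rule are all illustrative assumptions, not a prescribed method.

```python
from collections import Counter

# Hypothetical cause tags assigned during a manual review of failures.
DATA_CAUSES = {"annotation_inconsistency", "insufficient_data", "unrepresentative_sample"}
FEATURE_CAUSES = {"feature_limitation"}


def diagnose(failure_tags):
    """Tally failure causes and suggest a response (majority rule is an assumption)."""
    counts = Counter(failure_tags)
    data_failures = sum(n for tag, n in counts.items() if tag in DATA_CAUSES)
    feature_failures = sum(n for tag, n in counts.items() if tag in FEATURE_CAUSES)
    if data_failures >= feature_failures:
        recommendation = "improve the data"
    else:
        recommendation = "consider custom development"
    return {
        "data_failures": data_failures,
        "feature_failures": feature_failures,
        "recommendation": recommendation,
    }


# Toy review outcome: most failures trace back to data quality.
tags = (
    ["annotation_inconsistency"] * 6
    + ["insufficient_data"] * 3
    + ["feature_limitation"] * 2
)
report = diagnose(tags)
print(report)
```

A tally like this does not replace judgement. Its purpose is to force the team to look at individual failures before concluding that the pre-trained features are the limiting factor.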

Step 4: Scope the custom effort. Custom development does not mean building everything from scratch. It may mean designing a custom detection head on a standard backbone, training a specialised feature extractor for the domain, or building a multi-stage pipeline where some stages use off-the-shelf components and others are custom. We recommend scoping the custom effort to the minimum modification required to close the gap identified in Step 3 — anything beyond that minimum is engineering cost without corresponding accuracy benefit.

The total cost of ownership comparison

The upfront engineering cost favours off-the-shelf: lower development time, less specialised expertise required, faster time to deployment. The long-term operational cost comparison is more nuanced.

Off-the-shelf solutions that rely on cloud APIs carry ongoing per-inference costs that scale with volume. A system processing 100,000 images per day at £0.001 per image costs £36,500 annually in API fees — and the pricing is controlled by the vendor. Custom solutions have higher upfront development costs but lower marginal inference costs when self-hosted.
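The API fee arithmetic above, and a simple break-even estimate, can be sketched as follows. The volume and per-image price come from the example in the text; the upfront custom development cost and the annual self-hosting cost are hypothetical figures chosen only to illustrate the calculation.

```python
def annual_api_cost(images_per_day, price_per_image, days_per_year=365):
    """Yearly API fees for a fixed daily volume and per-image price."""
    return images_per_day * price_per_image * days_per_year


def break_even_years(upfront_custom_cost, annual_self_hosting_cost, annual_api_fees):
    """Years until custom development pays for itself; None if it never does."""
    annual_saving = annual_api_fees - annual_self_hosting_cost
    if annual_saving <= 0:
        return None
    return upfront_custom_cost / annual_saving


# Figures from the text: 100,000 images per day at £0.001 per image.
api_fees = annual_api_cost(images_per_day=100_000, price_per_image=0.001)

# Hypothetical figures: £120,000 upfront, £6,500 per year to self-host.
years = break_even_years(
    upfront_custom_cost=120_000,
    annual_self_hosting_cost=6_500,
    annual_api_fees=api_fees,
)
print(f"annual API fees: £{api_fees:,.0f}")
print(f"break-even after {years:.1f} years")
```

The sketch holds volume and pricing constant. In practice both change over time, so the break-even point is better treated as a range than as a single number.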

Maintenance complexity also differs. Off-the-shelf models maintained by a vendor receive updates and improvements automatically — but also receive changes that may affect your specific use case. Our teams have encountered situations where a cloud API’s model update changed detection behaviour for an edge case that a customer’s workflow depended on. Custom models require internal maintenance but provide full control over when and how the model changes.

The total cost comparison — upfront development, ongoing operation, maintenance, and risk — determines which approach is economically rational for the specific use case and deployment timeline.

When the build-vs-buy decision fails

Build decisions and buy decisions fail in structurally different ways. Recognising the failure pattern early determines whether the team can correct course or is locked into an escalating cost trajectory.

How build decisions fail

- Scope creep into infrastructure. The team starts building a detection model and ends up building training pipelines, annotation tools, serving infrastructure, and monitoring systems. The model development that was scoped at 3 months consumes 9–12 months because the supporting infrastructure was not in the original estimate.

- Data underestimation. The custom model requires more training data than projected, and collecting and annotating domain-specific data at sufficient quality takes longer than the model development itself. The project stalls in data preparation rather than model iteration.

- Maintenance burden transfer. The model works at launch, but the team that built it moves on. The model degrades over time as production conditions drift, and no one has the context or capacity to retrain and revalidate. The custom model becomes a legacy system within 12–18 months of deployment.

How buy decisions fail

- Accuracy ceiling. The off-the-shelf model achieves 85% of the required accuracy through fine-tuning, but the remaining 15% gap cannot be closed without architectural changes the vendor does not support. The team spends months on workarounds (post-processing hacks, ensemble approaches) that add complexity without closing the gap.

- Vendor lock-in and pricing shifts. A cloud API dependency becomes a cost problem at scale — the per-inference pricing that was negligible during pilot becomes a significant line item at production volume. Migrating away requires rebuilding the integration, which was the cost the buy decision was supposed to avoid.

- Silent model updates. The vendor updates their model, and detection behaviour changes for edge cases the customer’s workflow depends on. The customer discovers the change through production errors, not through a changelog — and has no control over rollback or version pinning.
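One possible guard against silent model updates is to re-run a fixed "golden set" of images on a schedule and compare the results with a recorded baseline. The sketch below is an assumption about how such a check could look, not a vendor feature: `detect_behaviour_change`, the image IDs, and the stub predictor are hypothetical, and a real deployment would replace the stub with calls to the actual API.

```python
def detect_behaviour_change(golden_set, predict, max_changed_fraction=0.0):
    """Compare current predictions against recorded baseline labels.

    golden_set: dict mapping image ID -> set of baseline labels.
    predict: callable taking an image ID and returning an iterable of labels.
    """
    changed = [
        image_id
        for image_id, expected in golden_set.items()
        if set(predict(image_id)) != set(expected)
    ]
    fraction = len(changed) / len(golden_set) if golden_set else 0.0
    return {
        "changed_ids": sorted(changed),
        "changed_fraction": fraction,
        "alert": fraction > max_changed_fraction,
    }


# Baseline recorded when the workflow was validated (hypothetical data).
golden = {"img_001": {"scratch"}, "img_002": set(), "img_003": {"dent"}}

# Stub standing in for the vendor model after an update: it no longer
# reports the dent on img_003.
after_update = {"img_001": {"scratch"}, "img_002": set(), "img_003": set()}

status = detect_behaviour_change(golden, lambda image_id: after_update[image_id])
print(status)
```

A check like this does not restore control over rollback, but it moves discovery of the change from production errors to a scheduled test.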

These failure modes are avoidable with structured evaluation before commitment — a Production CV Readiness Assessment provides the build-vs-buy evaluation framework for computer vision applications.