How do you choose between distillation and quantisation for a multi-platform edge target?

A model that runs acceptably on a development machine needs to run in real time on iOS, Android, and browser targets. Both distillation and quantisation reduce the model to something that fits within mobile and edge memory budgets. They do not make the same tradeoffs, and choosing the wrong approach for a multi-platform deployment creates a hidden problem that appears after the first target ships: the model that validates correctly on iOS behaves differently on Android, and the browser implementation has quality characteristics that diverge from both.

The divergence is not a bug in any individual implementation. It is the expected consequence of applying precision reduction techniques independently to runtime-specific implementations of the same model, without a shared quality baseline across platforms.

What distillation and quantisation actually do

Distillation trains a smaller student model to replicate the behaviour of a larger teacher model. The student has a different architecture (fewer layers, reduced hidden dimensions, or a simpler design) but is trained to match the teacher’s output distribution rather than just the ground-truth labels. The resulting model is smaller because it has fewer parameters, not because its parameters have been reduced in precision. A distilled model is a portable artefact: it can be exported to any runtime (CoreML, ONNX Runtime, TensorRT), and its behaviour is determined by its architecture and weights rather than by a runtime-specific quantisation scheme. What remains between runtimes is ordinary floating-point rounding, which is typically far smaller than the gap between two quantisation schemes.

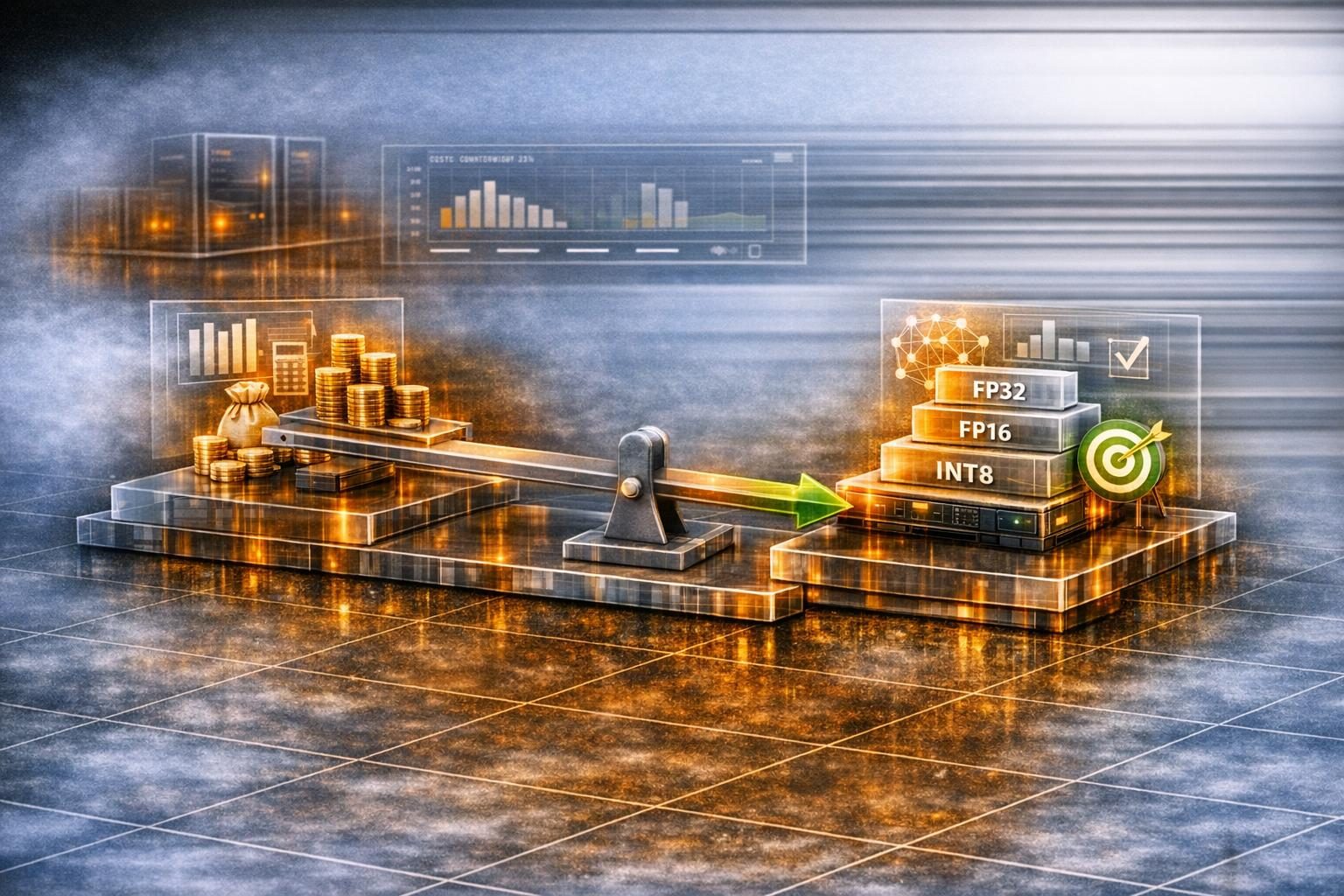

Quantisation reduces the numerical precision of an existing model’s weights and activations, typically from 32-bit floating point to 16-bit floating point or 8-bit integers. The model architecture is preserved, but the numerical results differ slightly between the original and the quantised implementation. Critically, INT8 quantisation on CoreML and INT8 quantisation on ONNX Runtime apply different quantisation schemes, different calibration methods, and potentially different operator implementations. A model quantised for CoreML is not the same numerical object as the same model quantised for ONNX Runtime, even if both are labelled “INT8.”
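
To make the “same label, different numbers” point concrete, the sketch below quantises one set of weights with two generic per-tensor INT8 schemes, symmetric and asymmetric (affine). It is an illustration only: the function names and sample weights are hypothetical, and neither function is meant to reproduce what CoreML or ONNX Runtime does internally.

```python
def quantise_symmetric(values, bits=8):
    """Symmetric per-tensor scheme: real 0.0 maps to integer 0."""
    qmax = 2 ** (bits - 1) - 1                      # 127 for INT8
    scale = max(abs(v) for v in values) / qmax or 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return [qi * scale for qi in q]                 # dequantised values


def quantise_asymmetric(values, bits=8):
    """Asymmetric (affine) per-tensor scheme: [lo, hi] maps to [0, 2^bits - 1]."""
    qmax = 2 ** bits - 1                            # 255 for INT8
    lo = min(min(values), 0.0)                      # range always includes zero
    hi = max(max(values), 0.0)
    scale = (hi - lo) / qmax or 1.0
    zero_point = round(-lo / scale)
    q = [max(0, min(qmax, round(v / scale) + zero_point)) for v in values]
    return [(qi - zero_point) * scale for qi in q]  # dequantised values


# Hypothetical weights, chosen only for illustration.
weights = [-0.10, 0.02, 0.37, 0.81, 1.50]
sym = quantise_symmetric(weights)
asym = quantise_asymmetric(weights)

# Largest disagreement between the two "INT8" versions of the same weights.
max_gap = max(abs(a - b) for a, b in zip(sym, asym))
print(f"max gap between schemes: {max_gap:.4f}")
```

Both results are valid INT8 encodings of the same weights, yet for this sample the dequantised values disagree by up to several thousandths, before calibration data or operator kernels add further differences.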

The pre-condition: device-capability profiling on the actual target hardware

Choosing between distillation and quantisation only makes sense once the team knows what the target devices can actually execute. The same GPU profiling methodology that diagnoses server-side bottlenecks applies to constrained inference targets — browser WebGL/WebGPU contexts, mobile NPUs, and edge accelerators — but the inputs change: median-device performance rather than peak hardware specs, runtime-version coverage across the deployment cohort, and the operator subset each runtime actually supports without falling back to CPU.

The device-capability baseline is a project-specific measurement, not an industry benchmark. Skipping it produces the failure mode discussed in “why client-side ML projects miss latency targets before deployment”: a model validated on the development machine misses the latency budget on the median user device by a factor of 5–10× (an observed range across our edge-deployment engagements, not a guaranteed outcome) because the architectural decision was made against the wrong device profile. Establishing the baseline before the distillation-vs-quantisation decision is what lets the decision be made once rather than reversed after the first deployment cycle.
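
A baseline of this kind can be as simple as per-device latency samples summarised against the budget. The sketch below assumes latency has already been measured on each target device; the device names, sample values, and the 33 ms budget are hypothetical placeholders, not measurements from any project.

```python
from statistics import median


def latency_baseline(samples_ms_by_device, budget_ms):
    """Summarise measured inference latency across a device cohort."""
    # Median per device damps warm-up spikes and one-off stalls.
    per_device = {name: median(s) for name, s in samples_ms_by_device.items()}
    cohort_median = median(per_device.values())
    within = [name for name, ms in per_device.items() if ms <= budget_ms]
    return {
        "per_device_median_ms": per_device,
        "cohort_median_ms": cohort_median,
        "slowdown_vs_budget": cohort_median / budget_ms,
        "share_within_budget": len(within) / len(per_device),
    }


# Hypothetical measurements (milliseconds per inference) on target devices.
measurements = {
    "phone-flagship": [29.0, 31.5, 30.2],
    "tablet-older": [75.0, 78.2, 76.9],
    "phone-midrange": [88.0, 92.5, 90.1],
    "browser-webgl": [120.0, 118.5, 125.4],
    "phone-budget": [140.3, 151.0, 146.2],
}
report = latency_baseline(measurements, budget_ms=33.0)
print(report["cohort_median_ms"], report["share_within_budget"])
```

The design choice worth noting is that the summary is keyed on the median device rather than the fastest one, which is the comparison the compression decision should be made against.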

The platform-count decision criterion

| Situation | Recommended approach | Rationale |
|---|---|---|
| Single target platform | Quantisation | Platform-specific quantisation is well documented, tooling is mature, and quality validation is a single cycle |
| Two platforms with tolerance for minor quality divergence | Quantisation per platform, with cross-platform validation | Manageable if quality variation between platforms is acceptable to the use case |
| Three or more platforms | Distillation to a shared portable model | Quantisation per platform creates N independent validation cycles; quality divergence grows with N |
| Real-time quality consistency required across all targets | Distillation | A single set of weights avoids per-runtime quantisation differences; what remains is floating-point rounding between runtimes |
| Memory budget tight, accuracy threshold flexible | Quantisation | Quantisation cuts weight storage by up to 4× (FP32 to INT8) on the same architecture, at some cost in accuracy |
| Memory budget adequate, quality threshold strict | Distillation | Distillation preserves quality more reliably because the weights stay in floating point |
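
The table can be read as a small decision function. The sketch below is one possible encoding: the `Target` fields and the precedence between rows (consistency and platform count are checked first) are assumptions made for illustration, since the table itself does not rank its rows.

```python
from dataclasses import dataclass


@dataclass
class Target:
    """Hypothetical summary of a deployment target."""
    platform_count: int
    strict_cross_platform_consistency: bool = False
    memory_tight: bool = False
    quality_strict: bool = False


def recommend(target):
    """Map a deployment target to a row of the decision table."""
    # Assumed precedence: consistency and platform count dominate.
    if target.strict_cross_platform_consistency or target.platform_count >= 3:
        return "distillation"
    if target.quality_strict and not target.memory_tight:
        return "distillation"
    if target.platform_count == 2:
        return "quantisation per platform, with cross-platform validation"
    return "quantisation"


print(recommend(Target(platform_count=3)))                     # distillation
print(recommend(Target(platform_count=1, memory_tight=True)))  # quantisation
```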

In a text-to-speech inference optimisation project on edge we ran — deploying an audio synthesis model to iOS (CoreML), Android (ONNX Runtime), and browser targets — the three-platform requirement made quantisation impractical. Separate INT8 quantisation for CoreML and ONNX Runtime produced audible quality differences at certain phoneme transitions that were acceptable on one platform and not on the other. The resolution was distilling the full-size model to a smaller architecture that could be exported directly to both runtimes from a shared set of weights, producing consistent audio quality across all targets. The distilled model ran within latency targets on the lowest-specification test devices in the deployment cohort.

The ONNX deployment architecture consideration

For models targeting ONNX Runtime across multiple platforms, the deployment decision is separate from the compression decision. ONNX functions as a cross-platform model exchange format: a model exported to ONNX runs unmodified on any ONNX Runtime environment whose build supports the model’s opset and operators. This makes ONNX a natural choice for multi-platform deployments where the runtime environments are heterogeneous.

The key distinction is between ONNX-as-file-format (converting a model once for compatibility) and ONNX-as-deployment-architecture (designing the model export, versioning, and validation pipeline around ONNX Runtime as the canonical runtime). The latter means applying cross-platform GPU performance portability thinking to inference targets: the model should be validated against every target ONNX Runtime version in the deployment pipeline, not just the development version.
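
One way to enforce that validation is a parity gate in the pipeline that compares each runtime’s output with a reference output on fixed inputs. The sketch below shows only the comparison logic: running the model in each runtime is not shown, and the runtime labels, output values, and the 1e-3 tolerance are hypothetical.

```python
def max_abs_diff(reference, candidate):
    """Largest element-wise absolute difference between two output vectors."""
    if len(reference) != len(candidate):
        raise ValueError("output shapes differ")
    return max(abs(r - c) for r, c in zip(reference, candidate))


def parity_report(reference, outputs_by_runtime, atol=1e-3):
    """Check every runtime's output against the reference within atol."""
    report = {}
    for runtime, output in outputs_by_runtime.items():
        diff = max_abs_diff(reference, output)
        report[runtime] = {"max_abs_diff": diff, "passed": diff <= atol}
    return report


# Hypothetical outputs for one fixed input; in practice these would be
# collected by running the exported model in each target runtime.
reference = [0.1250, -0.5000, 0.7500]
outputs = {
    "onnxruntime-1.16": [0.1251, -0.5002, 0.7499],
    "onnxruntime-1.18": [0.1250, -0.5001, 0.7500],
    "onnxruntime-web": [0.1310, -0.4920, 0.7580],
}
report = parity_report(reference, outputs)
failing = [name for name, result in report.items() if not result["passed"]]
print("runtimes outside tolerance:", failing)
```

The tolerance is a per-project choice: it should be derived from the quality tolerance the task can absorb rather than picked as a round number.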

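When distillation is combined with ONNX export, the validation burden reduces significantly: a single distilled model is exported to ONNX once, validated against the target ONNX Runtime versions, and deployed to all target platforms. In our pipeline, CoreML targets receive the model through CoreML Tools’ ONNX import path, maintaining a single model artefact throughout. That import path is a legacy route that newer CoreML Tools releases have dropped in favour of converting directly from the training framework, so confirm which path your version supports; converting from the same distilled weights preserves the shared-artefact property either way.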

The distillation training procedure

Distillation is more involved than a quantisation pass; the team needs an explicit training procedure rather than a one-shot conversion. The procedure outlined below is the structure we use; specific hyperparameters depend on the task and the teacher–student capacity gap.

1. Capacity targeting. Choose the student architecture before training, not during. The student’s parameter count should be sized against the deployment memory budget on the lowest-tier target device, with a safety margin (we typically target 60–70% of the available budget to leave headroom for activations and runtime overhead). Smaller students need more training compute to close the quality gap; the smallest viable student is rarely the fastest path to an acceptable model.

2. Loss formulation. The standard distillation loss is a weighted sum of two terms: a task loss against ground-truth labels (cross-entropy for classification, L1 or L2 for regression, a domain-specific loss for generative models) and a distillation loss against the teacher’s outputs. For classification, the distillation loss is typically the KL divergence between the teacher’s and student’s softmax distributions, with a temperature parameter T (commonly 2–5) that softens the distributions to expose the teacher’s relative confidence over non-target classes. For regression and generative models, an L1 or L2 distance between teacher and student outputs is the standard form. The weighting between the two losses is tuned per task; a starting point is 0.5 * task_loss + 0.5 * (T * T) * distillation_loss, with the T*T factor compensating for the temperature scaling of gradients.

3. Layer alignment (optional but high-value). When the student architecture is similar enough to the teacher to permit it, adding intermediate-layer matching losses (typically the L2 distance between selected hidden states of teacher and student at corresponding depths) accelerates convergence and improves the final quality of the student. This is the approach used by FitNets and the wider family of feature-distillation methods. Layer alignment requires deciding which student layer corresponds to which teacher layer; in practice, evenly spaced selection is a robust default: student layer k of K is matched to the teacher layer at the same relative depth, k/K of the teacher’s depth. Hugging Face Transformers’ distillation recipes for DistilBERT and similar models implement a variant of this pattern and can be adapted as a reference.

4. Training data. Distillation benefits from training data that is broader than the original task training set, because the teacher provides supervision on every input regardless of whether ground-truth labels exist. Unlabelled data from the target domain — product images from the actual deployment environment, audio clips from the target user population, telemetry from the target device cohort — is high-value distillation data even without labels. The teacher’s outputs serve as the supervision signal.

5. Validation protocol. Quality validation should be conducted at three points: against the teacher (does the student match the teacher within the defined quality tolerance?), against the original task ground truth (does the student perform the task acceptably?), and against the deployment runtime (does the student, after export to CoreML / ONNX Runtime, produce numerically equivalent results to the PyTorch reference?). The third check is the one most often skipped and most often responsible for deployment-time surprises.

6. Iteration cadence. A first-pass distilled model rarely meets the quality target. Plan for at least three iteration cycles, each consisting of training, quality assessment against the validation suite, and refinement of the student architecture, the loss weighting, or the training data composition. Distillation projects that allocate time for one cycle and assume success ship under-trained students.
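
Steps 1 to 3 of the procedure can be sketched in plain Python for a single classification example. This is a minimal illustration under stated assumptions, not a training loop: the function names are hypothetical, the 0.65 budget fraction is the midpoint of the 60–70% range above, and a real implementation would use a tensor library and batch the computation.

```python
import math


def max_student_params(budget_bytes, bytes_per_param=4, budget_fraction=0.65):
    """Step 1: parameter ceiling that leaves headroom within the memory budget."""
    return int(budget_bytes * budget_fraction) // bytes_per_param


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label, T=2.0, alpha=0.5):
    """Step 2: alpha * task_loss + (1 - alpha) * T^2 * KL(teacher || student)."""
    # Task loss: cross-entropy against the ground-truth label at T = 1.
    task = -math.log(softmax(student_logits)[label])
    # Distillation loss: KL divergence between the softened distributions.
    p_teacher = softmax(teacher_logits, T)
    p_student = softmax(student_logits, T)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student))
    return alpha * task + (1 - alpha) * (T * T) * kl


def layer_map(student_depth, teacher_depth):
    """Step 3: match student layer k to the teacher layer at the same relative depth."""
    return {
        k: (k * teacher_depth + student_depth - 1) // student_depth  # ceiling
        for k in range(1, student_depth + 1)
    }


# Hypothetical numbers for illustration only.
print(max_student_params(100_000_000))           # params that fit 65% of 100 MB in FP32
print(distillation_loss([0.5, 1.5, 0.1], [2.0, 1.0, 0.1], label=0))
print(layer_map(student_depth=4, teacher_depth=12))
```

When the student’s logits equal the teacher’s, the KL term vanishes and only the task loss remains, which is a quick sanity check for any implementation of this loss.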

The hidden cost of the wrong choice

For a two-platform deployment, quantisation per platform is a reasonable choice and the validation cost is manageable. The hidden cost appears when a third target is added — a new device category, a new operating system version, or a new runtime — and the team discovers that the quantisation work done for the first two platforms does not transfer.

The device-baseline audit that precedes this decision — establishing which runtimes the target devices support, what quantisation schemes each runtime implements, and what the latency budget is across the device cohort — is the step that makes the distillation-vs-quantisation choice tractable. Without it, the choice is made on the wrong information. For teams approaching this decision for the first time, a GPU and Inference Optimisation Assessment evaluates the compression strategy against the platform count and quality requirements before implementation begins.