Why GPU-accelerating a simulation doesn’t always mean parallelising what you have

RF signal propagation simulation for tower placement is computationally expensive: the physics-based calculation of how signals attenuate, reflect, and diffract across terrain involves large spatial grids, irregular terrain data, and many-to-many path calculations between candidate tower positions and receiver locations. Running this on a CPU produces runtimes measured in hours or days, depending on grid resolution and the number of candidate configurations being evaluated.

The reflex response to this runtime problem is GPU acceleration: move the computation to a GPU, exploit the parallel execution model, and expect proportional speedup. In our experience with sequential simulation codebases ported naïvely, this approach typically produces gains in the range of 3–10× (an observed pattern from CPU-to-GPU port engagements, not a guaranteed outcome) — meaningful, but not transformative. The reason is that most simulation algorithms are not written for parallelism. They are written for correctness on sequential hardware, and their internal dependencies prevent parallel execution of most work.

The transformative result — turning multi-day runtimes into hours — requires something different: algorithmic redesign before GPU porting.

The profiling step that identifies what can actually be parallelised

Before any GPU porting begins, the critical question is whether the algorithm’s internal dependency structure permits parallelism. An algorithm with deep sequential dependencies — where step N depends on the result of step N-1 — will not benefit significantly from GPU parallelism regardless of how the porting is done.

GPU kernel profiling applied to simulation workloads serves the same diagnostic function it serves for neural network inference: it identifies which operations dominate execution time and whether those operations are compute-bound, memory-bound, or serialisation-bound. For RF signal propagation, profiling typically reveals that the dominant operation — path loss calculation across terrain grids — is embarrassingly parallel in principle but implemented with sequential data access patterns that prevent the GPU from scheduling the work efficiently.

The three-phase approach to simulation acceleration

The path from multi-day CPU runtimes to hours of GPU computation follows a consistent three-phase structure:

| Phase | Activity | What it produces |

|---|---|---|

| 1. Algorithm audit | Profile the sequential implementation; identify the top-3 execution-time operations; assess each for parallelism potential (independent work items, data dependency structure, memory access patterns) | A prioritised list of operations: parallelisable as-is, parallelisable with restructuring, inherently sequential |

| 2. Algorithmic restructuring | For operations in category 2 (parallelisable with restructuring): redesign the algorithm to expose the parallelism the GPU can exploit. This typically involves reformulating sequential iteration as batch operations, replacing recursive patterns with closed-form approximations where the physics permits, and restructuring data layouts for coalesced GPU memory access | A restructured algorithm with a significantly higher fraction of work that is parallelisable |

| 3. GPU implementation | Port the restructured algorithm to CUDA or OpenCL; validate correctness against the sequential reference; measure GPU utilisation to confirm the restructuring achieved the expected parallelism | A validated GPU implementation with measurable speedup |

In the CloudRF signal propagation and tower optimisation work we carried out, the algorithm audit identified that the terrain-based path loss calculation — which dominated execution time — had sequential dependencies introduced by an iterative grid-walking approach that was not required by the underlying physics. The RF propagation equations themselves are spatially independent: the path loss from a transmitter to any receiver point depends on the transmitter position and terrain profile between them, not on the calculation results for adjacent receiver points. The sequential implementation had introduced artificial dependencies by reusing intermediate results from neighbouring cells as an optimisation — an optimisation that was correct on sequential hardware but prevented parallelisation.

Restructuring the algorithm to calculate each receiver point independently — using a slightly more expensive but fully parallel calculation — removed these dependencies and exposed the work to GPU execution. The resulting implementation reduced simulation runtimes from multiple days to hours for production-scale terrain grids.

Why algorithmic restructuring typically outperforms kernel tuning for simulations

The algorithmic restructuring vs kernel tuning decision is particularly clear-cut for simulation workloads. Kernel tuning — optimising the CUDA implementation of a given algorithm — improves the performance of a computation that is already structured correctly for GPU execution. For a simulation algorithm that was designed for sequential execution, kernel tuning has a low ceiling: you can improve the efficiency of each sequential step, but you cannot parallelise the work if the algorithm’s structure prohibits it.

Algorithmic restructuring, by contrast, changes what fraction of the total computation can be parallelised. For simulations where the dominant operations are mathematically independent but have been implemented with sequential data structures, restructuring to expose that independence can shift GPU utilisation from the 15–20% range typical of naïve ports into the 70–90% range achievable when the work matches the hardware execution model (figures observed across our GPU acceleration engagements; the specific lift depends on the original algorithm’s dependency structure).

The implication is that the ROI on GPU hardware for simulation workloads is not determined by the hardware specification — it is determined by whether the algorithm has been designed to exploit parallel execution. The hardware is a prerequisite, not the variable.

The algorithm audit checklist: what to look for in the code

The algorithm audit (Phase 1) is the highest-leverage activity in the entire acceleration project. The following checks, applied to the existing sequential implementation, identify whether the dominant operations are restructurable and what the restructuring will involve.

| # | What to look for in the code | What it indicates | Restructuring response |

|---|---|---|---|

| 1 | Loops over a spatial grid where iteration i reads results written in iteration i-1 (forward sweeps, marching schemes) |

Artificial sequential dependency — may or may not be required by the underlying mathematics | Inspect the physics: if the equation at each cell depends only on inputs (not on neighbour results), the dependency is an implementation artefact and can be removed |

| 2 | Recursive function calls in the inner loop | Sequential dependency by construction; difficult to parallelise directly | Rewrite as iterative computation with explicit state, or replace with a closed-form approximation where the physics permits |

| 3 | Accumulator variables that aggregate across many work items (sum, max, min over the full grid) | Reduction pattern — parallelisable but requires care | Replace with GPU-friendly parallel reduction (CUB, Thrust, or hand-rolled tree reduction in CUDA) |

| 4 | Indirect memory access via index arrays (output[i] = compute(input[index[i]])) |

Memory access is uncoalesced — GPU bandwidth will be the bottleneck even after parallelisation | Restructure data layout to make the access pattern contiguous, or accept the bandwidth penalty if restructuring is more expensive than the gain |

| 5 | Conditional branches inside the inner loop where branch direction varies between adjacent work items | Warp divergence — GPU threads in the same warp will serialise across the branches | Sort or partition work items by branch direction before dispatch, so each warp executes a single branch |

| 6 | Calls to library functions (FFT, linear solve, sparse matrix-vector multiply) | Already-optimised primitives are available on GPU | Replace with cuFFT, cuSOLVER, cuSPARSE, or equivalent; do not re-implement |

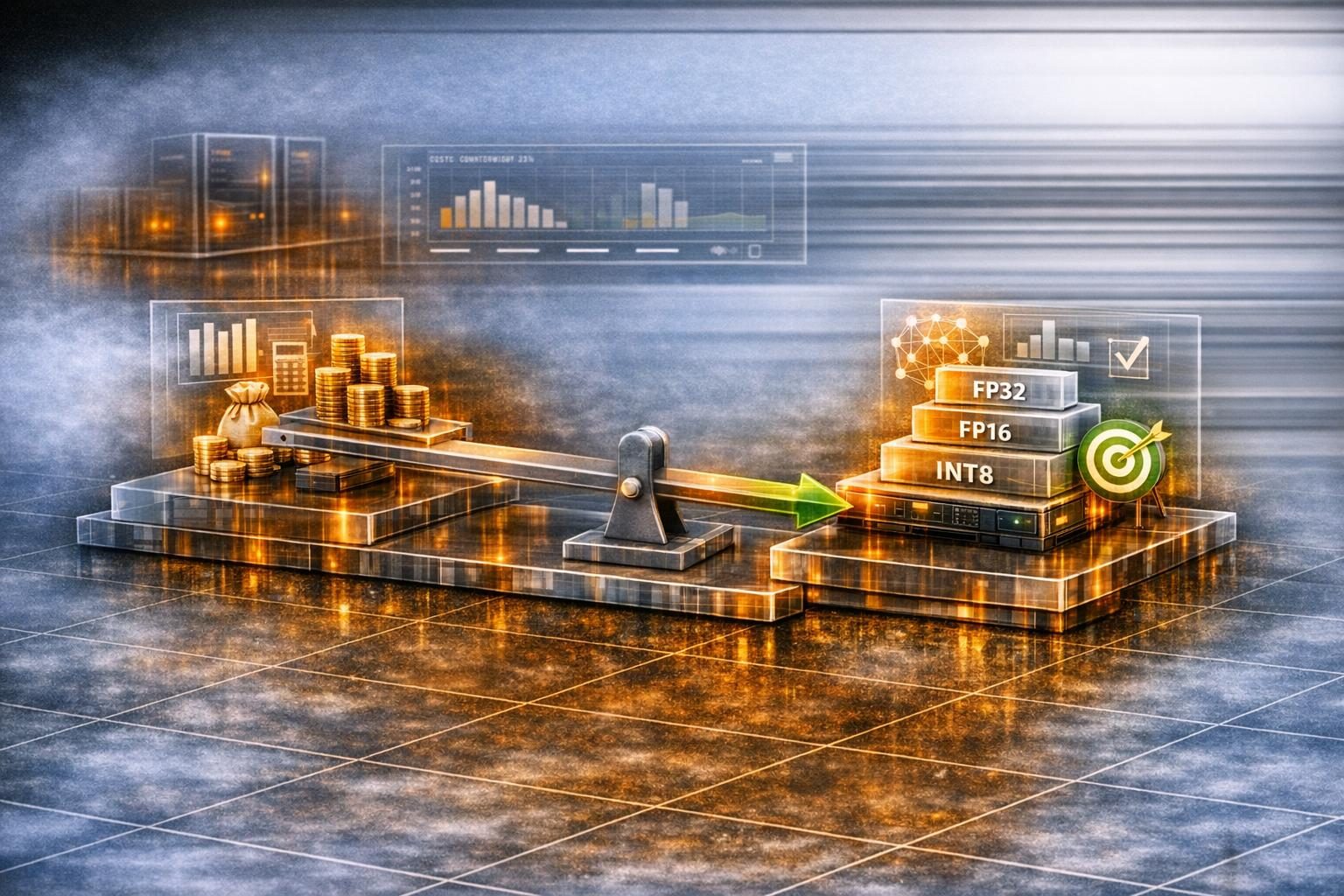

| 7 | Floating-point precision assumed to be 64-bit throughout | Default precision may exceed what the physics requires | Quantify the precision the physics actually requires; if 32-bit is sufficient, the GPU throughput gain is approximately 2× with no algorithmic change |

| 8 | Disk I/O or host-device transfers inside the hot loop | Transfer cost may dominate computation cost | Move I/O outside the loop; batch transfers; use pinned memory and asynchronous copies |

A codebase where checks 1–3 reveal artificial sequential dependencies is a strong candidate for the algorithmic restructuring path — the gain ceiling is high. A codebase where the dominant operations are inherently sequential (check 2 with no closed-form alternative) is a candidate for hybrid CPU-GPU execution rather than full GPU porting; the team should plan accordingly rather than discovering this after weeks of porting effort.

What remained imperfect

The restructured CloudRF implementation achieved its target throughput, but two limitations were intrinsic to the approach and remain worth naming for any team planning a similar acceleration:

First, the restructured calculation is slightly more expensive per receiver point than the original sequential implementation. The original code reused intermediate results from neighbouring cells precisely because doing so saved arithmetic. Removing the reuse to enable parallelism increased the total work performed; the speedup comes from doing more work in parallel, not less work overall. On hardware with limited parallelism (a small GPU, or a CPU fallback path), the restructured algorithm runs slower than the original. The implementation maintains both code paths for this reason.

Second, the path loss model itself is an approximation of the underlying electromagnetic physics. The acceleration project did not change the physical fidelity of the simulation — it changed the runtime at fixed fidelity. Higher-fidelity propagation models (full-wave electromagnetic solvers, ray-tracing with diffraction modelling) remain orders of magnitude more expensive than the path loss approach and were out of scope. Teams that need higher physical fidelity will face a larger engineering project than the one described here.

Planning throughput as the business metric

The practical value of reducing a tower-placement simulation from multi-day to hours is not the raw runtime number — it is the planning throughput multiplier. As an operational measurement from the CloudRF signal-propagation deployment: if a planning cycle previously evaluated 5 candidate tower configurations per week (limited by simulation time), a 20× speedup enables 100 configurations per week (project-specific measurement from that deployment, not a benchmarked industry rate). That multiplier changes the scope of what can be optimised: instead of selecting between a small number of candidate positions, the team can evaluate a large configuration space and converge on genuinely optimal placements.

For any organisation running simulation workloads on CPU infrastructure — signal propagation, physics simulation, financial risk modelling, climate modelling — the GPU audit question is not “will GPU make this faster” but “what has to change in the algorithm before GPU acceleration can deliver the speedup that the hardware is capable of?” A GPU Audit assesses the current algorithm structure and identifies whether the path to acceleration runs through GPU porting alone or requires algorithmic redesign first.