Augmented reality has grown from a niche concept into a common feature of digital life. Many people use it without noticing, for example when they place virtual furniture in their living room or try on glasses through an AR app.

This guide shows how augmented reality works and how AR technology adds digital content to the real world. The goal is to keep everything clear, easy to follow, and helpful for daily use.

What Augmented Reality Means Today

Augmented reality adds digital elements to the physical world. These can be text, 3D models, audio or other computer-generated details that appear to be part of real life.

More precisely, augmented reality combines digital objects with a real setting, keeps them aligned with that setting and updates them in real time (Craig, 2013; Milgram & Kishino, 1994). This is why many experts see it as part of mixed reality, where digital and physical layers can interact.

The main idea is simple: you look around through an AR device, and digital content appears in front of you. These digital elements stay in place even when you move, which makes the scene feel natural. Modern AR systems make this possible through advanced sensors, computer vision and fast graphics processing (Schmalstieg & Höllerer, 2016).

The growing interest in augmented reality comes from its flexibility. People use it in shopping, education, gaming, health care and industry. It enhances the user experience by building on real surroundings instead of replacing them with a fully virtual space.

The Core Parts of AR Technology

To understand how AR works, it helps to look at the main components inside an AR device. Designs vary, but the core structure tends to stay the same across phones, tablets and specialist headsets.

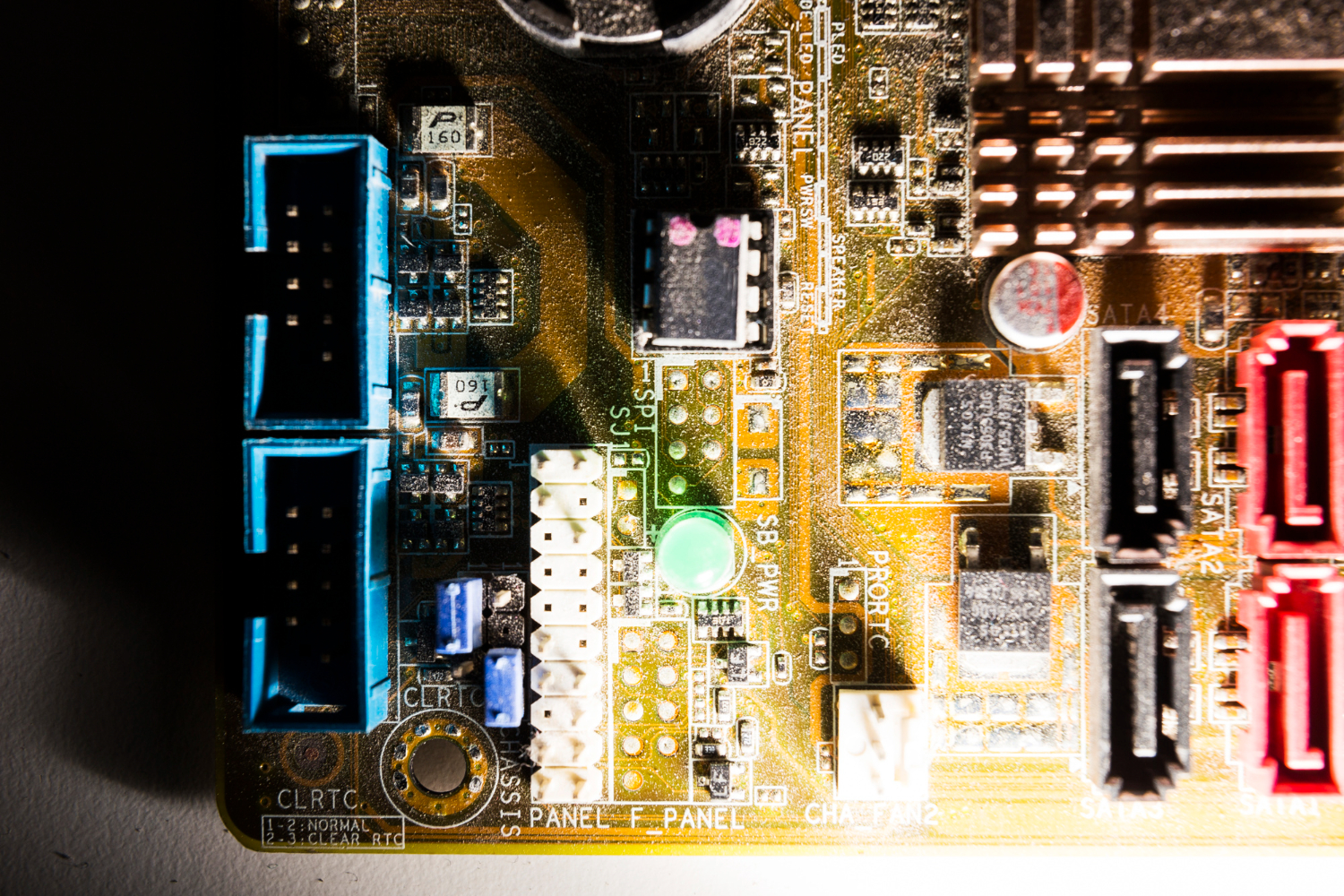

First, sensors track the real-world environment. These usually include cameras, motion sensors such as accelerometers and gyroscopes, and depth sensors. Cameras collect visual information, while motion sensors measure how the device moves (Zhang, 2021). Depth sensors judge how far away objects are, which helps place digital content in the correct position.
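
A common way to combine motion sensor readings is a complementary filter. It blends the gyroscope's angle, which is smooth but drifts over time, with the accelerometer's tilt, which is noisy but does not drift. The sketch below estimates a single pitch angle. It is an illustration, not the method described in Zhang (2021). The axis convention, the `(rate, ax, ay, az)` sample format and the 0.98 weight are all assumptions, and the accelerometer step assumes the device is not accelerating, so the sensor measures only gravity.

```python
import math


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Pitch (radians) implied by gravity, assuming the device is not accelerating."""
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_filter(samples, dt: float, alpha: float = 0.98) -> float:
    """Fuse gyroscope and accelerometer readings into one pitch estimate.

    samples: iterable of (gyro_rate_rad_per_s, ax, ay, az) tuples (assumed format).
    alpha:   weight on the integrated gyroscope angle (smooth but drifts);
             1 - alpha goes to the accelerometer angle (noisy but drift-free).
    """
    pitch = 0.0
    for rate, ax, ay, az in samples:
        gyro_estimate = pitch + rate * dt           # integrate angular rate
        accel_estimate = accel_pitch(ax, ay, az)    # absolute tilt from gravity
        pitch = alpha * gyro_estimate + (1 - alpha) * accel_estimate
    return pitch


# Device held still at a 0.3 rad tilt while the gyroscope reports a small bias.
g = 9.81
still = [(0.01, -g * math.sin(0.3), 0.0, g * math.cos(0.3))] * 500
print(round(complementary_filter(still, dt=0.01), 3))
```

Integrating the biased gyroscope on its own would drift steadily away from the true angle. The accelerometer term keeps pulling the estimate back, so the result settles close to 0.3.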

Next, the AR system interprets the scene: it detects surfaces, edges, lighting and patterns. This step relies on computer vision, which lets the device build a model of the physical world. With this model, the AR application can place digital objects on the floor, on a table or even on a moving target.
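
Surface detection often works by fitting planes to 3D points recovered from the camera or depth sensor. RANSAC is a classic technique for this. It repeatedly fits a plane through three random points and keeps the plane that the most other points agree with. The sketch below is a plain RANSAC plane fit, not the algorithm of any particular AR framework. It assumes a y-up coordinate system and a list of 3D points as input, and the threshold and iteration count are illustrative values.

```python
import random

Vec3 = tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def fit_plane_ransac(points: list[Vec3], iterations: int = 200,
                     threshold: float = 0.01, seed: int = 0):
    """Return (normal, d, inliers) for the plane n·p + d = 0 with the most support."""
    rng = random.Random(seed)
    best_n, best_d, best_inliers = None, 0.0, []
    for _ in range(iterations):
        p1, p2, p3 = rng.sample(points, 3)
        n = cross(sub(p2, p1), sub(p3, p1))
        length = dot(n, n) ** 0.5
        if length < 1e-9:                  # collinear sample: no unique plane
            continue
        n = (n[0] / length, n[1] / length, n[2] / length)
        d = -dot(n, p1)
        # Points within `threshold` metres of the candidate plane support it.
        inliers = [p for p in points if abs(dot(n, p) + d) < threshold]
        if len(inliers) > len(best_inliers):
            best_n, best_d, best_inliers = n, d, inliers
    return best_n, best_d, best_inliers


def is_horizontal(normal: Vec3, tolerance: float = 0.95) -> bool:
    """True for floor- or table-like planes, assuming +y points up."""
    return abs(normal[1]) >= tolerance
```

Random sampling lets the fit ignore stray points, such as a chair leg above the floor, that would skew an ordinary least-squares plane.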

After this, the system generates the digital elements, which might be 2D labels or 3D shapes. The software renders them to match the room's lighting and the camera's viewing angle. When done well, the effect feels natural and stable.
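
One simple way to match a room's lighting is to measure how bright the camera image is and scale the virtual object's shading to suit. The sketch below combines that brightness estimate with Lambertian (diffuse) shading. It assumes pixels arrive as linear RGB values between 0 and 1, uses the Rec. 709 luminance weights, and takes `light_dir` as the direction from the surface toward the light. The `k_ambient` and `k_diffuse` weights are hypothetical values chosen for illustration.

```python
import math


def estimate_ambient(pixels):
    """Mean relative luminance of camera pixels given as linear RGB in 0..1."""
    total = sum(0.2126 * r + 0.7152 * g + 0.0722 * b for r, g, b in pixels)
    return total / len(pixels)


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def shade(base_color, normal, light_dir, ambient, k_ambient=0.3, k_diffuse=0.7):
    """Lambertian shading of a virtual surface, scaled by the camera's brightness.

    k_ambient and k_diffuse are hypothetical weights for illustration.
    """
    n, l = normalize(normal), normalize(light_dir)
    lambert = max(0.0, sum(a * b for a, b in zip(n, l)))   # cosine falloff
    intensity = ambient * (k_ambient + k_diffuse * lambert)
    return tuple(min(1.0, c * intensity) for c in base_color)
```

In a dim room, `estimate_ambient` returns a lower value, so the same red object is drawn darker and stops looking out of place next to real objects.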

Finally, the display shows the combined view of the real world and digital content. A phone or tablet shows the live camera feed with the digital content drawn on top, while AR glasses place the image on transparent lenses so the user sees both layers at once. All of this happens in real time, which is why the hardware must respond with minimal lag.

These steps repeat many times per second. Even slight delays can reduce immersion or cause discomfort, especially in applications that involve movement.
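
To make "many times per second" concrete, here is the arithmetic behind a frame budget. The stage names match the steps above, but the millisecond timings are hypothetical placeholders rather than measurements from any device.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / fps


def fits_budget(stage_ms: dict[str, float], fps: float) -> bool:
    """True if the summed per-stage times fit inside one frame."""
    return sum(stage_ms.values()) <= frame_budget_ms(fps)


# Hypothetical per-frame timings for the four steps described above.
stages = {"sensing": 2.0, "scene understanding": 6.0, "rendering": 5.0, "display": 2.0}

print(f"{frame_budget_ms(60):.1f} ms per frame at 60 fps")   # 16.7 ms per frame at 60 fps
print(fits_budget(stages, 60))   # True: 15 ms fits in 16.7 ms
print(fits_budget(stages, 90))   # False: 15 ms exceeds 11.1 ms
```

Simply adding the stage times together is a pessimistic simplification, because real systems often run stages in parallel. Even so, the arithmetic shows how quickly the budget shrinks as the frame rate rises.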

How AR Applications Interact with the Real World

Most people encounter augmented reality through an AR app. For example, furniture viewing tools help people check how a chair would look in a room.

Gaming apps place digital characters in streets or parks. Language apps overlay translations on signs. All these applications rely on a simple workflow: detect, track, place and update.

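Detection is the moment the device notices a flat surface or an image target. Tracking means the device keeps estimating its own position and orientation relative to that surface or target as the user moves. The AR application then places digital objects at a fixed point, or anchor, in the scene. Updating means redrawing them every frame from the new viewpoint, so they stay in the same place in the room and only appear larger or smaller as the user moves closer or further away.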
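
The place and update steps can be sketched with a simple pinhole camera model. The anchor is stored once in world coordinates. Every frame, the update step re-expresses it relative to the camera's latest tracked pose and projects it onto the screen. This sketch makes several simplifying assumptions: the camera only turns about the vertical axis, the axes run x right, y up and z forward, and `FOCAL_PX`, `CX` and `CY` are hypothetical values for a 640x480 image.

```python
import math
from typing import Optional

# Hypothetical intrinsics for a 640x480 camera image.
FOCAL_PX = 500.0
CX, CY = 320.0, 240.0


def world_to_camera(point, cam_pos, cam_yaw):
    """Express a world point in camera coordinates.

    The camera sits at cam_pos and is turned by cam_yaw radians about the
    vertical (y) axis; camera axes are x right, y up, z forward.
    """
    dx = point[0] - cam_pos[0]
    dy = point[1] - cam_pos[1]
    dz = point[2] - cam_pos[2]
    c, s = math.cos(cam_yaw), math.sin(cam_yaw)
    # Apply the inverse (transpose) of the camera's yaw rotation.
    return (c * dx - s * dz, dy, s * dx + c * dz)


def update(anchor, cam_pos, cam_yaw) -> Optional[tuple[float, float, float]]:
    """One update step: where the fixed anchor appears on screen this frame.

    Returns (u, v, pixels_per_metre), or None if the anchor is behind the camera.
    """
    x, y, z = world_to_camera(anchor, cam_pos, cam_yaw)
    if z <= 1e-6:
        return None
    scale = FOCAL_PX / z                              # nearer anchors are drawn larger
    return (CX + scale * x, CY - scale * y, scale)    # image v grows downwards


anchor = (0.0, 0.0, 2.0)                      # placed once, 2 m in front of the start pose
print(update(anchor, (0.0, 0.0, 0.0), 0.0))   # (320.0, 240.0, 250.0)
print(update(anchor, (0.0, 0.0, 1.0), 0.0))   # (320.0, 240.0, 500.0)
```

When the user steps 1 m closer, the anchor stays at the same screen position but is drawn twice as large. The object itself never moves in world coordinates; only the camera pose changes.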

The ability to interact with the real world is what makes augmented reality different from traditional animations. Users can walk around a digital model, change its size or give commands. Some AR technology also recognises gestures. When the system responds instantly, the result feels like a natural extension of everyday activity (Jerald, 2015).

The accuracy of this process depends on the quality of the sensors and the algorithms. High‑end AR glasses tend to give a stronger sense of presence because the view is wider and more stable. Mobile devices still offer strong performance thanks to rapid improvements in chip design and camera quality.

The Role of Mixed Reality and Its Connection to AR

Mixed reality often causes confusion because it sits between augmented reality and virtual reality. The idea covers any experience where digital and physical layers interact. In practice, augmented reality is a large part of mixed reality.

Both add digital content to the real world. Mixed reality can do more because it lets digital objects react to the world around you.

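These technologies aim to give you an immersive experience while keeping you in the real world. Many researchers describe a continuum that runs from a fully real environment at one end to a fully virtual environment, as in virtual reality, at the other (Milgram & Kishino, 1994). Mixed reality fills the space between these two ends, and augmented reality sits within it, closer to the real end.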

This broad view helps designers create solutions for real-life situations. For example, training tools can overlay step-by-step instructions on machinery. Doctors can see digital markers during procedures. Students can view models of planets or molecules on their desks.

Digital Elements and User Experience in Modern AR

The user experience in augmented reality depends on how clearly digital elements fit the surroundings. If a 3D model floats or shifts as the camera moves, it breaks the effect. Strong AR systems keep digital objects steady even during fast movement. Good lighting, consistent shadows and accurate depth handling all contribute to realism.
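
One common way to keep objects steady is to smooth the raw tracked position before rendering. The sketch below uses an exponential moving average, which is a simple filter. Production systems may use adaptive variants, such as the One Euro filter. The `alpha` value and the 3D tuple input are illustrative assumptions.

```python
class PositionSmoother:
    """Exponential moving average over tracked 3D positions.

    alpha near 1 follows the raw tracking closely (responsive but jittery);
    alpha near 0 is steadier but lags behind real movement.
    """

    def __init__(self, alpha: float = 0.3):
        self.alpha = alpha
        self.state = None

    def update(self, measured):
        if self.state is None:
            self.state = tuple(measured)      # first frame: nothing to average yet
        else:
            self.state = tuple(self.alpha * m + (1 - self.alpha) * s
                               for m, s in zip(measured, self.state))
        return self.state


smoother = PositionSmoother(alpha=0.3)
for frame in range(100):
    # Raw tracking jitters 5 cm either side of x = 1.0 on alternate frames.
    noisy = (1.0 + 0.05 * (-1) ** frame, 0.0, 2.0)
    steady = smoother.update(noisy)
print(steady)
```

This design involves a trade-off with the latency concern raised earlier. Heavier smoothing hides jitter but makes the object trail behind fast movements.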

Developers also aim to keep interactions simple. Most users understand basic actions such as pinching to resize or tapping to rotate. Clear instructions help people avoid confusion, especially in complex environments. Designers must also consider how long people will use the AR application, as long sessions on AR glasses or phones can feel tiring.
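
A pinch-to-resize gesture usually reduces to the ratio between the current and starting distances between two fingers. The sketch below computes that ratio from screen positions. The `min_scale` and `max_scale` limits are hypothetical values that stop the object from shrinking to nothing or filling the view.

```python
import math


def pinch_scale(start_a, start_b, now_a, now_b, start_scale=1.0,
                min_scale=0.1, max_scale=10.0):
    """New object scale from a two-finger pinch.

    start_a/start_b: finger positions (pixels) when the pinch began.
    now_a/now_b:     finger positions in the current frame.
    The scale follows the ratio of finger distances, clamped to a sane range.
    """
    d0 = math.dist(start_a, start_b)
    if d0 == 0:
        return start_scale           # fingers started at one point: ignore
    ratio = math.dist(now_a, now_b) / d0
    return min(max(start_scale * ratio, min_scale), max_scale)


print(pinch_scale((0, 0), (100, 0), (0, 0), (200, 0)))   # 2.0: fingers spread apart
print(pinch_scale((0, 0), (100, 0), (0, 0), (50, 0)))    # 0.5: fingers pinched together
```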

Digital content must add value rather than distract. Well‑designed solutions support tasks without blocking the view. This point becomes important in professional settings, where precision matters and mistakes can cause harm. Reliable design guidelines help teams maintain quality across different devices.

The Future of AR Devices and Real‑World Integration

The future of augmented reality will depend on lighter AR devices, longer battery life and better displays. Several companies now focus on AR glasses that look more like ordinary eyewear. These glasses aim to blend digital information with real life in a natural and unobtrusive way (Zhang, 2021). Improvements in chip efficiency also allow AR technology to run faster without getting too hot.

As more industries adopt AR, the need for consistent standards grows. Research groups and international bodies have begun to set guidelines for safety, accuracy and usability (IEEE, 2023). These standards help developers build reliable solutions while keeping user needs at the centre.

How TechnoLynx Can Support Your AR Goals

TechnoLynx helps organisations bring augmented reality ideas to life through tailored solutions. Our team works with clients who want to improve training, design, customer engagement or internal workflows using digital elements that fit smoothly into real environments. We focus on clear communication, careful analysis and practical design choices that support long‑term growth.

If you want to create or refine an augmented reality project, contact TechnoLynx today and let us help you build a reliable and effective AR solution.

References

- Craig, A.B. (2013) Understanding Augmented Reality: Concepts and Applications. Morgan Kaufmann.
- IEEE (2023) IEEE Standard for Virtual and Augmented Reality: Terminology and Standards Guidelines. IEEE Standards Association.
- Jerald, J. (2015) The VR Book: Human-Centered Design for Virtual Reality. ACM Books.
- Milgram, P. & Kishino, F. (1994) ‘A taxonomy of mixed reality visual displays’, IEICE Transactions on Information and Systems, E77-D(12), pp. 1321–1329.
- Schmalstieg, D. & Höllerer, T. (2016) Augmented Reality: Principles and Practice. Addison-Wesley.
- Zhang, Z. (2021) ‘Advances in camera tracking and sensor fusion for augmented reality’, IEEE Transactions on Visualization and Computer Graphics, 27(5), pp. 2535–2547.

Image credits: Freepik