Why operators stop trusting automated surveillance alerts

When an operator receives a CV-driven alert that turns out to be wrong, the operationally important question is not “was the model confident?” but “which stage of the pipeline produced this wrong answer, and why?” In a monolithic CV-to-alert pipeline, that question has no answer. The alert arrives with a single confidence number; the operator cannot tell whether the detection model misclassified, the temporal filter missed a context cue, the zone rule fired on a pixel boundary, or all three contributed. After enough unattributable wrong answers, the operator stops trusting alerts as a category — not because the model is bad but because the pipeline cannot explain itself.

This is the failure that observability is designed to prevent. The false-alarm reduction work in the sibling article addresses whether the alert should fire; this article addresses whether the operator can trace why it fired once it has. Both problems must be solved for an automated surveillance system to remain operationally useful past its first quarter of deployment.

Restoring traceable trust requires architecture, not better models.

What observability means in a CV pipeline

An observable pipeline is one where every decision is attributable: given an alert, a security operator can trace which pipeline stage produced it, what evidence each stage contributed, and where in the pipeline the decision would look different if the environment or thresholds changed.

This is distinct from explainability in the AI sense (understanding why a neural network produces a specific output). Pipeline observability is an operations engineering property: it is about decomposing the pipeline into stages with defined inputs, defined outputs, and measurable confidence at each transition.

The stages of a production surveillance CV pipeline that supports observability:

| Stage | Function | Observable signal |
|---|---|---|
| Detection | Localise potential subjects of interest in the frame (persons, vehicles, objects) | Bounding box coordinates, detection confidence score, detection model version |
| Classification | Identify what has been detected (person, vehicle type, action class) | Class label, per-class confidence, classification model version |
| Temporal context | Assess whether the detected event is consistent with its context (duration, trajectory, zone history) | Event duration, trajectory plausibility score, zone context flags |
| Spatial validation | Assess whether the location and geometry of the detection are consistent with physical constraints | Zone boundary check, height/proportion plausibility, stereo consistency (where multi-camera is available) |
| Rule evaluation | Apply operational rules (person in restricted zone, vehicle stationary > N minutes) | Rule name, rule parameters, rule evaluation result, override flags |
| Alert routing | Route qualified events to operator queues with priority and context information | Alert priority, evidence bundle (frame crops, detection history, stage confidence), queue assignment |

Each stage has a defined interface with the next. A false positive that reaches the alert routing stage can be traced back through the stages in table order: was it a detection error (stage 1), a classification error (stage 2), a temporal context failure (stage 3), a spatial validation miss (stage 4), or a rule configuration mismatch (stage 5)? Without this decomposition, the root cause is “the model was wrong,” which provides no actionable information for fixing the system.
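To make the idea of a traceable alert concrete, here is a minimal sketch of a per-alert provenance record. The stage names follow the table above; the class names (`StageRecord`, `AlertTrace`), the field layout, and the sample values are illustrative assumptions, not a description of any particular product's schema.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class StageRecord:
    """What one pipeline stage contributed to an alert (hypothetical schema)."""
    stage: str                          # e.g. "detection", "rule_evaluation"
    passed: bool                        # did the stage let the event through?
    confidence: Optional[float] = None  # None for stages without a score
    evidence: Dict[str, Any] = field(default_factory=dict)


@dataclass
class AlertTrace:
    """Ordered list of stage records attached to a single alert."""
    alert_id: str
    records: List[StageRecord] = field(default_factory=list)

    def add(self, record: StageRecord) -> None:
        self.records.append(record)

    def weakest_stage(self) -> Optional[StageRecord]:
        """Stage with the lowest recorded confidence: a first place to look
        when an operator disputes the alert."""
        scored = [r for r in self.records if r.confidence is not None]
        return min(scored, key=lambda r: r.confidence) if scored else None


# Illustrative values only.
trace = AlertTrace("alert-0001")
trace.add(StageRecord("detection", True, 0.91,
                      {"bbox": (120, 40, 60, 150), "model_version": "det-v3"}))
trace.add(StageRecord("classification", True, 0.58, {"label": "person"}))
trace.add(StageRecord("temporal_context", True, 0.77, {"duration_s": 14}))
trace.add(StageRecord("rule_evaluation", True, None,
                      {"rule": "person_in_restricted_zone"}))

weakest = trace.weakest_stage()
print(f"Weakest stage: {weakest.stage} ({weakest.confidence:.2f})")
```

With a record like this attached to every alert, "which stage should I look at first?" becomes a lookup rather than a guess. In this example the classification stage is the weak link, not detection.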

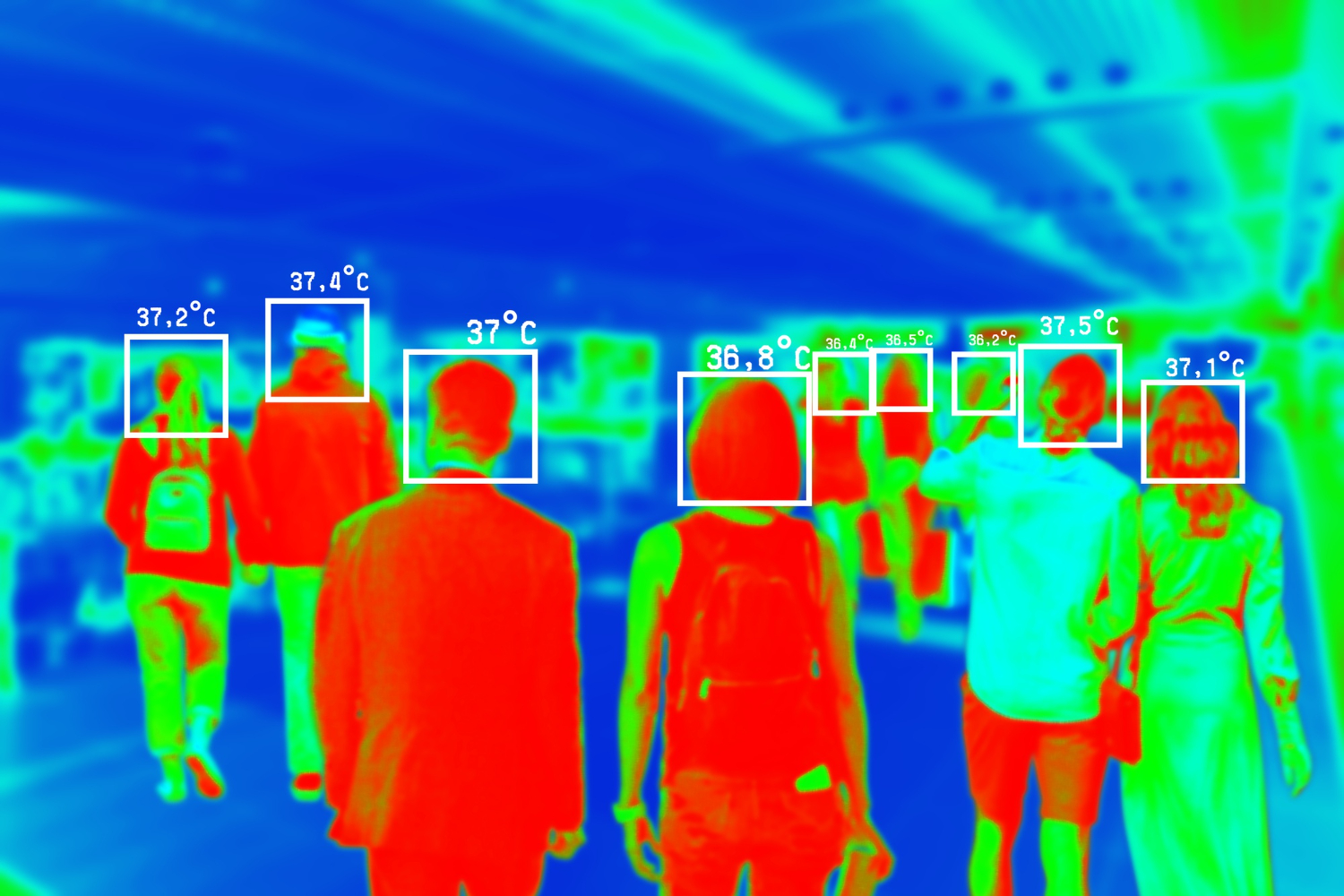

Multi-camera continuity as a confirmation stage

For events that span multiple camera views (a subject moving through a site, a vehicle tracked across a perimeter), multi-camera tracking provides a confirmation signal that single-camera pipelines cannot. A detection in a single camera that is ambiguous (partially occluded subject, borderline confidence) can be resolved when it is correlated with a detection in a second camera covering a non-overlapping zone.

In the multi-target multi-camera tracking system we developed for a logistics security environment, the pipeline used a probabilistic trajectory model to link detections across non-overlapping camera views. A detection on camera A that was below the alert threshold in isolation could qualify for alerting when the trajectory model assigned high probability to a matching detection on camera B at the expected time and location. Conversely, a high-confidence detection on camera A that had no trajectory continuation on camera B was downweighted as a candidate alert, catching a class of false positives that single-camera confidence scoring cannot address.

This architecture means that the observable signal at the multi-camera stage is not just “detection confirmed” but “detection corroborated by trajectory model with probability P, based on N linked observations across M cameras.” That is information an operator can evaluate and act on.
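The effect described above, where corroboration lifts a borderline detection and the absence of corroboration downweights a confident one, can be illustrated with a small fusion rule. The sketch below combines the two signals in log-odds space. This is an assumed, deliberately simple stand-in for illustration, not the probabilistic trajectory model used in the deployment described above, and the threshold value and all names are hypothetical.

```python
import math
from dataclasses import dataclass


def logit(p: float) -> float:
    p = min(max(p, 1e-6), 1 - 1e-6)  # clamp to avoid infinities at 0 and 1
    return math.log(p / (1 - p))


def corroborated_score(detection_conf: float, link_prob: float) -> float:
    """Add log-odds of the single-camera confidence and the trajectory link
    probability. A link_prob of 0.5 is neutral and leaves the score as is."""
    z = logit(detection_conf) + logit(link_prob)
    return 1 / (1 + math.exp(-z))


@dataclass
class Corroboration:
    """Observable signal for the multi-camera stage (hypothetical shape)."""
    link_prob: float      # P from the trajectory model
    n_observations: int   # N linked observations
    n_cameras: int        # M cameras involved

    def describe(self) -> str:
        return (f"corroborated with probability {self.link_prob:.2f}, "
                f"{self.n_observations} linked observations "
                f"across {self.n_cameras} cameras")


ALERT_THRESHOLD = 0.8  # hypothetical value

borderline = corroborated_score(0.55, 0.90)  # weak detection, strong link
orphaned = corroborated_score(0.90, 0.10)    # strong detection, no continuation

print(f"{borderline:.2f} alert={borderline >= ALERT_THRESHOLD}")
print(f"{orphaned:.2f} alert={orphaned >= ALERT_THRESHOLD}")
print(Corroboration(0.90, 7, 2).describe())
```

Under this rule the borderline detection rises to roughly 0.92 and qualifies, while the confident but uncorroborated detection falls to roughly 0.50 and does not. A production system would need to check that both inputs are calibrated before combining them this way.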

Zone-aware threshold configuration

A production CCTV deployment covers heterogeneous zones: high-traffic public areas, low-traffic restricted zones, outdoor perimeters with variable lighting, indoor areas with controlled conditions. A single confidence threshold applied uniformly across all zones will be miscalibrated for most of them.

Zone-aware threshold configuration sets per-zone confidence thresholds based on the empirical false-positive and false-negative rates of that specific camera zone in production. A camera covering a busy public entrance with frequent benign motion events requires a higher confidence threshold to avoid alert saturation. A camera covering a restricted server room with very few expected motion events can use a lower threshold without generating significant false positives.

Implementing per-zone configuration requires the pipeline to track false-positive and false-negative rates per camera zone over time, which is only possible in an observable pipeline where each alert’s provenance is recorded. This is a direct operational return on the investment in pipeline observability: as labelled outcomes accumulate, each zone’s threshold can be recalibrated from that zone’s own production data.
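As a rough illustration of how recorded alert outcomes could drive per-zone thresholds, the sketch below picks, for each zone, the lowest confidence threshold at which operator-confirmed alerts reach a target precision. The record shape, the function name, the target value, and the sample data are all assumptions. The sketch also uses only alerts that actually fired, so it covers the false-positive side; estimating false negatives would additionally require sampled and labelled sub-threshold events.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# (zone_id, alert_confidence, operator_confirmed): hypothetical record shape
Outcome = Tuple[str, float, bool]


def zone_thresholds(outcomes: Iterable[Outcome],
                    target_precision: float = 0.9,
                    fallback: float = 0.95) -> Dict[str, float]:
    """Lowest per-zone threshold whose retained alerts meet target_precision.
    Zones where no candidate meets the target get the fallback value."""
    by_zone: Dict[str, List[Tuple[float, bool]]] = defaultdict(list)
    for zone, conf, confirmed in outcomes:
        by_zone[zone].append((conf, confirmed))

    thresholds: Dict[str, float] = {}
    for zone, rows in by_zone.items():
        thresholds[zone] = fallback
        # Try each observed confidence as a candidate threshold, lowest first.
        for candidate in sorted({conf for conf, _ in rows}):
            kept = [ok for conf, ok in rows if conf >= candidate]
            if sum(kept) / len(kept) >= target_precision:
                thresholds[zone] = candidate
                break
    return thresholds


# Synthetic outcomes: a noisy entrance and a quiet server room.
history: List[Outcome] = [
    ("entrance", 0.55, False), ("entrance", 0.62, False),
    ("entrance", 0.71, False), ("entrance", 0.83, True),
    ("entrance", 0.90, True),
    ("server_room", 0.41, True), ("server_room", 0.47, True),
    ("server_room", 0.66, True),
]

print(zone_thresholds(history))
```

On this synthetic history the busy entrance ends up with a threshold of 0.83 and the server room with 0.41, matching the intuition in the text. Real deployments would want a minimum sample count per zone before trusting any recalibrated value.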

The pipeline-stage testing matrix

Observability is not only a runtime property; it is also a testing property. Each stage of the pipeline carries different testing responsibilities, and conflating them — testing only end-to-end, or testing only individual model components — leaves predictable failure modes uncovered. The matrix below names the test responsibilities per stage and the tooling that supports them.

| Stage | Unit test responsibility | Integration test responsibility | System test responsibility |
|---|---|---|---|
| Detection | Per-class precision and recall on a fixed labelled image set; bounding-box IoU against ground-truth annotations. Frameworks: PyTorch test harness with the validation split; OpenCV-based annotation comparison. | Detection model integrated with the pre-processing pipeline (frame decode, resize, normalisation) using NVIDIA DeepStream or a custom GStreamer-based pipeline; assert that pre-processing changes do not silently degrade per-class metrics. | Detection running against a recorded production stream replay; assert sustained throughput at the deployed frame rate, no memory growth over a 24-hour replay. |
| Classification | Top-1 and top-3 accuracy on a fixed labelled crop set, including hard-negative crops; per-class confidence calibration (ECE, reliability diagram). Frameworks: PyTorch test harness; scikit-learn calibration utilities. | Classification model fed by detection bounding boxes (not by ground-truth boxes); assert that detection localisation noise does not collapse classification accuracy. | Per-class accuracy monitoring on production traffic with sampled operator labels; alert on per-class accuracy regression beyond a defined window. |
| Temporal context | Trajectory plausibility scoring against synthetic trajectories with known plausibility; temporal smoothing window correctness on simulated detection sequences. | Temporal context fed by live classifier output; assert that classifier confidence drops are reflected in temporal stability metrics. | Temporal aggregator output stability over a 24-hour replay; assert that aggregator state remains bounded under a worst-case detection burst. |
| Spatial validation | Zone boundary check against synthetic detections at zone edges; height/proportion plausibility against synthetic crops with known dimensions. | Spatial validation fed by live detection coordinates after camera calibration; assert that calibration drift is caught by the validation stage. | Cross-camera consistency check using overlapping camera zones; assert that the same physical event produces consistent spatial validation results across cameras that see it. |
| Rule evaluation | Each rule tested independently against synthetic event streams that exercise its true and false branches. Tooling: standard unit test framework (pytest, JUnit), no ML required. | Rule engine integrated with the upstream pipeline; assert that rules fire on synthetic events injected into the live stream. | Rule firing rate per rule per camera zone monitored in production; alert on sudden changes in rule rejection or firing rate. |
| Alert routing | Priority assignment, queue routing, evidence bundle assembly tested against synthetic alerts. | Alert routing integrated with the operator workflow tool; assert that evidence bundles are complete and consumable by the downstream operator UI. | Operator dismissal rate per alert type tracked in production; alert on dismissal rate exceeding a defined threshold per alert category. |
| End-to-end | Not applicable as a unit test responsibility. | Full pipeline against a small validated event corpus; assert that the alert decisions for the corpus match expectations. | Full pipeline against recorded production streams; assert sustained throughput, latency budget, and operator-acceptable false-positive and false-negative rates over a measurement window. |

The failure modes the matrix is designed to expose: a model that passes its unit tests but fails when integrated with the pre-processing pipeline (input distribution shift); a rule that passes its unit tests but fails because it is fed by an upstream stage whose output format changed; an end-to-end pipeline that produces correct alerts in test conditions but exceeds the latency budget under sustained production load. Each of these recurs in production CV systems, and each is caught by a test category that already exists. The matrix names which category catches which mode.
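The rule-evaluation unit-test cell is the easiest one to show in code, because it needs no model at all. Below is a minimal pytest-style sketch for a "vehicle stationary longer than N minutes" rule, tested against synthetic tracks that exercise both its true and false branches. The rule implementation, the `Observation` type, and the synthetic track generator are hypothetical and deliberately simple (the rule anchors on the first observed position only).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    t: float  # seconds since the start of the synthetic stream
    x: float  # ground-plane position in metres
    y: float


def stationary_too_long(track: List[Observation], max_minutes: float,
                        radius_m: float = 1.0) -> bool:
    """Fire when the subject stays within radius_m of its first position
    for longer than max_minutes."""
    if not track:
        return False
    first = track[0]
    for obs in track:
        moved = ((obs.x - first.x) ** 2 + (obs.y - first.y) ** 2) ** 0.5
        if moved > radius_m:
            return False
    return (track[-1].t - first.t) > max_minutes * 60


def synthetic_track(duration_s: float, drift_m: float = 0.0,
                    step_s: float = 10.0) -> List[Observation]:
    """One observation every step_s seconds, drifting drift_m per step."""
    n = int(duration_s // step_s) + 1
    return [Observation(t=i * step_s, x=drift_m * i, y=0.0) for i in range(n)]


def test_fires_when_parked_past_limit():
    assert stationary_too_long(synthetic_track(360), max_minutes=5)


def test_silent_when_parked_under_limit():
    assert not stationary_too_long(synthetic_track(240), max_minutes=5)


def test_silent_when_vehicle_keeps_moving():
    assert not stationary_too_long(synthetic_track(360, drift_m=0.5),
                                   max_minutes=5)
```

Because the rule takes plain observations rather than model output, these tests stay valid when the detection or classification model is retrained. Only the integration-level test needs to confirm that the upstream stages still produce observations in the shape the rule expects.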

The connection to pipeline modularity

Observable pipelines and modular CV pipeline design are the same architectural principle applied to the surveillance domain. The modular pipeline can be independently tested at each stage, allowing the team to validate, for example, that the detection stage has acceptable recall on the target subject categories before evaluating the classification stage’s precision.

The false-alarm reduction problem is fundamentally solved at the architecture level, not the model level. A modular observable pipeline creates the conditions under which false-alarm rates are measurable, attributable, and reducible. A Production CV Readiness Assessment evaluates whether an existing surveillance CV architecture has the stage decomposition and observability instrumentation that sustained low false-alarm operation requires.